Introduction to Text to Speech AI Voice

Text to speech (TTS) AI voice technology refers to systems that convert written text into spoken audio using artificial intelligence. Over the past decade, TTS has evolved from robotic, monotone outputs to highly realistic, expressive, and human-like voices. In 2025, this technology is a cornerstone of digital transformation, powering accessible experiences, scaling content creation, and enabling new human-computer interactions across devices and platforms.

The importance of text to speech AI voice lies in its ability to break barriers—making digital content accessible to people with disabilities, supporting multilingual communication, and automating voice experiences in business, education, and entertainment. As TTS solutions become more sophisticated and customizable, they are reshaping how we interact with machines and consume information on the web, in mobile apps, and within enterprise workflows.

How Text to Speech AI Voice Works

A Brief History of TTS Technology

Early TTS systems relied on rule-based algorithms and concatenative synthesis, which pieced together pre-recorded snippets of human speech. While functional, their output lacked natural intonation, rhythm, and emotion.

Deep Learning and Neural Networks in Modern TTS

The advent of deep learning, especially neural network models like WaveNet and Tacotron, revolutionized speech synthesis. These models learn the nuances of human speech from vast audio datasets, enabling them to generate voices that sound remarkably natural, with subtle inflections and realistic prosody.

Overview of the Speech Synthesis Pipeline

The modern TTS pipeline typically involves:

- Text preprocessing: Cleansing and normalizing input text.

- Linguistic analysis: Identifying phonemes, stress, and intonation.

- Acoustic modeling: Using neural networks to predict audio features from linguistic input.

- Vocoder step: Synthesizing raw audio waveforms.
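
As a rough illustration of the first two stages, the sketch below normalizes text and maps words to phonemes with a tiny hand-written lexicon. The abbreviation table, the digit expansion, and the lexicon are assumptions invented for this example, not part of any real TTS engine. Production systems use much larger rule sets and learned models. The acoustic-model and vocoder stages are left out because they require trained networks.

```python
import re

# Hypothetical lookup tables, invented for this sketch.
ABBREVIATIONS = {"dr.": "doctor", "st.": "street", "etc.": "et cetera"}
DIGITS = ["zero", "one", "two", "three", "four",
          "five", "six", "seven", "eight", "nine"]
LEXICON = {  # word -> ARPAbet-style phonemes
    "hello": ["HH", "AH", "L", "OW"],
    "world": ["W", "ER", "L", "D"],
}


def normalize(text: str) -> str:
    """Text preprocessing: lowercase, expand abbreviations, spell out digits."""
    words = []
    for token in text.lower().split():
        token = ABBREVIATIONS.get(token, token)
        # Spell out each digit individually, e.g. "42" -> "four two".
        token = re.sub(r"\d", lambda m: f" {DIGITS[int(m.group())]} ", token)
        words.append(token)
    # Drop punctuation and collapse whitespace.
    cleaned = re.sub(r"[^a-z\s]", " ", " ".join(words))
    return " ".join(cleaned.split())


def to_phonemes(normalized: str) -> list[str]:
    """Linguistic analysis: look each word up, falling back to its letters."""
    phonemes = []
    for word in normalized.split():
        phonemes.extend(LEXICON.get(word, list(word.upper())))
    return phonemes


print(normalize("Dr. Smith lives at 42 Main St."))
print(to_phonemes(normalize("Hello, world!")))
```

Here `normalize("Dr. Smith lives at 42 Main St.")` returns `doctor smith lives at four two main street`. The phoneme sequence produced by `to_phonemes` is the kind of input an acoustic model would consume.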

Here's a simple example of using Python with the Google Cloud Text-to-Speech API:

```python
import os

from google.cloud import texttospeech

# Point the client library at a service-account key file.
os.environ["GOOGLE_APPLICATION_CREDENTIALS"] = "/path/to/credentials.json"

client = texttospeech.TextToSpeechClient()

# Describe what to say, which voice to use, and the output format.
input_text = texttospeech.SynthesisInput(text="Hello, world! This is a TTS AI voice demo.")
voice = texttospeech.VoiceSelectionParams(
    language_code="en-US",
    ssml_gender=texttospeech.SsmlVoiceGender.NEUTRAL,
)
audio_config = texttospeech.AudioConfig(audio_encoding=texttospeech.AudioEncoding.MP3)

response = client.synthesize_speech(
    input=input_text, voice=voice, audio_config=audio_config
)

# The response carries the encoded audio as raw bytes.
with open("output.mp3", "wb") as out:
    out.write(response.audio_content)
```
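
The example above hard-codes the credentials path for brevity. One alternative, sketched here on the assumption that the variable is exported by the shell or a deployment tool before the script runs, is to read it and fail early with a clear message. The helper name `require_env` is hypothetical.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable, or fail with a clear error."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(
            f"Environment variable {name} is not set; "
            "export it outside the source code instead of hard-coding it."
        )
    return value


if __name__ == "__main__":
    # GOOGLE_APPLICATION_CREDENTIALS is the variable used in the example above.
    try:
        path = require_env("GOOGLE_APPLICATION_CREDENTIALS")
        print(f"Using credentials file: {path}")
    except RuntimeError as err:
        print(err)
```

Keeping the path out of the source also keeps it out of version control, which is usually the first thing to get right when handling keys.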

For developers looking to integrate TTS with real-time communication features, leveraging a Voice SDK can streamline the process of adding live audio capabilities alongside synthesized speech.

Key Features of Modern AI Voice Generators

Realistic and Human-like Voices

Advancements in deep learning speech models have enabled TTS systems to produce voices with natural cadence, emotion, and dynamic range. Developers can now access AI voice generators whose speech is nearly indistinguishable from that of real humans, enhancing user experience across various applications. For those building interactive applications, integrating a JavaScript video and audio calling SDK can further enrich the user experience by combining TTS with seamless audio and video communication.

Multilingual and Multi-accent Support

Modern TTS engines offer support for dozens of languages and regional accents. This empowers global businesses and content creators to reach broader audiences, enabling seamless localization and inclusivity in digital products. Integrating a Voice SDK can also help support multilingual live audio rooms for broader communication.

Voice Customization and Cloning Capabilities

Voice customization lets users adjust parameters like pitch, speech rate, and timbre. Voice cloning uses a few minutes of recorded audio to create synthetic voices that mimic specific individuals, which is useful for brand identity, voiceover continuity, or accessibility personalization. For advanced projects, developers can utilize a Python video and audio calling SDK to combine TTS with custom voice and video workflows.

Popular Applications of Text to Speech AI Voice

Content Creation & Voiceover

Text to speech AI voice tools streamline content production, enabling creators to generate high-quality voiceovers for videos, podcasts, and e-learning resources. This reduces reliance on human voice actors and accelerates project timelines. For creators who want to add live discussions or interviews, embedding a Voice SDK can facilitate real-time audio interactions.

Accessibility and Assistive Technologies

TTS is critical for accessibility, powering screen readers and assistive apps for users with visual impairments, dyslexia, or other disabilities. Realistic AI voices make digital content more engaging and easier to understand. Additionally, integrating a phone call API can help organizations provide accessible voice support directly to users.

Business and Customer Service

Businesses leverage AI voices in IVR systems, chatbots, and automated customer support, providing consistent and scalable voice interactions. Multilingual support ensures global reach, while customization allows for unique brand voices. Companies can further enhance their customer service by using a phone call API to automate and manage voice-based customer interactions efficiently.

Choosing the Right Text to Speech AI Voice Solution

Selecting the best TTS AI voice solution involves evaluating:

- Voice quality: Naturalness, emotion, and clarity

- Language support: Multilingual and accent coverage

- Customization: Ability to adjust voice, pitch, speed, and clone voices

- Cost: Pricing models, free tiers, and scalability

- API integration: Ease of implementation in your tech stack
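
To make the customization criterion concrete: many engines accept SSML, the W3C Speech Synthesis Markup Language, whose `prosody` element carries `rate` and `pitch` attributes. The sketch below builds such a document with the standard library only. The helper name `build_ssml` and its defaults are assumptions for illustration, and the attribute values an engine honors vary by provider, so check its documentation.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(text: str, rate: str = "medium", pitch: str = "medium") -> str:
    """Wrap text in an SSML <prosody> element with the given rate and pitch."""
    return (
        "<speak>"
        f"<prosody rate={quoteattr(rate)} pitch={quoteattr(pitch)}>"
        f"{escape(text)}"  # escape &, <, > so the text cannot break the markup
        "</prosody>"
        "</speak>"
    )


print(build_ssml("Terms & conditions apply.", rate="slow", pitch="+2st"))
```

With the Google Cloud client shown earlier, a string like this would be passed as `SynthesisInput(ssml=...)` in place of `text=...`.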

Security and ethical considerations are paramount, especially for voice cloning. Robust safeguards are needed to ensure synthetic voices aren’t used for impersonation or malicious purposes. For seamless integration, developers can embed video calling SDK solutions to add both TTS and live communication features to their platforms.

Implementation: How to Integrate Text to Speech AI Voice

Step-by-step Guide for Developers

Integrating TTS AI voice into a web app is straightforward with modern APIs. Here’s a step-by-step overview using JavaScript and the Web Speech API:

```javascript
const synth = window.speechSynthesis;
const utterance = new SpeechSynthesisUtterance("Welcome to the text to speech AI voice demo!");
utterance.lang = "en-US";
utterance.pitch = 1.2;
utterance.rate = 1;

synth.speak(utterance);
```

Steps:

- Access the Web Speech API in supported browsers.

- Create a SpeechSynthesisUtterance object with desired text.

- Set language, pitch, and rate for customization.

- Call synth.speak() to play the generated audio.

For more advanced use cases, integrate commercial APIs (Google, AWS, Azure) via SDKs, REST endpoints, or CLI tools. Always handle API keys securely. If you want to experiment with these features, you can try it for free and see how TTS and live audio can be combined in your projects.

Tips for Non-Developers Using Online Tools

Many platforms offer free text to speech services with intuitive interfaces. Typical steps:

- Paste or upload your text

- Select language, voice, and adjust settings like speed or pitch

- Preview, then download audio in formats like MP3 or WAV

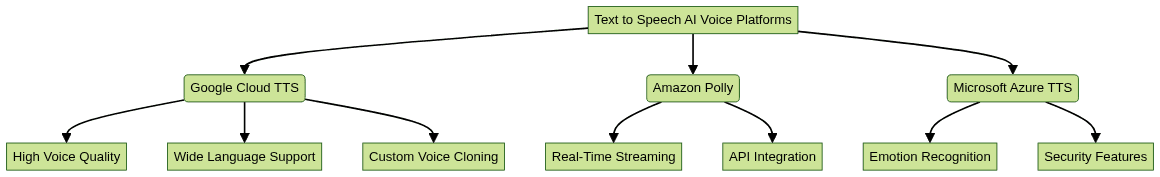

Popular online tools include Google Cloud TTS, Amazon Polly, and various specialized voice AI startups that offer real-time and batch processing. For those seeking to add interactive audio features without coding, a Voice SDK can provide prebuilt solutions for live audio rooms and conversations.

Future Trends in Text to Speech AI Voice

In 2025, TTS AI voice is advancing rapidly:

- Emotional expressiveness: Models can convey subtle emotional cues, making interactions more natural and empathetic.

- Real-time speech synthesis: Latency is dropping, enabling live voice responses in apps, games, and virtual assistants.

- Voice cloning: Synthetic voices are becoming harder to distinguish from real ones, which makes robust authentication and watermarking critical.
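
One common way applications reduce perceived latency is to split text into sentences and synthesize them one at a time, so playback of the first sentence can begin while later ones are still being generated. A minimal sketch, assuming a hypothetical `synthesize` callable that stands in for whatever TTS engine is used:

```python
import re
from typing import Callable, Iterator


def split_sentences(text: str) -> list[str]:
    """Split on whitespace that follows sentence-ending punctuation."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def stream_speech(text: str, synthesize: Callable[[str], bytes]) -> Iterator[bytes]:
    """Yield audio for each sentence as soon as it has been synthesized."""
    for sentence in split_sentences(text):
        yield synthesize(sentence)


def fake_synthesize(sentence: str) -> bytes:
    """Placeholder engine: returns the text as bytes instead of real audio."""
    return sentence.encode("utf-8")


for chunk in stream_speech("Hi there. How are you? Fine!", fake_synthesize):
    print(chunk)
```

The regular expression is deliberately naive and would split after abbreviations such as "Dr."; a real application would use a proper sentence segmenter.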

Challenges include deepfake detection, ethical use, and ensuring diverse voice representation. Solutions are emerging, such as voice watermarking, user consent layers, and open datasets that prioritize inclusivity. As these trends continue, leveraging a Voice SDK will be essential for developers aiming to stay ahead in the rapidly evolving voice technology landscape.

Conclusion

Text to speech AI voice technology is revolutionizing digital experiences across accessibility, content creation, and business automation. Developers and organizations in 2025 should explore TTS solutions to enhance user engagement, drive accessibility, and stay ahead in a voice-first digital landscape.
