Introduction to Training Custom LLM for Chatbot

Large language models (LLMs) have revolutionized conversational AI, enabling chatbots to deliver dynamic, human-like interactions. However, off-the-shelf models often lack domain expertise and personalized touch. Training a custom LLM for your chatbot bridges this gap, unlocking tailored user experiences. By adapting a model to specialized knowledge, language style, and business needs, you can create powerful, context-aware chatbots that excel in 2025’s competitive digital landscape.

Understanding Custom LLMs for Chatbots

A custom LLM is a language model fine-tuned on domain-specific data, behaviors, or linguistic styles. Unlike general-purpose models such as GPT-3.5 or the models behind ChatGPT, custom LLMs are tailored to understand the jargon, workflows, and user intents specific to their application. Standard LLMs perform well on general tasks, but they often falter in specialized fields such as law, medicine, or business support, where nuanced understanding is crucial.

For teams interested in enhancing chatbot capabilities with real-time communication, integrating a Video Calling API can further enrich user interactions, especially in support or telehealth scenarios.

Why Standard LLMs Fall Short

Standard LLMs are trained on vast, public datasets. This generality means they may:

- Misinterpret technical terms

- Provide irrelevant or generic answers

- Lack brand voice or business context

Custom LLM Use Cases

- Business: Automate support with knowledge of company policies and products

- Education: Tutor students with curriculum-aligned responses

- Support: Provide accurate troubleshooting based on in-house documentation

If your chatbot requires advanced audio features, consider leveraging a Voice SDK to enable seamless voice interactions alongside text-based conversations.

Data Preparation for Training Custom LLM for Chatbot

Gathering and Cleaning Domain-Specific Data

The foundation of a successful custom LLM is high-quality, relevant data. You can source:

- Text: Manuals, wikis, product documentation

- Code: Source repositories, code reviews

- Conversations: Chat logs, transcripts, email exchanges

For applications involving multimedia communication, you might also want to explore a Live Streaming API SDK to support live video or event-based interactions within your chatbot ecosystem.

Cleaning and Structuring Steps:

- Remove irrelevant or sensitive information

- Standardize formats (e.g., JSONL for text, CSV for tabular data)

- Deduplicate entries

- Label data (if supervised learning)
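The cleaning steps above can be sketched with the standard library alone. This is a minimal illustration rather than a full pipeline: the regular expressions, the `[EMAIL]` and `[PHONE]` placeholder tokens, and the `clean_records` and `to_jsonl` helpers are assumptions introduced here, and real redaction of sensitive data needs much broader coverage.

```python
import json
import re

# Hypothetical, deliberately simple patterns; production redaction needs more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace emails and phone-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def clean_records(raw_texts):
    """Normalize whitespace, redact, drop empties, and deduplicate in order."""
    seen = set()
    cleaned = []
    for raw in raw_texts:
        text = redact(" ".join(raw.split()))
        key = text.lower()  # case-insensitive duplicate detection
        if text and key not in seen:
            seen.add(key)
            cleaned.append({"text": text})
    return cleaned


def to_jsonl(records) -> str:
    """Serialize records as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


raw = [
    "Contact  support at help@example.com",
    "contact support at help@example.com",
    "",
    "Call +1 (555) 010-9999 for resets",
]
records = clean_records(raw)
print(to_jsonl(records))
```

Deduplicating after redaction and lowercasing means near-identical entries that differ only in case or spacing collapse into one record.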

Generating Synthetic Data with Prompts

If real data is scarce, generate synthetic samples using prompt engineering. This involves crafting prompts to elicit desired responses from a base LLM, creating new, relevant training data.

For developers looking to embed a video calling SDK directly into their chatbot interface, integrating such features can enhance user engagement and support richer conversational experiences.

Example: Synthetic Data Generation with Python (OpenAI API):

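```python
import os

from openai import OpenAI

# Read the key from the environment instead of hard-coding it
client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

prompt = "Q: How do I reset my company laptop?\nA:"
# Uses the openai>=1.0 client; the legacy openai.Completion.create call and
# the text-davinci-003 model are no longer available
response = client.chat.completions.create(
    model="gpt-3.5-turbo",
    messages=[{"role": "user", "content": prompt}],
    max_tokens=100,
    temperature=0.7,
)
print(response.choices[0].message.content)
```

Remember to remove sensitive data from prompts and keep API keys out of source code before going to production.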
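To turn single generations into a dataset, one option is to loop over seed questions and store each prompt with its generated answer. The sketch below is an assumption-laden illustration: `build_prompt`, `synthesize`, and the seed questions are hypothetical, and `fake_generate` is a stand-in for a real LLM call so the example runs offline.

```python
import json
from typing import Callable, Iterable


def build_prompt(question: str) -> str:
    """Format a seed question as a Q/A style prompt."""
    return f"Q: {question}\nA:"


def synthesize(questions: Iterable[str], generate: Callable[[str], str]) -> list:
    """Call `generate` once per question and collect prompt/completion pairs."""
    pairs = []
    for question in questions:
        prompt = build_prompt(question)
        completion = generate(prompt).strip()
        if completion:  # skip empty generations
            pairs.append({"prompt": prompt, "completion": completion})
    return pairs


def fake_generate(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption, for offline testing only)."""
    return " Placeholder answer for: " + prompt.splitlines()[0][3:]


seed_questions = [
    "How do I reset my company laptop?",
    "How do I request VPN access?",
]
dataset = synthesize(seed_questions, fake_generate)
for row in dataset:
    print(json.dumps(row))
```

Passing the generator in as a callable keeps the data-building logic testable without network access; in practice you would swap `fake_generate` for a function that calls your chosen model, and review the outputs before training on them.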

Fine-Tuning the LLM for Your Chatbot

Choosing the Right Base Model (OpenAI, Llama, etc.)

Popular LLMs for customization in 2025 include:

- OpenAI GPT-3.5/4: Powerful, robust, easy API

- Meta Llama (Llama 2): Openly available weights under Meta's community license, enterprise-friendly

- Google Gemini: Advanced context handling

For those developing chatbots in Python, utilizing a Python video and audio calling SDK can streamline the integration of real-time communication features alongside your custom LLM.

Comparison Table:

| Model | License | API Support | Training Cost | Community |
|---|---|---|---|---|
| GPT-3.5/4 | Commercial | Yes | $$ | Large |
| Llama 2 | Llama 2 Community License (open weights) | Yes | $ | Growing |
| Gemini | Commercial | Yes | $$ | Moderate |

Fine-Tuning Process Explained

Steps Overview

- Format Training Data (e.g., JSONL: { "prompt": ..., "completion": ... })

- Set Up Training Loop (library: Transformers, LlamaIndex, etc.)

- Run Training with Validation (split validation set)
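The first and last steps above can be sketched without any training library. This is a minimal example under stated assumptions: the `split_train_val` and `write_jsonl` helpers, the 80/20 split, the file names, and the placeholder examples are all hypothetical choices made for illustration.

```python
import json
import random
import tempfile
from pathlib import Path


def split_train_val(examples, val_fraction=0.2, seed=42):
    """Shuffle a copy of the examples and split off a validation set."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_val = max(1, int(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


def write_jsonl(path, examples):
    """Write one {"prompt": ..., "completion": ...} object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for ex in examples:
            f.write(json.dumps(ex, ensure_ascii=False) + "\n")


# Placeholder examples; replace with your cleaned domain data.
examples = [
    {"prompt": f"Q: Example question {i}?\nA:", "completion": f" Example answer {i}."}
    for i in range(10)
]
train, val = split_train_val(examples)

out_dir = Path(tempfile.mkdtemp())  # throwaway directory for the demo
write_jsonl(out_dir / "train.jsonl", train)
write_jsonl(out_dir / "val.jsonl", val)
print(len(train), len(val))
```

Keeping the validation set out of training is what makes the validation loss a useful signal for overfitting later on.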

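If your tech stack is based on JavaScript, you can easily add communication features with a JavaScript video and audio calling SDK, making your chatbot more interactive and versatile.

Python Example: Grounding the Model in Your Data with LlamaIndex

Note that this example builds a retrieval index over your documents rather than updating model weights, so the base LLM answers from your data at query time.

```python
# Import path for llama-index 0.10 and later
from llama_index.core import SimpleDirectoryReader, VectorStoreIndex

# Load your cleaned data
documents = SimpleDirectoryReader("data/cleaned/").load_data()

# Build a vector index over the documents (the default embedding model
# calls OpenAI, so OPENAI_API_KEY must be set)
index = VectorStoreIndex.from_documents(documents)

# Persist the index so the chatbot can load it later
index.storage_context.persist(persist_dir="models/custom_llm_index")
```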

For mobile-first solutions, integrating a React Native video and audio calling SDK allows you to deliver seamless audio and video experiences within your chatbot app.

Reinforcement Learning from Human Feedback (RLHF)

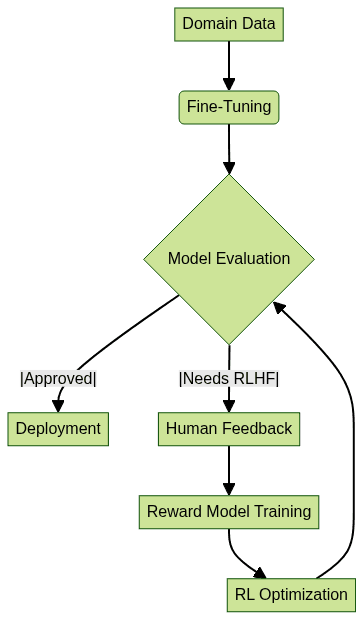

RLHF further refines LLM outputs by leveraging user or expert feedback. After initial fine-tuning, you:

- Collect human ratings on generated responses

- Train a reward model to score outputs

- Use reinforcement learning (e.g., PPO) to improve
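To make the reward-model step more concrete, the sketch below turns human ratings into preference pairs and computes a pairwise loss of the form -log(sigmoid(r_chosen - r_rejected)), a common formulation for reward models. It is a toy illustration, not a training implementation: the helper names, sample responses, and scalar rewards are assumptions, and a real reward model would be a neural network trained with a deep learning framework.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # softplus(-margin) equals -log(sigmoid(margin)); this form avoids overflow
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def ratings_to_pairs(rated):
    """Turn (response, rating) tuples for one prompt into (chosen, rejected) pairs."""
    pairs = []
    for i, (resp_a, score_a) in enumerate(rated):
        for resp_b, score_b in rated[i + 1:]:
            if score_a > score_b:
                pairs.append((resp_a, resp_b))
            elif score_b > score_a:
                pairs.append((resp_b, resp_a))
            # ties carry no preference signal and are skipped
    return pairs


# Hypothetical human ratings (1-5) for three responses to the same prompt.
rated = [
    ("Open Settings, choose Recovery, then Reset this PC.", 5),
    ("Try turning it off and on again.", 2),
    ("I don't know.", 1),
]
pairs = ratings_to_pairs(rated)
print(len(pairs), "preference pairs")

# The loss is small when the reward model already ranks the pair correctly
print(preference_loss(2.0, 0.5) < preference_loss(0.5, 2.0))
```

The reinforcement learning step (for example PPO) then optimizes the chatbot's policy against the trained reward model; that part is out of scope for this sketch.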

When to Use RLHF

- When chatbot responses must align with nuanced user needs or brand tone

- To mitigate bias and improve factuality

For chatbots that need to handle telephony or integrate with calling features, exploring a phone call API can help you expand your bot’s capabilities to include voice calls and advanced audio interactions.

Implementation: Building and Integrating Your Custom Chatbot

Setting Up the Environment

Requirements:

- Python 3.10+

- pip

- Virtualenv

- Dependencies: openai, llama-index, gradio
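A quick way to confirm the requirements above is a small check script. This is a sketch under assumptions: the `missing_modules` and `python_is_supported` helpers are hypothetical, and the import names (`openai`, `llama_index`, `gradio`) are the usual module names for those packages.

```python
import importlib.util
import sys

# Import names for the dependencies listed above (pip names may differ)
REQUIRED_MODULES = ["openai", "llama_index", "gradio"]


def missing_modules(module_names):
    """Return the names from module_names that cannot be found for import."""
    return [name for name in module_names if importlib.util.find_spec(name) is None]


def python_is_supported(minimum=(3, 10)):
    """Check the running interpreter against a minimum (major, minor) version."""
    return sys.version_info >= minimum


print("Python 3.10+:", python_is_supported())
print("Missing modules:", missing_modules(REQUIRED_MODULES))
```

Running this inside the activated virtual environment shows at a glance whether anything still needs to be installed with pip.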

Sample Folder Structure:

```
project-root/
├── data/
│   └── cleaned/
├── models/
├── chatbot/
│   └── app.py
└── requirements.txt
```

Integrating the Fine-Tuned LLM into a Chatbot

You can deploy your custom LLM via APIs or integrate with UI frameworks like Gradio for rapid prototyping.

If you’re eager to experiment with these integrations and see how a custom LLM chatbot can work for your business, you can try it for free and start building your own solution today.

Example: Loading and Querying Your Custom LLM in a Gradio Chatbot

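```python
import gradio as gr
# Import path for llama-index 0.10 and later
from llama_index.core import StorageContext, load_index_from_storage

# Load the index that was persisted to disk earlier
storage_context = StorageContext.from_defaults(persist_dir="models/custom_llm_index")
index = load_index_from_storage(storage_context)
query_engine = index.as_query_engine()


def chatbot_response(user_input):
    # Response objects are not plain strings; convert for the text output
    return str(query_engine.query(user_input))


iface = gr.Interface(fn=chatbot_response, inputs="text", outputs="text")
iface.launch()
```

Testing and Debugging: Use test prompts, logging, and error handling to ensure robust integration. Always validate security around API keys and user data.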
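One way to add the logging and error handling mentioned above is to wrap the query function. The sketch below is illustrative only: `safe_response`, the fallback messages, and the stub query functions are assumptions, and `query_fn` stands for whatever callable your chatbot uses to produce an answer.

```python
import logging

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("chatbot")

FALLBACK_MESSAGE = "Sorry, something went wrong. Please try again."
EMPTY_INPUT_MESSAGE = "Please enter a question."


def safe_response(query_fn, user_input):
    """Validate input, call query_fn, and log failures instead of raising."""
    text = (user_input or "").strip()
    if not text:
        return EMPTY_INPUT_MESSAGE
    try:
        answer = str(query_fn(text))
    except Exception:
        # Log the stack trace but not the raw input, which may hold user data
        logger.exception("Query failed (input length=%d)", len(text))
        return FALLBACK_MESSAGE
    logger.info("Answered query of length %d", len(text))
    return answer


def working_query(text):
    """Stub query function used as a test prompt target."""
    return f"Echo: {text}"


def broken_query(text):
    """Stub that simulates a backend failure."""
    raise RuntimeError("backend unavailable")


print(safe_response(working_query, "How do I reset my laptop?"))
print(safe_response(broken_query, "How do I reset my laptop?"))
```

In a Gradio app, a function like `chatbot_response` could delegate to `safe_response` so that users see a friendly message while the full error lands in your logs.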

Best Practices and Common Pitfalls

- Prompt Engineering: Craft clear, context-rich prompts. Avoid ambiguity.

- Avoiding Overfitting: Use diverse, representative data. Monitor validation loss.

- Data Privacy and Security: Anonymize sensitive info, use secure storage.

- Scaling: Containerize deployments (Docker, K8s) and use API gateways for traffic management.
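Monitoring validation loss, as suggested in the overfitting tip above, is often paired with early stopping. The following is a minimal sketch of that idea: the `early_stopping_epoch` helper, the `patience` and `min_delta` parameters, and the loss values are illustrative assumptions, not measurements from a real run.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the epoch index at which training would stop, or None if it never does."""
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # Validation loss improved: remember it and reset the counter
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None


# Made-up validation losses: improving at first, then drifting upward
val_losses = [0.90, 0.70, 0.65, 0.66, 0.68, 0.71]
print(early_stopping_epoch(val_losses))
```

A rising validation loss while training loss keeps falling is the usual sign that the model is memorizing the training data rather than generalizing.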

Evaluating and Improving Your Custom LLM Chatbot

Key Metrics:

- Accuracy: Are answers correct and relevant?

- Relevance: Do responses fit context and user intent?

- User Satisfaction: Collect feedback via surveys or in-app ratings.
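These metrics can be tracked with a small evaluation harness. The sketch below is a simplified assumption of how that might look: keyword matching is only a rough proxy for accuracy, and the `keyword_accuracy` and `satisfaction_score` helpers, the stub bot, and the test cases are all hypothetical.

```python
from statistics import mean


def keyword_accuracy(answer_fn, test_cases):
    """Fraction of cases whose answer contains every expected keyword."""
    hits = 0
    for question, keywords in test_cases:
        answer = answer_fn(question).lower()
        if all(keyword.lower() in answer for keyword in keywords):
            hits += 1
    return hits / len(test_cases)


def satisfaction_score(ratings, scale_max=5):
    """Mean user rating normalized to the 0-1 range."""
    return mean(ratings) / scale_max


# Stub bot standing in for the real chatbot (assumption, for illustration)
CANNED_ANSWERS = {
    "How do I reset my laptop?": "Open Settings, then Recovery, then Reset.",
    "How do I get VPN access?": "Please contact your manager.",
}


def stub_bot(question):
    return CANNED_ANSWERS.get(question, "I'm not sure.")


test_cases = [
    ("How do I reset my laptop?", ["settings", "recovery"]),
    ("How do I get VPN access?", ["IT portal"]),
]
print("accuracy:", keyword_accuracy(stub_bot, test_cases))
print("satisfaction:", satisfaction_score([5, 4, 3]))
```

Running the same test cases after every retraining gives a simple regression check; human review is still needed for relevance and tone.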

Continuous Improvement:

- Regularly retrain with new data

- Monitor production logs for edge cases

- Update prompts and reward models as business needs evolve

Use Cases and Success Stories

- Retail Support Bot: A custom LLM trained on product manuals boosted first-contact resolution rates by 40%.

- Healthcare Assistant: Fine-tuned on medical FAQs, the bot reduced miscommunication and improved patient trust.

- EdTech Tutor: Integrated with curriculum materials, the chatbot delivered personalized study plans, increasing student engagement.

These examples underline the tangible business and user benefits of custom LLM-powered chatbots.

Conclusion: The Future of Custom LLMs for Chatbots

As LLM technology evolves in 2025 and beyond, custom training remains key to delivering exceptional chatbot experiences. Ongoing customization, RLHF, and integration with emerging tools will further enhance user satisfaction and business value. Embrace the future with tailored, intelligent conversational AI.
