Introduction to Real-Time LLM for Voice

In 2025, the demand for ultra-fast, human-like conversational AI is exploding. Real-time LLM for voice is at the forefront, enabling applications from smart assistants and live translation to enterprise customer service and accessible voice UI. Unlike legacy systems, real-time LLM for voice delivers low-latency, contextually rich, and natural-sounding interactions, redefining how users engage with technology. Whether you're building chatbots, deploying voice AI APIs, or scaling multi-turn dialogue systems, understanding real-time LLM for voice is critical to staying competitive in the evolving landscape of voice-driven applications.

What is a Real-Time LLM for Voice?

A real-time LLM for voice combines the rapid reasoning power of large language models (LLMs) with advanced speech-to-text (STT) and text-to-speech (TTS) capabilities. This convergence powers applications that require both ultra-fast response and deep contextual understanding. Unlike traditional TTS or ASR systems, which often rely on batch processing or rule-based engines, a real-time LLM for voice operates end-to-end, handling streaming audio, natural language understanding, and dynamic voice generation on the fly.

Key differentiators include:

- Low latency: Real-time LLM for voice processes audio in milliseconds, enabling interactive voice UIs and live conversation.

- Contextual awareness: By leveraging LLMs, these systems maintain conversational state, supporting multi-turn dialogue and sentiment monitoring.

- Scalability: Modern architectures support massive concurrent sessions, batch calling, and seamless SDK/API integration—crucial for production-ready AI voice deployments.

The shift from static, pre-recorded audio to intelligent, real-time LLM for voice unlocks new possibilities in conversational AI, live translation, and voice cloning, making it a cornerstone technology in 2025.

Key Technologies Behind Real-Time LLM for Voice

Large Language Models in Voice Applications

Large language models (LLMs) such as GPT-4o, LLaMA-Omni2, and Mist are engineered for deep natural language processing, making them ideal for powering real-time LLM for voice. These models:

- Interpret intent and sentiment from streaming speech.

- Manage context across multi-turn dialogues.

- Personalize responses, enhancing user experience.
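One way to picture multi-turn context management is a rolling window of recent turns that is sent to the model with every request. The sketch below is only an illustration: `ConversationState`, `max_turns`, and the message format are assumptions and do not belong to any particular LLM API.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    """Keeps the most recent turns so each request carries prior context."""
    max_turns: int = 6  # hypothetical window size
    turns: deque = field(default_factory=deque)

    def add(self, role: str, text: str) -> None:
        self.turns.append({"role": role, "content": text})
        # Drop the oldest turns once the window is full.
        while len(self.turns) > self.max_turns:
            self.turns.popleft()

    def as_messages(self) -> list:
        return list(self.turns)


state = ConversationState(max_turns=3)
state.add("user", "Book a table for two.")
state.add("assistant", "For what time?")
state.add("user", "Seven tonight.")
state.add("assistant", "Done. Anything else?")
print([t["role"] for t in state.as_messages()])  # ['assistant', 'user', 'assistant']
```

A fixed window is the simplest policy; production systems often summarize older turns instead of discarding them.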

With advancements in transfer learning and multilingual support, LLMs deliver human-like TTS and robust speech-to-text conversion, making them indispensable for conversational AI and production-ready AI voice systems. Integrating a Voice API can further streamline the deployment of these advanced language models in real-world applications.

Streaming Speech Synthesis and Autoregressive Decoders

A critical component of real-time LLM for voice is streaming speech synthesis, often powered by autoregressive decoders. These models generate audio samples frame-by-frame, enabling ultra-fast, natural voice output. For developers looking to add calling features, leveraging a phone call API can simplify the process of integrating voice communication into their applications.

Here's a simple Python example calling a hypothetical streaming TTS API:

```python
import requests

# Hypothetical endpoint and payload shape, shown for illustration only.
url = "https://api.voiceai.com/stream-tts"
payload = {"text": "Hello, how can I assist you today?", "voice": "en-US"}
headers = {"Authorization": "Bearer YOUR_API_KEY"}


def process_audio_chunk(chunk: bytes) -> None:
    """Placeholder: hand the chunk to a playback or processing pipeline."""


# stream=True leaves the body unread so chunks can be consumed as they arrive.
with requests.post(url, json=payload, headers=headers, stream=True, timeout=30) as response:
    response.raise_for_status()
    for chunk in response.iter_content(chunk_size=1024):
        if chunk:
            process_audio_chunk(chunk)
```

This approach allows for near real-time playback, essential for live voice assistants and chatbots.

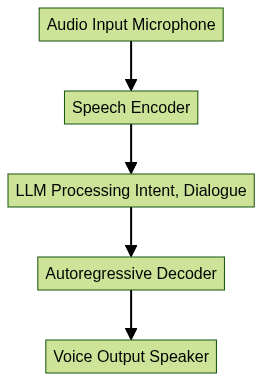

Speech Encoders and Real-Time Processing

Speech encoders transform raw audio signals into embeddings suitable for LLM processing. These encoders must operate with ultra-low latency to support real-time LLM for voice workflows. For those building cross-platform solutions, using a Python video and audio calling SDK or a JavaScript video and audio calling SDK can accelerate development and ensure robust integration.
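Before an encoder sees any audio, a streaming front end usually slices the incoming PCM stream into short, fixed-size frames. The following is a minimal sketch of that step, assuming 16 kHz, 16-bit mono audio and 20 ms frames; the function name `frames` and all three constants are illustrative choices, not values taken from a specific encoder.

```python
from typing import Iterable, Iterator

SAMPLE_RATE = 16_000   # assumed: 16 kHz mono
BYTES_PER_SAMPLE = 2   # assumed: 16-bit PCM
FRAME_MS = 20          # assumed frame length


def frames(chunks: Iterable[bytes], frame_ms: int = FRAME_MS) -> Iterator[bytes]:
    """Yield fixed-size PCM frames from arbitrarily sized network chunks."""
    frame_bytes = SAMPLE_RATE * frame_ms // 1000 * BYTES_PER_SAMPLE
    buffer = bytearray()
    for chunk in chunks:
        buffer.extend(chunk)
        # Emit every complete frame; keep the remainder for the next chunk.
        while len(buffer) >= frame_bytes:
            yield bytes(buffer[:frame_bytes])
            del buffer[:frame_bytes]


# Three uneven chunks totalling 1500 bytes give two 640-byte frames,
# with 220 bytes still buffered for the next chunk.
incoming = [b"\x00" * 500, b"\x00" * 300, b"\x00" * 700]
out = list(frames(incoming))
print(len(out), len(out[0]))  # 2 640
```

Because the function is a generator, each frame can be passed downstream as soon as it is complete rather than after the whole utterance has arrived.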

This architecture allows for seamless integration of speech-to-text, natural language understanding, and text-to-speech in a unified, production-ready AI voice pipeline.
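The unified pipeline can be pictured as three chained streaming stages. The sketch below uses stub functions (`transcribe`, `respond`, `synthesize`) that are purely hypothetical stand-ins for real STT, LLM, and TTS components; it only shows how generators let each stage start work before the previous one has finished.

```python
from typing import Iterator


def transcribe(audio_frames: Iterator[bytes]) -> Iterator[str]:
    """Stub STT stage: pretend each frame decodes to one word."""
    for frame in audio_frames:
        yield frame.decode("ascii")


def respond(words: Iterator[str]) -> Iterator[str]:
    """Stub LLM stage: emit a reply token as soon as each word arrives."""
    for word in words:
        yield word.upper()


def synthesize(tokens: Iterator[str]) -> Iterator[bytes]:
    """Stub TTS stage: turn each token into a chunk of 'audio' bytes."""
    for token in tokens:
        yield token.encode("ascii")


mic = iter([b"hello", b"there"])
speaker_chunks = list(synthesize(respond(transcribe(mic))))
print(speaker_chunks)  # [b'HELLO', b'THERE']
```

In a real system each stage would be an asynchronous network or model call, but the shape stays the same: no stage waits for a complete utterance.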

Practical Use Cases

Conversational AI Agents and Chatbots

Real-time LLM for voice powers the next generation of conversational AI, enabling fluid, natural interactions in customer support, personal assistants, and virtual agents. Multi-turn dialogue, sentiment monitoring, and voice cloning enhance the user experience, making interactions feel much closer to speaking with a human. By leveraging a Voice API, developers can rapidly deploy these conversational agents across platforms.

Real-Time Translation and Multilingual Support

Live translation is a major application for real-time LLM for voice. With multilingual LLMs and streaming speech synthesis, users can converse across languages in real time, breaking down communication barriers in global enterprises, travel, and accessibility solutions. For organizations looking to broadcast these interactions, a Live Streaming API SDK enables scalable and interactive live voice experiences.

Enterprise Voice Operations

Enterprises leverage real-time LLM for voice for batch calling, automated customer outreach, and sentiment analysis in large-scale operations. Aligning with compliance frameworks (SOC 2, HIPAA) helps keep these systems secure and trustworthy, while SDKs enable rapid deployment across platforms. Utilizing a Video Calling API can further enhance enterprise voice operations by providing seamless video and audio communication capabilities.

Building with Real-Time LLM for Voice: SDKs and APIs

Integrating Voice AI APIs

Modern voice AI APIs make it straightforward to add real-time LLM for voice to your stack. SDKs are available in Python, Node.js, and other languages, supporting fast prototyping and production deployment. For those seeking a robust Voice API, there are solutions that offer low-latency, scalable, and secure voice interactions.

Example: Integrating a real-time LLM for voice API in Python and Node.js

Python SDK:

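```python
# `voiceai` is used here as a placeholder; substitute your provider's SDK.
from voiceai import VoiceAI


def handle_audio(audio_chunk: bytes) -> None:
    """Placeholder: play or forward the synthesized audio."""


client = VoiceAI(api_key="YOUR_API_KEY")
stream = client.start_conversation(language="en-US")

# Each iteration yields the next chunk of response audio.
for audio_chunk in stream:
    handle_audio(audio_chunk)
```

Node.js SDK: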

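```javascript
// `voiceai` is used here as a placeholder; substitute your provider's SDK.
const VoiceAI = require('voiceai');
const client = new VoiceAI('YOUR_API_KEY');

// Placeholder: play or forward the synthesized audio.
const handleAudio = (audioChunk) => {};

const stream = client.startConversation({ language: 'en-US' });

// Forward each chunk of response audio as it arrives.
stream.on('data', (audioChunk) => {
  handleAudio(audioChunk);
});
```

Considerations: Latency, Scalability, and Compliance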

- Latency: For mission-critical applications like live translation and voice UI, keeping end-to-end latency below 300 ms is vital.

- Scalability: Cloud-native architectures and edge deployment ensure your real-time LLM for voice solution scales with demand, supporting thousands of concurrent sessions.

- Compliance: Production-ready AI voice systems that handle regulated data should meet the relevant standards, such as SOC 2 and, where health information is involved, HIPAA, with end-to-end encryption, audit logs, and data residency controls.
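To make the 300 ms target concrete, it helps to add up a per-stage budget. The numbers below are invented for illustration and should be replaced with measurements from your own pipeline; `check_budget` and the stage names are likewise assumptions.

```python
BUDGET_MS = 300  # end-to-end target named in the latency bullet

# Hypothetical per-stage latencies in milliseconds; measure your own.
stages = {
    "network_round_trip": 40,
    "speech_to_text": 80,
    "llm_first_token": 120,
    "tts_first_audio": 70,
}


def check_budget(stages: dict, budget_ms: int) -> tuple:
    """Return (total, headroom, slowest stage) for a latency budget."""
    total = sum(stages.values())
    slowest = max(stages, key=stages.get)
    return total, budget_ms - total, slowest


total, headroom, slowest = check_budget(stages, BUDGET_MS)
print(f"total={total} ms, headroom={headroom} ms, slowest={slowest}")
# total=310 ms, headroom=-10 ms, slowest=llm_first_token
```

With these example figures the pipeline misses the target by 10 ms, and the time to the first LLM token is the stage most worth optimizing.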

Challenges and Solutions in Real-Time Voice LLMs

- Latency and Naturalness: Achieving ultra-fast response times without sacrificing voice quality is challenging. Solutions include optimized speech encoders, parallelized inference, and hardware acceleration.

- Accuracy vs. Speed: Real-time LLM for voice must balance translation accuracy and response time, especially in noisy environments or edge cases. Adaptive beam search and contextual re-ranking can help.

- Data Privacy and Compliance: Secure handling of voice data is crucial. Use encrypted APIs, anonymize sensitive data, and adopt robust access controls to stay compliant with SOC 2, HIPAA, and GDPR.

- Extensibility and Edge Cases: Real-time LLM for voice systems must handle diverse accents, dialects, and domain-specific terminology. Continual model fine-tuning and user feedback loops drive improvements.
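As one small example of anonymizing sensitive data, transcripts can be scrubbed before they are logged or stored. This is a rough sketch using two deliberately simple regular expressions; the patterns, the `redact` helper, and the placeholder tags are assumptions, and real PII detection needs considerably more care than this.

```python
import re

# Deliberately simple patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(transcript: str) -> str:
    """Replace matches with a placeholder tag before the text is stored."""
    for label, pattern in PATTERNS.items():
        transcript = pattern.sub(f"[{label}]", transcript)
    return transcript


print(redact("Call me on +1 415 555 0100 or mail jo@example.com."))
# Call me on [PHONE] or mail [EMAIL].
```

Redacting at the transcript stage keeps raw identifiers out of logs and analytics while leaving the rest of the conversation usable.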

Future Trends in Real-Time LLM for Voice

As we advance into 2025, emerging models like LLaMA-Omni2, Mist, and GPT-4o are pushing the boundaries of real-time LLM for voice. Expect:

- Autonomous agents: AI-powered voice agents handling complex workflows in real time.

- Live translation: Seamless multilingual conversations with near-zero latency.

- Accessibility: Voice-driven applications empowering users with disabilities and enhancing digital inclusivity.

In summary, real-time LLM for voice is transforming the way we interact with machines, enabling richer, faster, and more human-like voice experiences. Staying abreast of these trends is essential for developers, product leaders, and enterprises aiming to deliver next-generation voice solutions.

FAQ