Introduction to NLP vs LLM vs AI Agents

Artificial intelligence is rapidly transforming the way software interacts with human language. Three prominent pillars of this evolution are Natural Language Processing (NLP), Large Language Models (LLMs), and AI agents. While NLP forms the foundation for understanding and processing language, LLMs like GPT-4 and Gemini have advanced the field with deep learning and contextual awareness. AI agents, in turn, build on both, offering autonomous, tool-using systems capable of complex, multi-step problem solving.

Understanding the differences and relationships among NLP, LLMs, and AI agents is crucial for developers and businesses seeking to leverage modern AI effectively. As the boundaries blur and technologies converge, knowing their strengths, limitations, and best use cases will be key to successful solutions in 2025.

What is NLP?

Natural Language Processing (NLP) is a subfield of artificial intelligence focused on enabling computers to understand, interpret, and generate human language. NLP combines computational linguistics, statistical modeling, and machine learning to process text and speech data. For developers building communication features, integrating a Python video and audio calling SDK can enhance applications with real-time audio and video capabilities alongside NLP-powered interactions.

Key NLP techniques include:

- Parsing: Analyzing grammatical structure to extract meaning.

- Named Entity Recognition (NER): Identifying entities like names, locations, and organizations in text.

- Sentiment Analysis: Determining the emotional tone behind words.

- Tokenization: Splitting text into words or phrases.

- Part-of-Speech Tagging: Assigning grammatical categories to words.
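To make two of these techniques concrete, here is a minimal sketch of tokenization and lexicon-based sentiment analysis using only the Python standard library. The word lists are a toy assumption for illustration, not a real sentiment resource, and production systems would typically rely on a trained model or an established NLP library instead.

```python
import re

# Toy sentiment lexicon: an assumption for illustration, not a real resource.
POSITIVE = {"great", "good", "love", "excellent"}
NEGATIVE = {"bad", "poor", "hate", "terrible"}


def tokenize(text: str) -> list[str]:
    """Split text into lowercase word tokens using a simple regex."""
    return re.findall(r"[a-z0-9']+", text.lower())


def sentiment(text: str) -> str:
    """Classify text by counting lexicon hits; ties are 'neutral'."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(tokenize("The support team was great!"))
print(sentiment("The support team was great!"))
print(sentiment("Terrible app, I hate the new update."))
```

A rule-based approach like this is easy to inspect, but it misses negation ("not great") and sarcasm, which is the kind of brittleness that motivated statistical and neural methods.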

Traditionally, NLP has powered applications such as:

- Chatbots: Basic rule-based or retrieval-based systems.

- Machine Translation: Language translation via statistical or neural models.

- Text Classification: Categorizing emails, reviews, or documents.

- Information Extraction: Summarizing or pulling structured data from unstructured text.

NLP’s rule-based and statistical approaches paved the way for more flexible, context-aware systems, but often struggled with ambiguity, nuance, and evolving language patterns.

What are LLMs?

Large Language Models (LLMs) are deep learning models—such as OpenAI’s GPT-4, Google’s Gemini, and Meta’s LLaMA—that have been trained on massive text corpora. LLMs use transformer architectures, which leverage attention mechanisms to understand relationships between words across long contexts. When building interactive applications, developers may benefit from a

javascript video and audio calling sdk

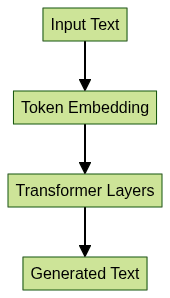

to seamlessly integrate communication features with LLM-powered interfaces.Transformer Architectures

Transformers revolutionized NLP by enabling parallel processing and context-aware learning. The key innovation, self-attention, allows LLMs to weigh the importance of different words regardless of their position:
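As a rough illustration of self-attention, the sketch below implements scaled dot-product attention in plain Python. It is deliberately simplified: the queries, keys, and values are all the raw input vectors (real transformers apply learned projection matrices and use multiple heads), and the three 2-dimensional "token embeddings" are made-up values.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: list[list[float]]) -> tuple[list[list[float]], list[list[float]]]:
    """Scaled dot-product self-attention with Q = K = V = x (no learned weights)."""
    d = len(x[0])
    weights = []
    for query in x:
        # Similarity of this token to every token, scaled by sqrt(d).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in x]
        weights.append(softmax(scores))
    # Each output vector is an attention-weighted average of all token vectors.
    outputs = [
        [sum(w * value[j] for w, value in zip(row, x)) for j in range(d)]
        for row in weights
    ]
    return outputs, weights


# Three hypothetical 2-dimensional token embeddings.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and shows how strongly one token attends to every other token. Nothing in the computation depends on where a token sits in the sequence, which is why transformers add positional information separately.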

LLM Capabilities

- Text Generation: Creating human-like responses and content.

- Context Awareness: Maintaining coherence in long conversations.

- Semantic Analysis: Understanding intent, tone, and meaning.

- Few-shot/Zero-shot Learning: Adapting to new tasks with minimal examples.
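Few-shot and zero-shot use usually comes down to how the prompt is assembled. The helper below is a hypothetical sketch (the function name and the "Input:/Output:" layout are assumptions, not a standard): it places a task description, a handful of worked examples, and the new input into one prompt string that could then be sent to any LLM.

```python
def build_few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Assemble an instruction, worked examples, and the new input into one prompt."""
    lines = [task, ""]
    for text, label in examples:
        lines += [f"Input: {text}", f"Output: {label}", ""]
    # The prompt ends with an open "Output:" for the model to complete.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    task="Classify the sentiment of each input as positive or negative.",
    examples=[
        ("I love this product.", "positive"),
        ("The app keeps crashing.", "negative"),
    ],
    query="Setup was quick and painless.",
)
print(prompt)
```

Passing an empty `examples` list yields a zero-shot prompt: the model sees only the task description and the new input.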

Limitations

- Hallucinations: Generating plausible but factually incorrect outputs.

- Lack of Real Autonomy: LLMs respond to prompts but don’t act independently.

- Black-box Behavior: Difficult to interpret or control decisions.

LLMs mark a leap from traditional NLP, unlocking richer applications but introducing new challenges.

What are AI Agents?

AI agents, or agentic AI, are autonomous software entities that perceive their environment, make decisions, and perform actions to achieve specific goals. Modern AI agents often integrate NLP, LLMs, and external tools, enabling them to:

- Understand complex instructions

- Maintain memory across interactions

- Plan multi-step actions

- Interact with APIs, databases, or devices

For cross-platform mobile projects, leveraging a React Native video and audio calling SDK can empower AI agents with real-time communication features, enhancing user engagement and collaboration.

Core Features of AI Agents

- Autonomy: Initiate actions without human intervention.

- Memory: Retain context, past interactions, and knowledge over time.

- Multi-step Reasoning: Break down objectives into actionable steps.

- Tool Use: Integrate with external systems (search, code execution, databases).
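The features above can be sketched as a small plan-act-remember loop. Everything here is hypothetical and simplified: the two tools are toy functions, and `plan` is a string-splitting stand-in for what would normally be an LLM call that decides which tools to use and in what order.

```python
from dataclasses import dataclass, field
from typing import Callable


def calculator(expression: str) -> str:
    """Hypothetical tool: add two integers written as 'a+b'."""
    left, right = expression.split("+")
    return str(int(left) + int(right))


def lookup(key: str) -> str:
    """Hypothetical tool: read from a tiny in-memory 'database'."""
    return {"order-42": "shipped"}.get(key, "unknown")


@dataclass
class Agent:
    tools: dict[str, Callable[[str], str]]
    memory: list[str] = field(default_factory=list)  # persists across run() calls

    def plan(self, goal: str) -> list[tuple[str, str]]:
        """Stand-in planner; a real agent would ask an LLM to produce these steps."""
        steps = []
        for part in goal.split(";"):
            tool, _, argument = part.strip().partition(" ")
            steps.append((tool, argument))
        return steps

    def run(self, goal: str) -> list[str]:
        """Plan, call each tool in order, and record every result in memory."""
        results = []
        for tool, argument in self.plan(goal):
            if tool not in self.tools:
                results.append(f"no tool named {tool!r}")
                continue
            result = self.tools[tool](argument)
            self.memory.append(f"{tool}({argument}) -> {result}")
            results.append(result)
        return results


agent = Agent(tools={"calc": calculator, "lookup": lookup})
print(agent.run("lookup order-42; calc 2+3"))
print(agent.memory)
```

Keeping the tool registry and the memory separate from the planner means the stand-in `plan` method could later be swapped for a model-backed one without touching the execution loop.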

Real-world Examples

- Virtual Assistants: Advanced chatbots (e.g., GPT-based assistants, Google Assistant).

- Autonomous Systems: Automated customer support, workflow automation bots, recommendation engines.

- Voice Interfaces: Multi-modal agents combining speech, vision, and text. For instance, integrating a Voice SDK enables seamless voice interactions in AI-powered applications.

AI agents represent the next layer in AI evolution, combining the language prowess of LLMs with practical autonomy and tool integration.

NLP vs LLM vs AI Agents: Key Differences

Understanding the distinctions between NLP, LLMs, and AI agents is vital for architecture and implementation decisions. Below is a comparative table highlighting essential differences:

| Feature | NLP | LLM | AI Agents |
|---|---|---|---|
| Autonomy | None/Low | None | High |
| Memory | Stateless/Basic | Limited (short-term) | Persistent/Advanced |
| Reasoning | Rule-based/statistical | Pattern recognition | Multi-step/Goal-oriented |
| Context Awareness | Limited | High | Very High |
| Tool Integration | Seldom | Possible via plugins | Native/Extensive |
| Learning | Supervised/Rules | Deep Learning | RL/Supervised/Hybrid |
| Example | Sentiment Analyzer | GPT-4, LLaMA | Autonomous Assistant |

For developers building advanced communication tools, a robust

Video Calling API

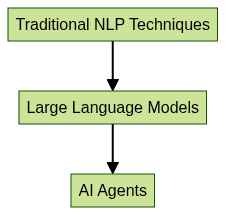

can be integrated with AI agents to facilitate seamless video interactions and collaborative features.Hierarchy: How NLP, LLM, and AI Agents Relate

This diagram illustrates how AI agents are built upon LLMs, which, in turn, extend traditional NLP techniques.

Practical Distinctions

For developers and businesses:

- NLP: Best for structured, well-understood language tasks with clear rules.

- LLMs: Suited for rich, context-heavy interactions and semantic understanding.

- AI Agents: Essential for automating workflows, integrating with tools, and handling complex, multi-step tasks.

If you’re developing mobile solutions, consider the Flutter video and audio calling API for integrating high-quality communication features, or explore Flutter WebRTC for real-time media streaming in Flutter apps.

Implementation: How to Choose and Combine NLP, LLM, and AI Agents

Selecting the right approach depends on task complexity, resource constraints, and desired autonomy. Consider:

- Task Structure: Is the problem rule-based, contextual, or one that requires autonomy?

- Data Availability: Do you have enough data for LLMs or will rules suffice?

- Integration Needs: Do you need external tool or API access?

- User Experience: Is it a simple classifier or a conversational, proactive assistant?
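One way to read these questions is as a simple decision heuristic. The sketch below is a hypothetical encoding of that idea, with field names and ordering chosen for illustration only; real projects will weigh cost, latency, and data availability as well.

```python
from dataclasses import dataclass


@dataclass
class Task:
    rule_based: bool    # can the task be solved with clear, fixed rules?
    needs_tools: bool   # does it need external APIs, databases, or devices?
    multi_step: bool    # does it require planning across several steps?


def recommend(task: Task) -> str:
    """Hypothetical heuristic mirroring the criteria above; not a formal rule."""
    if task.needs_tools or task.multi_step:
        return "AI agent"
    if task.rule_based:
        return "NLP"
    return "LLM"


print(recommend(Task(rule_based=True, needs_tools=False, multi_step=False)))
print(recommend(Task(rule_based=False, needs_tools=False, multi_step=False)))
print(recommend(Task(rule_based=False, needs_tools=True, multi_step=True)))
```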

For projects that require large-scale broadcasting or interactive sessions, a Live Streaming API SDK can be combined with AI agents to deliver engaging, real-time experiences to vast audiences.

Sample Code: Integrating LLM with an NLP Pipeline

Below is a Python snippet using Hugging Face transformers and spaCy for a hybrid pipeline. Note that GPT-2 is a small base model used here as a lightweight stand-in for an LLM: it continues the prompt rather than following the instruction, so the output is not a true summary.

```python
import spacy
from transformers import pipeline

# Load spaCy for NER (requires: python -m spacy download en_core_web_sm)
nlp = spacy.load("en_core_web_sm")

# Load a text-generation model; GPT-2 stands in for a larger LLM
generator = pipeline("text-generation", model="gpt2")

text = "OpenAI's GPT-4 is changing the AI landscape in 2025."

# Step 1: Named Entity Recognition
entities = [(ent.text, ent.label_) for ent in nlp(text).ents]
print("Entities:", entities)

# Step 2: Prompt the model; max_new_tokens bounds only the generated part
summary = generator(f"Summarize: {text}", max_new_tokens=40, num_return_sequences=1)
print("Summary:", summary[0]["generated_text"])
```

Use Cases

- Customer Support: Agents triage tickets, answer FAQs, and escalate issues. For telephony integration, a phone call API can enable direct voice communication between customers and support agents.

- Automation: LLMs parse emails, agents trigger workflows.

- Voice Interfaces: NLP converts speech to text, LLM interprets, agent executes commands.
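The voice interface flow above (speech to text, interpretation, execution) can be sketched as three chained stages. All three functions are stubs under stated assumptions: the "audio" is just UTF-8 text, keyword matching stands in for the LLM, and the device state is a plain dictionary.

```python
def speech_to_text(audio: bytes) -> str:
    """Stub for the NLP stage; a real system would call a speech recognizer."""
    return audio.decode("utf-8")  # pretend the audio is already a transcript


def interpret(transcript: str) -> dict[str, str]:
    """Stub for the LLM stage; keyword matching stands in for a model call."""
    text = transcript.lower()
    if "light" in text:
        action = "on" if " on" in text else "off"
        return {"intent": "set_light", "value": action}
    return {"intent": "unknown", "value": ""}


def execute(command: dict[str, str], state: dict[str, str]) -> str:
    """Agent stage: act on the interpreted command by updating device state."""
    if command["intent"] == "set_light":
        state["light"] = command["value"]
        return f"light turned {command['value']}"
    return "sorry, I did not understand"


state = {"light": "off"}
reply = execute(interpret(speech_to_text(b"Turn the light on")), state)
print(reply, state)
```

Because each stage only depends on the output shape of the previous one, a stub can be replaced with a real speech recognizer or model call without changing the other two.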

Synergies and Future Trends

The future lies in combining these technologies:

- Multi-modal Agents: Integrate vision, speech, and text.

- Memory-Augmented LLMs: Persistent context for ongoing tasks.

- Reinforcement Learning: Agents learn optimal strategies over time.

Challenges and Limitations of Each Approach

- NLP: Rule-based systems can be brittle and struggle with novel inputs or language nuances. Updating rules or retraining models for new patterns can be labor-intensive.

- LLMs: Prone to hallucinations (confident but incorrect responses), they lack true autonomy and may generate unpredictable outputs. Computational demands are significant.

- AI Agents: High implementation complexity, safety concerns, and the difficulty of robust real-world integration (e.g., error handling, external tool reliability) are ongoing challenges. Ensuring ethical behavior and security is critical as agents gain autonomy.

Conclusion: The Evolving Landscape of NLP, LLMs, and AI Agents

NLP, LLMs, and AI agents represent a spectrum of capabilities, from language understanding to autonomous, tool-using intelligence. Their convergence is reshaping software development in 2025, offering new opportunities—and challenges. Mastering their distinctions and synergies is essential for anyone building next-generation AI solutions.

Ready to build smarter, more connected applications? Try it for free and start integrating advanced communication and AI features today!
