Introduction to Cerebras Voice Agent

The rapid evolution of artificial intelligence has ushered in a new era of real-time voice applications. At the forefront is the Cerebras voice agent, a platform that enables developers to deliver ultra-fast conversational AI experiences. Cerebras Systems, renowned for its AI hardware accelerators and inference platforms, empowers voice AI agents that prioritize low-latency and high-throughput interactions.

In 2025, seamless real-time voice interaction is critical across industries—whether powering digital twins, AI avatars, or scalable customer support bots. The Cerebras voice agent ecosystem is designed to minimize delay, optimize the user experience, and integrate with the latest large language models (LLMs), speech-to-text (STT), and text-to-speech (TTS) technologies. This article explores the technical landscape, architecture, and practical steps to deploy your own Cerebras voice agent.

Understanding the Cerebras Voice Agent Ecosystem

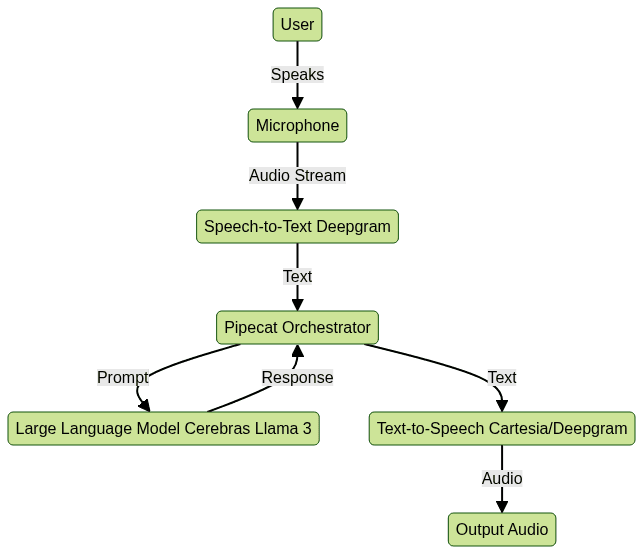

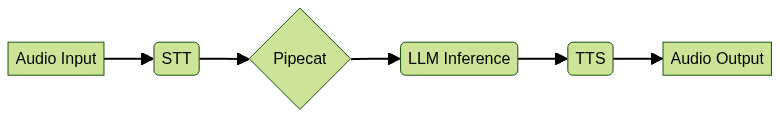

A robust Cerebras voice agent leverages multiple components for natural, real-time conversations:

- Large Language Models (LLMs): State-of-the-art models like Llama 3 (8B/70B), running on Cerebras infrastructure, bring contextual understanding and generative power.

- Speech-to-Text (STT): Services such as Deepgram convert audio input to text with minimal latency.

- Text-to-Speech (TTS): Solutions like Cartesia or Deepgram synthesize natural-sounding responses.

- Orchestration Tools: Frameworks like Pipecat manage the end-to-end workflow, while Cerebrium provides scalable, serverless deployment.

These components are integrated via APIs and open-source frameworks, supporting model quantization, OpenAI compatibility, and LiveKit for real-time streaming. The result is a flexible, modular stack capable of supporting education, sales, and customer support use cases.

For developers seeking to add real-time voice features, integrating a Voice SDK can streamline the process of building interactive audio experiences.

Why Low Latency Matters in Voice AI

Latency is a key determinant of the effectiveness of voice AI. For a Cerebras voice agent, low latency enables near-instantaneous responses, which are crucial for maintaining natural dialogue flow and high user engagement.

When evaluating solutions for real-time communication, many teams compare LiveKit alternatives to ensure optimal performance and flexibility for their use case.

Technical vs. Perceptual Latency

- Technical latency: The actual time taken by each component (STT, LLM inference, TTS) to process data.

- Perceptual latency: The delay as perceived by the user. Anything above roughly 300 ms typically degrades the experience.

Industry Benchmarks

- Best-in-class target: End-to-end latency < 500ms for real-time voice agents.

- Cerebras: Achieves industry-leading throughput and low time-to-first-token (TTFT), outperforming many GPU-based solutions. This is critical for applications like live sales coaching or educational tutoring where delays can break conversational immersion.
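
To make these figures concrete, the sketch below adds up per-stage technical latencies and compares the total with the 500 ms end-to-end target and the 300 ms perceptual threshold quoted above. The stage timings are hypothetical placeholders, not measurements, and `check_budget` is an illustrative helper name.

```python
# Hypothetical per-stage timings in milliseconds (placeholders, not measurements).
STAGE_LATENCY_MS = {
    "stt": 120,
    "llm_ttft": 150,
    "tts_first_audio": 130,
    "network": 60,
}

END_TO_END_TARGET_MS = 500      # best-in-class target quoted in this article
PERCEPTUAL_THRESHOLD_MS = 300   # delay above which users tend to notice lag


def check_budget(stages, target_ms=END_TO_END_TARGET_MS):
    """Return (total_ms, headroom_ms, slowest_stage) for a latency budget."""
    total = sum(stages.values())
    slowest = max(stages, key=stages.get)
    return total, target_ms - total, slowest


total, headroom, slowest = check_budget(STAGE_LATENCY_MS)
print(f"total={total} ms, headroom={headroom} ms, slowest stage={slowest}")
print("above perceptual threshold:", total > PERCEPTUAL_THRESHOLD_MS)
```

Listing the slowest stage alongside the total is a deliberate choice: it shows where optimization effort is likely to pay off first.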

For applications that require direct calling capabilities, integrating a phone call API can further enhance user engagement and communication efficiency.

Cerebras Inference: Powering Real-Time Voice Agents

The Cerebras platform is engineered for ultra-fast LLM inference, enabling developers to build voice agents with minimal delay. By leveraging the Cerebras Wafer-Scale Engine (WSE) and optimized software stacks, developers can run large models such as Llama 3-70B with remarkable efficiency.

For those building more complex solutions, a Live Streaming API SDK can be integrated to support interactive, large-scale audio and video events alongside voice AI.

TTFT and TPS in Conversational Pipelines

- TTFT (Time-to-First-Token): Measures how quickly the model produces the first output token after receiving a prompt. Lower TTFT means the user hears a response quicker.

- TPS (Tokens per Second): Indicates how fast the model can continue generating tokens, impacting the overall response speed.

A typical conversational AI pipeline involves chaining STT, LLM, and TTS. Cerebras reduces bottlenecks at the LLM stage, vital for real-time voice applications.
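
As a rough way to see how the two metrics combine, the time to finish a reply can be approximated as TTFT plus the remaining tokens divided by TPS. The sketch below applies that approximation. It ignores network, STT, and TTS time, and the figures passed in are illustrative rather than measured.

```python
def generation_time_s(ttft_s: float, tps: float, n_tokens: int) -> float:
    """Approximate seconds to generate n_tokens: first token after ttft_s,
    then the remaining tokens at a steady tps tokens per second."""
    if n_tokens <= 0:
        return 0.0
    if tps <= 0:
        raise ValueError("tps must be positive")
    return ttft_s + (n_tokens - 1) / tps


# Illustrative inputs: 200 ms TTFT, 100 tokens/sec, a 64-token reply.
estimate = generation_time_s(ttft_s=0.2, tps=100.0, n_tokens=64)
print(f"estimated generation time: {estimate:.2f} s")
```

When TTS can start speaking before the full reply is generated, TTFT tends to dominate the delay the user perceives, while TPS mainly determines whether generation keeps ahead of playback.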

If your application is built with Python, leveraging a Python video and audio calling SDK can accelerate development and ensure robust, cross-platform communication features.

Real-World Benchmark

In public benchmarks (2025), Cerebras LLMs consistently deliver TTFTs under 200ms and TPS rates exceeding 100 tokens/sec for Llama 3-8B, even in high-concurrency scenarios.

Example: API Call to Cerebras LLM

Below is a Python example of making a real-time inference call to a Cerebras LLM endpoint:

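```python
import requests

# Endpoint path and model name are reproduced from this article;
# confirm both against the current Cerebras API reference before use.
API_URL = "https://api.cerebras.ai/v1/llm/infer"
API_KEY = "your_cerebras_api_key"

payload = {
    "model": "llama3-8b",
    "prompt": "What is the weather like today?",
    "max_tokens": 64,  # cap the reply length to keep latency low
}
headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json",
}

# A timeout keeps a stalled request from blocking the voice pipeline.
response = requests.post(API_URL, json=payload, headers=headers, timeout=10)
response.raise_for_status()  # surface HTTP errors instead of parsing an error body
print(response.json())
```

Building Your Own Cerebras Voice Agent: Step-by-Step Guide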

Setting Up the Environment

To start building a Cerebras voice agent, set up your environment as follows:

- Sign up for Cerebrium to deploy serverless AI endpoints and manage resources.

- Obtain API keys for Cerebras LLM, Deepgram (STT/TTS), and Cartesia (if using).

- Install dependencies: The recommended stack includes Python 3.9+, requests, deepgram-sdk, and pipecat (published on PyPI as pipecat-ai) for orchestration.

```shell
pip install requests deepgram-sdk pipecat-ai
```

For real-time audio features, consider integrating a Voice SDK to simplify the implementation of live audio rooms and enhance user interaction.

Data Pipeline: Audio to Text

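For real-time STT, Deepgram provides a reliable, low-latency API. If your use case involves phone-based interactions, adding a phone call API can help bridge traditional telephony with modern AI-driven workflows.

The following snippet sends a buffered audio clip to Deepgram's prerecorded transcription endpoint and returns the transcript. A production agent would typically use Deepgram's live streaming interface instead, so that transcription overlaps with speech:

```python
import asyncio

# Written against the deepgram-sdk 2.x interface; later major versions
# expose a different client API.
from deepgram import Deepgram

DG_API_KEY = "your_deepgram_api_key"
dg_client = Deepgram(DG_API_KEY)


async def transcribe_audio(audio_stream):
    """Transcribe WAV audio held in memory and return the top transcript."""
    source = {"buffer": audio_stream, "mimetype": "audio/wav"}
    response = await dg_client.transcription.prerecorded(source)
    return response["results"]["channels"][0]["alternatives"][0]["transcript"]
```

Running LLM Inference with Cerebras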

Once you have the transcribed text, you can run inference on a Cerebras-hosted LLM (such as Llama 3) over HTTP. Because each request in this example carries a single prompt, any conversational context has to be included in that prompt.

Developers looking to add interactive voice features can also benefit from a Voice SDK, which offers tools for building scalable, real-time audio applications.

```python
import requests

# Endpoint path, model name, and response field are reproduced from this
# article; confirm them against the current Cerebras API reference.
API_URL = "https://api.cerebras.ai/v1/llm/infer"
API_KEY = "your_cerebras_api_key"


def query_llm(prompt, model="llama3-8b"):
    """Send one prompt to the LLM endpoint and return the generated text."""
    payload = {
        "model": model,
        "prompt": prompt,
        "max_tokens": 128,
    }
    headers = {
        "Authorization": f"Bearer {API_KEY}",
        "Content-Type": "application/json",
    }
    response = requests.post(API_URL, json=payload, headers=headers, timeout=10)
    response.raise_for_status()
    return response.json()["response"]
```
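
One simple way to preserve context with a single-prompt endpoint like the one above is to fold recent turns into the prompt text. The sketch below is an assumption about how that could be done: `build_prompt`, the `User:`/`Assistant:` labels, the default system line, and the `max_turns` cutoff are illustrative choices, not part of any Cerebras API.

```python
def build_prompt(history, user_text, max_turns=4,
                 system="You are a concise, friendly voice assistant."):
    """Fold the last max_turns (user, assistant) pairs into one prompt string."""
    recent = history[-max_turns:] if max_turns > 0 else []
    lines = [system]
    for user_msg, assistant_msg in recent:
        lines.append(f"User: {user_msg}")
        lines.append(f"Assistant: {assistant_msg}")
    lines.append(f"User: {user_text}")
    lines.append("Assistant:")  # cue the model to answer as the assistant
    return "\n".join(lines)


history = [("Hi", "Hello! How can I help?"),
           ("What's my order status?", "It shipped yesterday.")]
print(build_prompt(history, "When will it arrive?", max_turns=1))
```

Capping the number of turns keeps the prompt short, which matters for voice agents because longer prompts generally take longer to process before the first token appears.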

Text-to-Speech Synthesis

For TTS, you can use Cartesia or Deepgram's TTS API, optimized for low-latency streaming. Key API considerations:

- Choose neural voices for naturalness.

- Stream audio in small chunks for real-time playback.

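To further enhance your voice agent, integrating a Voice SDK can provide seamless audio streaming and advanced moderation features.

Example using Deepgram TTS:

```python
import requests

DG_API_KEY = "your_deepgram_api_key"
DG_SPEAK_URL = "https://api.deepgram.com/v1/speak"


def synthesize_speech(text, model="aura-asteria-en"):
    """Return synthesized audio bytes for text from Deepgram's speak endpoint."""
    headers = {
        "Authorization": f"Token {DG_API_KEY}",
        "Content-Type": "application/json",
    }
    # The voice is chosen with the `model` query parameter; the default here is
    # an example Deepgram voice, so check the current voice list before relying on it.
    response = requests.post(
        DG_SPEAK_URL,
        params={"model": model},
        json={"text": text},
        headers=headers,
        timeout=10,
    )
    response.raise_for_status()
    return response.content  # audio bytes; format depends on the requested encoding
```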
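
The advice to stream audio in small chunks can be sketched with a plain generator. This is an illustration only: `iter_audio_chunks` and `stream_to_sink` are hypothetical helpers, the 4096-byte chunk size is an arbitrary starting point, and the `play` callback stands in for whatever audio sink the application uses.

```python
from typing import Callable, Iterator


def iter_audio_chunks(audio: bytes, chunk_size: int = 4096) -> Iterator[bytes]:
    """Yield consecutive slices of audio, each at most chunk_size bytes."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(audio), chunk_size):
        yield audio[start:start + chunk_size]


def stream_to_sink(audio: bytes, play: Callable[[bytes], None],
                   chunk_size: int = 4096) -> int:
    """Send audio to the sink chunk by chunk; return the number of chunks sent."""
    sent = 0
    for chunk in iter_audio_chunks(audio, chunk_size):
        play(chunk)
        sent += 1
    return sent


# Stand-in sink that just collects chunks; a real one would write to a speaker
# or a real-time audio track.
received = []
count = stream_to_sink(b"\x00" * 10000, received.append)
print(count, [len(c) for c in received])
```

When the audio comes from an HTTP response, `requests` offers a similar pattern: passing `stream=True` and iterating `response.iter_content(chunk_size=...)` yields chunks as they arrive instead of waiting for the full body.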

Orchestrating the Workflow with Pipecat

Pipecat acts as the workflow orchestrator, connecting STT, LLM, and TTS into a seamless pipeline. It handles async task management, context passing, and error handling.

For those exploring alternatives to LiveKit, reviewing LiveKit alternatives can help identify the best fit for real-time orchestration and streaming requirements.

A simplified, illustrative mapping of the pipeline stages might look like this (in practice, Pipecat pipelines are assembled in Python code rather than loaded from a file like this):

```json
{
  "stt": "deepgram",
  "llm": "cerebras_llama3",
  "tts": "deepgram_tts",
  "orchestrator": "pipecat"
}
```
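
Independently of Pipecat's own API, the hand-off from STT to LLM to TTS that an orchestrator manages can be sketched with plain asyncio. The three stage functions below are stand-in stubs (they do not call Deepgram or Cerebras), and the names `handle_turn` and `fallback_reply` are hypothetical. The point is only to show stages awaited in sequence, with a timeout and a fallback reply on failure.

```python
import asyncio


async def stt(audio: bytes) -> str:
    await asyncio.sleep(0)              # stand-in for a speech-to-text call
    return "what is the weather like today"


async def llm(prompt: str) -> str:
    await asyncio.sleep(0)              # stand-in for an LLM inference call
    return f"You asked: {prompt}."


async def tts(text: str) -> bytes:
    await asyncio.sleep(0)              # stand-in for a text-to-speech call
    return text.encode("utf-8")


async def handle_turn(audio: bytes, timeout_s: float = 2.0,
                      fallback_reply: str = "Sorry, please say that again.") -> bytes:
    """Run one conversational turn: audio in, synthesized audio out."""
    try:
        transcript = await asyncio.wait_for(stt(audio), timeout_s)
        reply = await asyncio.wait_for(llm(transcript), timeout_s)
    except asyncio.TimeoutError:
        reply = fallback_reply          # keep the conversation moving on timeout
    return await tts(reply)


result = asyncio.run(handle_turn(b"fake-audio"))
print(result.decode("utf-8"))
```

A framework such as Pipecat adds streaming between stages, interruption handling, and transport integration on top of this basic shape, which is why the sequential version here should be read as a teaching sketch only.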

Deployment and Scaling with Cerebrium

Deploying your Cerebras voice agent serverlessly with Cerebrium allows you to:

- Scale elastically based on concurrent sessions

- Monitor latency and throughput in real time

- Automate resource allocation for cost and performance optimization

If you're planning to host large-scale events or interactive sessions, integrating a Live Streaming API SDK can help you reach broader audiences with minimal latency.

Cerebrium integrates with monitoring tools and supports rolling updates, ensuring your voice agent remains highly available and performant even under heavy load.
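
Whatever monitoring tool is used, tail latency is usually more informative than the average. The sketch below computes nearest-rank percentiles over a list of per-request latencies. The sample values are made up, and `percentile` is a hypothetical helper rather than a Cerebrium feature.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)   # 1-based rank
    return ordered[rank - 1]


# Made-up end-to-end latencies in milliseconds for ten requests.
latencies_ms = [410, 380, 455, 390, 620, 402, 398, 415, 440, 1200]
print("p50:", percentile(latencies_ms, 50), "ms")
print("p95:", percentile(latencies_ms, 95), "ms")
```

In this made-up sample the median sits well under the 500 ms target while the 95th percentile does not, which is the kind of gap an average would hide.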

Advanced Topics: Customization, Latency Optimization, and Use Cases

Model Quantization and Hardware Acceleration

Cerebras supports quantized LLMs for reduced memory footprint and faster inference. Hardware acceleration via Wafer-Scale Engine ensures minimal TTFT and consistent TPS.
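
The memory effect of quantization is simple arithmetic: weight storage is roughly the parameter count times the bytes per parameter. The sketch below works this out for the Llama 3 sizes mentioned earlier. It covers weights only (no KV cache or activations), and the bit widths are generic examples, not a statement of which formats Cerebras uses.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight storage in GB (10^9 bytes) for a model."""
    return params_billions * bits_per_param / 8


for name, size_b in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for bits in (16, 8, 4):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(size_b, bits):.0f} GB")
```

Halving the bit width halves the bytes that must be read per generated token, which is one reason quantized models can also decode faster on memory-bound hardware.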

Fine-Grained Voice Controls

Modern TTS APIs offer parameters for emotion, pitch, and speed. This allows building digital twins or AI avatars with distinct personalities, enhancing user engagement.

For developers who prefer Python, a Python video and audio calling SDK offers a streamlined approach to integrating both video and audio calling into your AI-powered applications.

Real-World Use Cases

- Sales Coaches: Real-time objection handling and script suggestions

- Tutors: Adaptive, conversational learning experiences

- Customer Support Bots: Instantaneous, context-aware responses

These use cases benefit from high-throughput inference and workflow orchestration, driving business value with minimal engineering friction.

Conclusion and Next Steps

The Cerebras voice agent ecosystem in 2025 empowers developers to create ultra-fast, scalable conversational AI. With advanced LLMs, low-latency pipelines, and seamless orchestration, you can build next-generation voice bots for any domain. Start experimenting with Cerebras APIs, Pipecat, and Cerebrium today—your users will notice the difference in every interaction.
