Introduction to LLM Voice Assistants

The field of voice AI has evolved rapidly, transitioning from basic rule-based systems to advanced conversational agents. Early voice assistants were limited by static command sets and rigid responses. With the rise of large language models (LLMs), voice assistants have become far more intelligent, adaptive, and context-aware.

LLM voice assistants are AI agents that leverage large language models as their core, enabling natural, dynamic conversations. They process spoken language, extract meaning, and respond with human-like fluency. These systems integrate speech-to-text (STT), LLMs, and text-to-speech (TTS) for seamless interaction.

The growing adoption of LLM voice assistants is driven by advances in speech language models, real-time voice processing, better accessibility, and the promise of truly conversational AI. In 2025, they are changing how we interact with computers, devices, and software.

How LLM Voice Assistants Work

Key Components and Architecture

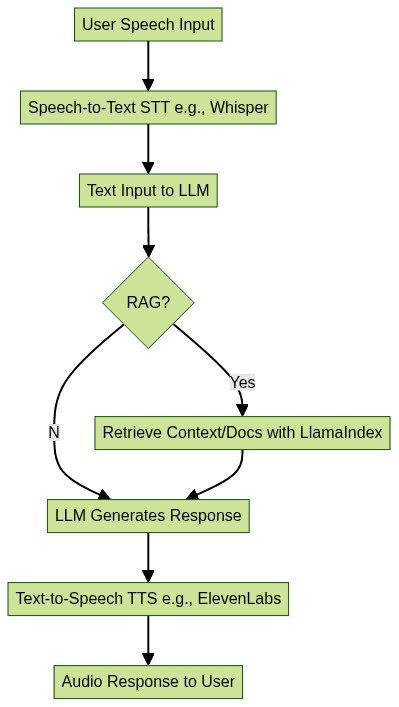

LLM voice assistants rely on several interconnected components:

- Speech-to-Text (STT): Converts spoken language into text. Modern STT models like Whisper offer robust multilingual support and high accuracy.

- Large Language Model (LLM): Acts as the conversational brain—understanding context, generating responses, and managing dialogue flow.

- Retrieval-Augmented Generation (RAG): Enhances LLMs by allowing retrieval of relevant documents or facts, increasing accuracy and specificity.

- Text-to-Speech (TTS): Synthesizes natural, expressive voice responses. Solutions like ElevenLabs and Bark provide high-fidelity, emotive speech output.

Developers can streamline the integration of these components using a Voice SDK, which simplifies the process of building real-time conversational interfaces and ensures reliable audio handling.

Architecture Flowchart
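
The flow between the components can be sketched in a few lines of Python. This is a minimal illustration, not a reference design: the `VoicePipeline` class, its field names, and the stub stages are all hypothetical, and each stage is a plain callable so that a real STT, LLM, RAG, or TTS backend could be swapped in.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class VoicePipeline:
    """Chains the stages; each stage is any callable with the right shape."""
    transcribe: Callable[[bytes], str]   # STT: audio -> text
    generate: Callable[[str], str]       # LLM: prompt -> reply text
    synthesize: Callable[[str], bytes]   # TTS: text -> audio
    retrieve: Optional[Callable[[str], str]] = None  # RAG: query -> context

    def handle_turn(self, audio_in: bytes) -> bytes:
        user_text = self.transcribe(audio_in)
        prompt = user_text
        if self.retrieve is not None:
            # Prepend retrieved context so the LLM can ground its answer.
            context = self.retrieve(user_text)
            prompt = f"Context: {context}\n\nUser: {user_text}"
        reply = self.generate(prompt)
        return self.synthesize(reply)


# Stub stages (assumptions) so the sketch runs without any models installed.
pipeline = VoicePipeline(
    transcribe=lambda audio: audio.decode("utf-8"),
    generate=lambda prompt: f"You said: {prompt}",
    synthesize=lambda text: text.encode("utf-8"),
)
print(pipeline.handle_turn(b"turn on the lights"))
```

Keeping the stages behind simple callables means the orchestration logic stays the same whether a stage runs locally or calls a hosted API.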

Typical Tech Stack

A robust LLM voice assistant leverages a modern stack:

- Whisper (STT): Open-source, accurate, multilingual.

- LlamaIndex (RAG): Retrieval-augmented generation for enhanced factuality.

- ElevenLabs or Bark (TTS): Realistic, expressive speech synthesis.

- React.js: For building interactive voice-enabled UIs.

- Flask: Lightweight backend API for orchestrating the pipeline.

For developers working with Python, a Python video and audio calling SDK can be integrated to enable seamless audio and video communication features within custom voice assistant applications.

Code Snippet: Simple LLM-Powered Voice Assistant Pipeline

```python
import numpy as np
import sounddevice as sd
import whisper
from transformers import pipeline

# Load models
stt_model = whisper.load_model("base")
# gpt2 is a small stand-in; use any text-generation model ID from the Hugging Face Hub
language_model = pipeline("text-generation", model="gpt2")
# You would also load TTS and RAG modules here

def record_audio(duration=5, fs=16000):
    """Record mono float32 audio at 16 kHz, the format Whisper expects."""
    print("Speak now...")
    audio = sd.rec(int(duration * fs), samplerate=fs, channels=1, dtype="float32")
    sd.wait()  # Block until the recording is finished
    return np.squeeze(audio)

def voice_assistant():
    audio = record_audio()
    stt_result = stt_model.transcribe(audio)
    user_text = stt_result["text"]
    response = language_model(user_text, max_new_tokens=64)[0]["generated_text"]
    # Pass response to TTS and play audio here
    print("Assistant:", response)

voice_assistant()
```

Unique Features and Capabilities of LLM Voice Assistants

Natural, Context-Aware Conversation

Unlike early voice bots, LLM voice assistants excel at natural conversation. Leveraging deep context retention, they remember previous dialogue turns, user preferences, and ongoing topics. This enables:

- Multi-turn conversation (not just single commands)

- Memory of context across sessions

- Personalized, adaptive responses
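
One common way to carry context across turns is to resend recent dialogue with each request and drop the oldest turns once a budget is exceeded. The sketch below assumes a hypothetical `ConversationMemory` class and uses a word count as a rough stand-in for a real token count; persisting memory across sessions would need storage that is not shown here.

```python
from collections import deque


class ConversationMemory:
    """Keeps the most recent turns that fit within a rough word budget."""

    def __init__(self, max_words: int = 200):
        self.max_words = max_words
        self.turns = deque()  # (role, text) pairs, oldest first

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))
        # Drop the oldest turns until the history fits the budget again,
        # always keeping at least the newest turn.
        while self._word_count() > self.max_words and len(self.turns) > 1:
            self.turns.popleft()

    def _word_count(self) -> int:
        return sum(len(text.split()) for _, text in self.turns)

    def as_prompt(self) -> str:
        return "\n".join(f"{role}: {text}" for role, text in self.turns)


memory = ConversationMemory(max_words=12)
memory.add("user", "What's the weather in Paris today?")
memory.add("assistant", "Sunny with a high of 22 degrees.")
memory.add("user", "And tomorrow?")
print(memory.as_prompt())
```

With the deliberately small budget used here, the first user turn is evicted, which shows why assistants with short context windows can lose track of earlier topics.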

LLM-powered voice AI can integrate with context-aware frameworks such as LlamaIndex to retrieve relevant information dynamically, enabling smarter, on-topic discussions. For real-time group conversations or interactive audio rooms, leveraging a Voice SDK can provide scalable infrastructure and advanced moderation features.

Emotional Intelligence and Voice Presence

Modern LLM voice assistants are equipped with emotional intelligence, adapting their tone and inflection based on user input. Techniques inspired by projects like Sesame and Spirit LM allow these assistants to:

- Detect emotional cues in voice and text

- Modulate speech output for empathy, excitement, or calmness

- Create a sense of "voice presence"—making the interaction feel more human

Voice presence and emotional nuance are key differentiators in 2025, with advanced TTS models supporting expressive and contextually aware speech. Integrating a Voice SDK can further enhance these capabilities by providing low-latency audio processing and real-time voice effects.

Multilingual and Accessibility Features

LLM voice assistants democratize technology access:

- Support dozens of languages (multilingual Whisper, TTS models)

- Enable accessibility for users with disabilities (voice navigation, screen reading)

- Provide inclusive UX through projects like LlamaIndex, which can index multilingual corpora and enable universal search

Accessibility in voice AI is a major focus, ensuring that voice assistants are usable by everyone regardless of native language or physical ability. For developers aiming to reach users on Android devices, exploring WebRTC Android solutions can help deliver seamless, real-time voice and video communication experiences.

Choosing the Right LLM for Voice Assistants

Factors to Consider

When selecting an LLM for your voice assistant, weigh the following:

- Latency (TTFT): Time-to-first-token (TTFT) and real-time response are critical for natural dialogue

- Throughput: How many concurrent users or queries the system can handle

- Cost per Token: Affects scalability and operational costs

- Accuracy (MMLU Benchmark): High accuracy on the MMLU benchmark ensures reliable understanding and generation

- Privacy: Local vs cloud inference, user data retention

- Open-source vs Proprietary: Balance between flexibility, cost, and feature set
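
To make the latency and cost factors concrete, the arithmetic can be sketched as follows. The function names and every number here are hypothetical placeholders rather than measurements or real prices; the point is only how TTFT, throughput, and per-token pricing combine.

```python
def response_latency_ms(ttft_ms: float, tokens: int, tokens_per_s: float) -> float:
    """Time until the full text reply is generated: TTFT plus decode time."""
    return ttft_ms + (tokens / tokens_per_s) * 1000.0


def monthly_cost(queries_per_day: int, tokens_per_query: int,
                 price_per_1k_tokens: float, days: int = 30) -> float:
    """Rough token spend; the price is a placeholder, not a real quote."""
    total_tokens = queries_per_day * tokens_per_query * days
    return total_tokens / 1000.0 * price_per_1k_tokens


# Hypothetical inputs: 350 ms TTFT, 75 tokens/s, and a 60-token reply.
latency = response_latency_ms(ttft_ms=350, tokens=60, tokens_per_s=75)
cost = monthly_cost(queries_per_day=10_000, tokens_per_query=300,
                    price_per_1k_tokens=0.002)
print(f"Full reply after ~{latency:.0f} ms, ~${cost:.2f} per month")
```

Because speech can start as soon as the first tokens arrive, TTFT tends to matter more for perceived responsiveness than the total generation time, while throughput determines whether the text keeps ahead of the synthesized audio.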

For applications requiring direct phone connectivity, integrating a phone call API enables your voice assistant to initiate and manage calls programmatically, expanding its utility in customer support and enterprise scenarios.

LLM Comparison Table

| Model | TTFT (ms) | Throughput (tokens/s) | MMLU (%) | Open-Source | Privacy Options | Cost per Token |
|---|---|---|---|---|---|---|
| GPT-4 | 600 | 50 | 86 | No | Cloud Only | High |
| Llama 3 | 350 | 75 | 81 | Yes | Local/Cloud | Medium |
| Gemini Pro | 400 | 65 | 83 | No | Cloud Only | Medium |
| Mistral Large | 300 | 80 | 79 | Yes | Local/Cloud | Low |

Note: Values are illustrative; consult latest benchmarks for up-to-date figures in 2025.
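
One way to turn a table like this into a decision is a weighted score over normalized columns. The sketch below copies the illustrative figures from the table; the `normalize` and `rank` helpers and the weights are assumptions chosen for demonstration, and the ranking changes as soon as the weights or the underlying numbers do.

```python
# Illustrative figures copied from the comparison table above.
MODELS = {
    "GPT-4":         {"ttft_ms": 600, "tokens_per_s": 50, "mmlu": 86},
    "Llama 3":       {"ttft_ms": 350, "tokens_per_s": 75, "mmlu": 81},
    "Gemini Pro":    {"ttft_ms": 400, "tokens_per_s": 65, "mmlu": 83},
    "Mistral Large": {"ttft_ms": 300, "tokens_per_s": 80, "mmlu": 79},
}


def normalize(values, higher_is_better=True):
    """Scale values to [0, 1] so that 1 is always the best score."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0] * len(values)
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1 - s for s in scaled]


def rank(models, weights):
    """Return (name, score) pairs sorted from best to worst."""
    names = list(models)
    ttft = normalize([models[n]["ttft_ms"] for n in names], higher_is_better=False)
    tput = normalize([models[n]["tokens_per_s"] for n in names])
    mmlu = normalize([models[n]["mmlu"] for n in names])
    scores = {
        n: (weights["latency"] * ttft[i]
            + weights["throughput"] * tput[i]
            + weights["accuracy"] * mmlu[i])
        for i, n in enumerate(names)
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical weighting for a latency-sensitive voice product.
for name, score in rank(MODELS, {"latency": 0.6, "throughput": 0.2, "accuracy": 0.2}):
    print(f"{name}: {score:.2f}")
```

A latency-heavy weighting favors the faster models in this illustrative data, while shifting most of the weight to accuracy moves GPT-4 to the top.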

Building Your Own LLM Voice Assistant: Step-by-Step

Setting Up the Environment

To build a modern LLM voice assistant, start by preparing your environment:

- Hardware: Recent GPU or Apple Silicon recommended for local inference

- Software: Python 3.10+, Node.js for UI, Docker for containerization

- Model Weights: Download the latest Llama 3, Whisper, and Bark weights; ElevenLabs is a hosted API, so it needs an API key rather than local weights

For developers building web-based interfaces, a JavaScript video and audio calling SDK can be integrated to enable real-time communication features directly in the browser.

Environment Setup Code Snippet

```bash
# Clone repositories
git clone https://github.com/openai/whisper.git
git clone https://github.com/facebookresearch/llama
git clone https://github.com/suno-ai/bark.git

# Create virtual env and install dependencies
python3 -m venv venv
source venv/bin/activate
pip install -r whisper/requirements.txt
# Install the cloned Whisper and Bark packages so they can be imported
pip install ./whisper ./bark
pip install transformers flask llama-index sounddevice numpy
```

Integrating STT, LLM, and TTS

The core pipeline connects STT, LLM, and TTS modules:

- Capture user audio

- Transcribe with Whisper

- Query LLM with text (optionally use LlamaIndex for RAG)

- Synthesize response with Bark or ElevenLabs
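
The optional RAG step amounts to fetching relevant text and placing it in the prompt before the LLM is called. The sketch below uses a toy word-overlap retriever as a stand-in; it does not use the LlamaIndex API, and `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names for illustration only.

```python
import re

# Toy knowledge base (assumption); a real system would index far more text.
DOCUMENTS = [
    "The office is open Monday to Friday from 9am to 5pm.",
    "Support tickets are answered within one business day.",
    "The cafeteria serves lunch between noon and 2pm.",
]


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list, top_k: int = 1) -> list:
    """Rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    ranked = sorted(documents,
                    key=lambda d: len(query_words & tokenize(d)),
                    reverse=True)
    return ranked[:top_k]


def build_prompt(user_text: str, documents: list) -> str:
    """Place the retrieved context ahead of the transcribed question."""
    context = "\n".join(retrieve(user_text, documents))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {user_text}\nAnswer:"
    )


print(build_prompt("When is the office open?", DOCUMENTS))
```

In a production pipeline the word-overlap ranking would typically be replaced by embedding-based search, but the shape of the step stays the same: transcribed text in, augmented prompt out.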

To add robust video communication, consider integrating a Video Calling API, which allows your voice assistant to support both audio and video conferencing capabilities for a more interactive user experience.

Integration Pipeline Code Snippet

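```python
import numpy as np
import sounddevice as sd
import whisper
from bark import SAMPLE_RATE, generate_audio
from transformers import AutoModelForCausalLM, AutoTokenizer

# Load models (Llama 3 weights are gated; accept the license on Hugging Face first)
MODEL_ID = "meta-llama/Meta-Llama-3-8B"
stt_model = whisper.load_model("base")
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
language_model = AutoModelForCausalLM.from_pretrained(MODEL_ID)

def record_audio(duration=5, fs=16000):
    """Record mono float32 audio at 16 kHz, the format Whisper expects."""
    audio = sd.rec(int(duration * fs), samplerate=fs, channels=1, dtype="float32")
    sd.wait()
    return np.squeeze(audio)

def run_pipeline():
    # Record audio and transcribe it
    audio = record_audio()
    stt_result = stt_model.transcribe(audio)
    user_text = stt_result["text"]
    # LLM generation
    inputs = tokenizer(user_text, return_tensors="pt")
    outputs = language_model.generate(**inputs, max_new_tokens=128)
    # Decode only the newly generated tokens, not the echoed prompt
    new_tokens = outputs[0][inputs["input_ids"].shape[1]:]
    response_text = tokenizer.decode(new_tokens, skip_special_tokens=True)
    # TTS
    audio_response = generate_audio(response_text)
    sd.play(audio_response, samplerate=SAMPLE_RATE)
    sd.wait()

run_pipeline()
```

Deployment and Hosting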

- Local Deployment: Maximum privacy and lower latency, but requires capable hardware

- Cloud Deployment: Scalability and easier updates, but with privacy and cost trade-offs

- Edge Deployment: For IoT and mobile, balancing latency and privacy

For teams looking to quickly add video calling to their applications, using an embed video calling SDK can dramatically reduce development time by providing prebuilt UI components and seamless integration.

Security and Privacy Considerations

- Encrypt communication between UI and backend

- Store minimal user data

- Prefer open-source, on-device models for sensitive applications
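
Storing minimal user data can start with scrubbing transcripts before they reach any log. The sketch below is one possible approach under simple assumptions: the regular expressions are deliberately rough and will miss many formats, and `pseudonymize`, `redact`, and `log_record` are hypothetical helper names.

```python
import hashlib
import re

# Rough patterns (assumptions); real PII detection needs broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(user_id: str, salt: str) -> str:
    """Replace the user ID with a truncated salted SHA-256 digest."""
    return hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()[:16]


def redact(transcript: str) -> str:
    """Mask e-mail addresses and phone numbers in a transcript."""
    transcript = EMAIL.sub("[email]", transcript)
    return PHONE.sub("[phone]", transcript)


def log_record(user_id: str, transcript: str, salt: str) -> dict:
    """Build the only record that is allowed to be written to logs."""
    return {"user": pseudonymize(user_id, salt), "text": redact(transcript)}


record = log_record("alice", "Call me at +1 555 123 4567 or mail a@b.com",
                    salt="demo-salt")
print(record["text"])
```

Redacting at the point of logging keeps raw audio and transcripts out of long-term storage, which limits what can leak if the backend is compromised.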

Current Limitations and Future Directions

Despite rapid progress, LLM voice assistants face several challenges:

- Latency: Real-time interaction requires further TTFT and throughput optimization

- Emotional Nuance: Capturing subtle emotions in both recognition and synthesis is still limited

- Context Limits: Memory window and context length remain technical hurdles

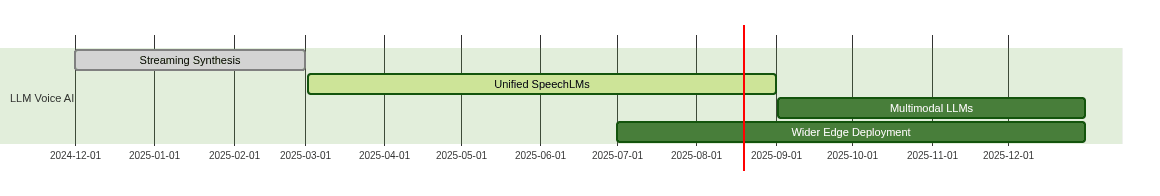

Emerging Innovations:

- Streaming Speech Synthesis: Reduces response time, enables more fluid conversations

- Speech Language Models (SpeechLMs): End-to-end models unifying STT, LLM, and TTS

- Multimodal LLMs: Integrate vision, audio, and text for richer interactions
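
Streaming synthesis depends on handing text to the TTS engine before the LLM has finished generating. A minimal sketch of that idea, assuming a hypothetical `sentences_from_tokens` helper and a simulated token stream, buffers tokens and releases each sentence as soon as its closing punctuation arrives.

```python
import re
from typing import Iterable, Iterator

SENTENCE_END = re.compile(r"[.!?]\s*$")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield a sentence as soon as its closing punctuation arrives."""
    buffer = ""
    for token in tokens:
        buffer += token
        if SENTENCE_END.search(buffer):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        yield buffer.strip()  # Flush any trailing partial sentence


# Simulated LLM token stream; a real one would come from a streaming API.
stream = ["Sure", ".", " The", " lights", " are", " on", ".",
          " Anything", " else", "?"]
for sentence in sentences_from_tokens(stream):
    print("TTS <-", sentence)
```

The punctuation check is intentionally naive and would split on abbreviations or decimals, so a real implementation would need a more careful boundary rule, but it shows how the first sentence can be spoken while later ones are still being generated.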

Developers interested in experimenting with these innovations can try it for free and explore the latest SDKs and APIs to accelerate their voice AI projects.

Future Voice AI Roadmap (2025+)

Conclusion

In 2025, LLM voice assistants stand at the frontier of conversational AI, merging advanced language understanding, emotional intelligence, and universal accessibility. By leveraging open-source tools, robust architectures, and innovative models, developers can build voice agents that are more natural, responsive, and inclusive than ever before. The journey ahead promises even richer, more human interactions with our technology.

FAQ