Introduction to LLM Voice AI

Voice AI technology has rapidly evolved in recent years, and large language models (LLMs) are at the forefront of this transformation. LLM Voice AI leverages advanced artificial intelligence to generate human-like speech, understand natural language, and enable real-time, highly adaptive voice interactions. This shift is redefining how businesses, developers, and creators approach voice applications, unlocking new potential for content creation, accessibility, and customer engagement.

In today's digital-first landscape, demand for lifelike, multilingual, and emotionally expressive voice AI is growing. Whether powering virtual assistants, producing AI voiceovers, or enabling interactive gaming experiences, LLM Voice AI offers a high degree of realism and flexibility. Businesses and creators benefit from scalable, cost-effective solutions that can be tailored to their brand and audience, making voice a programmable interface.

What is LLM Voice AI?

LLM Voice AI combines large language models with modern voice synthesis technology. At its core, an LLM (such as GPT-4 or a similar architecture) is trained on large volumes of text, which lets it model context, intent, and emotional tone. Pairing such a model with a text-to-speech (TTS) engine produces speech that is not only intelligible but also nuanced and expressive.

Traditional TTS systems relied on pre-recorded audio clips and rigid rule-based processing, resulting in robotic, monotone voices. In contrast, LLM-powered voice AI dynamically interprets input text, infers emotion, and adapts its delivery in real time. This enables applications ranging from AI voice generators for audiobooks to highly interactive customer service bots. Developers looking to build such experiences can leverage a

Voice SDK

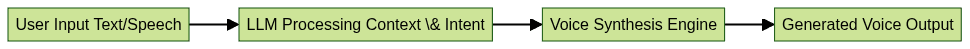

to integrate advanced voice features seamlessly into their applications.LLM Voice AI Workflow

The workflow starts with user input, which is analyzed by the LLM to extract meaning and emotion. The processed data is then fed into a voice synthesis engine, producing natural-sounding speech tailored to the context.
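The workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a real platform's pipeline: the keyword lookup in `analyze` is a toy stand-in for the LLM's analysis step, and the `PROSODY` table, its values, and all function names are assumptions made for the example. The output uses the standard SSML `prosody` element, which many TTS engines accept in some form.

```python
from dataclasses import dataclass
from xml.sax.saxutils import escape

# Hypothetical emotion-to-prosody table; the values are illustrative only.
PROSODY = {
    "excited": {"rate": "fast", "pitch": "+2st"},
    "apologetic": {"rate": "slow", "pitch": "-1st"},
    "neutral": {"rate": "medium", "pitch": "medium"},
}

# Toy keyword cues standing in for the LLM's analysis step.
CUES = {
    "excited": ("great", "congratulations", "welcome"),
    "apologetic": ("sorry", "apologize", "unfortunately"),
}


@dataclass
class Analysis:
    text: str
    emotion: str


def analyze(text: str) -> Analysis:
    """Stand-in for the LLM: pick the first emotion whose cue appears in the text."""
    lowered = text.lower()
    for emotion, words in CUES.items():
        if any(word in lowered for word in words):
            return Analysis(text, emotion)
    return Analysis(text, "neutral")


def to_ssml(analysis: Analysis) -> str:
    """Wrap the text in an SSML prosody element matching the detected emotion."""
    p = PROSODY[analysis.emotion]
    return (
        f'<speak><prosody rate="{p["rate"]}" pitch="{p["pitch"]}">'
        f"{escape(analysis.text)}</prosody></speak>"
    )


if __name__ == "__main__":
    print(to_ssml(analyze("Sorry, your order is delayed.")))
```

Keeping analysis and synthesis as separate steps means the toy `analyze` function could later be swapped for a real model call without touching the code that builds the synthesis input.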

Key Features of LLM Voice AI Platforms

Natural Language Processing and Emotion Recognition

Modern LLM Voice AI platforms excel at natural language processing (NLP), allowing them to understand complex sentence structures, idioms, and user intent. Beyond mere word recognition, these systems detect emotional cues, adjusting tone, pitch, and pacing to match the sentiment of the message. This advanced emotion recognition makes voice AI sound more empathetic and engaging, which is critical for applications like customer support, therapy bots, and virtual companions. For developers interested in adding these capabilities to their products, integrating a Voice SDK can provide robust tools for real-time voice analysis and synthesis.

Multi-language and Real-time Capabilities

Global reach demands multi-language support. LLM Voice AI platforms now offer real-time translation and synthesis across dozens of languages and dialects. Paired with ultra-low latency, this enables seamless cross-lingual conversations, live voice dubbing, and international accessibility for apps and services. Businesses that require voice communication features can also benefit from a phone call API, which streamlines the process of adding high-quality audio calling to their platforms.

Voice Cloning and Custom Voice Models

Voice cloning allows businesses and creators to generate custom voice models, either replicating a specific individual's voice or designing a unique brand persona. Using a small dataset of target voice samples, LLM Voice AI can produce highly accurate, scalable clones for narration, dialogue, or branded audio experiences. This is valuable for games, audiobooks, and personalized virtual assistants. Developers working with Python can explore the Python video and audio calling SDK to implement advanced voice and video features alongside custom voice models.

API and SDK Integration for Developers

Developers can harness LLM Voice AI through robust APIs and SDKs, making it easy to embed voice synthesis and recognition in web, mobile, and desktop applications. For those building with JavaScript, the JavaScript video and audio calling SDK offers a quick start to integrating both video and audio calling functionalities. Here's a sample Python integration using a hypothetical LLM Voice AI SDK:

    import llm_voice_sdk

    # Authenticate with your API key
    client = llm_voice_sdk.Client(api_key="YOUR_API_KEY")

    # Synthesize text to speech
    audio_stream = client.synthesize(
        text="Hello! Welcome to our AI-powered platform.",
        voice="en-US-female-1",
        emotion="friendly"
    )

    # Play or save the generated audio
    with open("output.wav", "wb") as out_file:
        out_file.write(audio_stream.read())

Top Use Cases for LLM Voice AI

Content Creation and Voiceovers

AI voice generators powered by LLMs streamline the production of audiobooks, podcasts, YouTube videos, and marketing campaigns. Creators can generate professional-quality voiceovers in multiple languages and tones, rapidly iterating scripts and styles without the need for costly studio time or voice talent. For those looking to broadcast content to a wider audience, a Live Streaming API SDK can be integrated to enable real-time interactive streaming experiences.

E-learning and Accessibility

LLM Voice AI enhances accessibility by delivering high-quality text-to-speech experiences for e-learning platforms, digital textbooks, and assistive technologies. Real-time voice synthesis supports visually impaired users and enables interactive, language-adaptive educational content. Integrating a Voice SDK can further enhance these platforms by providing seamless audio communication and collaboration features.

Gaming and Interactive Media

Game developers use AI voices to create dynamic NPC dialogue, real-time character responses, and immersive storytelling. Voice cloning allows for personalized player avatars, while emotion-aware LLMs enhance realism and engagement in interactive media. For multiplayer and social gaming experiences, utilizing a Video Calling API can add another layer of interaction, allowing players to communicate in real time.

Business Applications (Customer Service, Marketing)

Voice AI is revolutionizing customer service with intelligent voice bots capable of understanding intent and responding empathetically. In marketing, brands deploy custom voices for outbound calls, personalized messaging, and virtual brand ambassadors—boosting engagement and consistency across channels.

How to Implement LLM Voice AI

Choosing the Right Platform

Select a voice AI platform based on language support, emotion recognition, API/SDK availability, and licensing terms. Evaluate factors like scalability, pricing, developer resources, and the ability to create custom voice models. Leading providers include OpenAI, Google Cloud Text-to-Speech, and independent platforms specializing in voice cloning and real-time synthesis. If you're ready to experiment with these tools, you can try it for free and explore the possibilities firsthand.

Step-by-Step Integration Process

Here's a typical workflow for integrating an LLM Voice AI API into an application:

- Sign up and obtain API credentials from your chosen platform.

- Install the SDK or set up REST API access in your development environment.

- Authenticate requests using your API key or OAuth credentials.

- Send text input with desired voice and emotion parameters.

- Receive and process the audio output (stream or file).
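The steps above can be sketched in Python using only the standard library. Treat this as a rough outline under stated assumptions: the endpoint URL is the placeholder used elsewhere in this article, the `text`, `voice`, and `emotion` fields mirror the article's hypothetical request format, and the `VOICE_API_KEY` environment variable and both function names are invented for the example. A real platform's request shape and authentication scheme may differ.

```python
import json
import os
import urllib.request

# Hypothetical endpoint; this article's placeholder, not a confirmed service.
API_URL = "https://api.llmvoiceai.com/v1/synthesize"


def build_request(text: str, voice: str, emotion: str, api_key: str) -> urllib.request.Request:
    """Steps 3 and 4: attach credentials and encode the synthesis parameters."""
    payload = json.dumps({"text": text, "voice": voice, "emotion": emotion}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=payload,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


def synthesize_to_file(request: urllib.request.Request, path: str, timeout: float = 30.0) -> int:
    """Step 5: stream the audio response to disk and return the byte count."""
    written = 0
    with urllib.request.urlopen(request, timeout=timeout) as response, open(path, "wb") as out:
        while chunk := response.read(64 * 1024):
            out.write(chunk)
            written += len(chunk)
    return written


if __name__ == "__main__":
    key = os.environ.get("VOICE_API_KEY", "YOUR_API_KEY")
    req = build_request("Hello!", "en-US-female-1", "friendly", key)
    print(req.get_method(), req.full_url)
    # synthesize_to_file(req, "output.wav")  # needs a real endpoint and key
```

Reading the key from the environment keeps credentials out of source control, and writing the response in chunks avoids holding a long audio clip entirely in memory.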

Below is a sample integration using a RESTful API in JavaScript:

    // Requires node-fetch v2 (v3 is ESM-only); on Node.js 18+ the built-in fetch can be used instead.
    const fetch = require("node-fetch");

    const apiKey = "YOUR_API_KEY";
    const apiUrl = "https://api.llmvoiceai.com/v1/synthesize";

    fetch(apiUrl, {
      method: "POST",
      headers: {
        "Authorization": `Bearer ${apiKey}`,
        "Content-Type": "application/json"
      },
      body: JSON.stringify({
        text: "Welcome to the future of voice AI!",
        voice: "en-GB-male-2",
        emotion: "excited"
      })
    })
      .then(res => {
        // fetch rejects only on network errors, so check the HTTP status explicitly
        if (!res.ok) throw new Error(`Synthesis failed: HTTP ${res.status}`);
        return res.arrayBuffer();
      })
      .then(audioBuffer => {
        // Process or play the audio buffer
        console.log(`Audio received: ${audioBuffer.byteLength} bytes`);
      })
      .catch(err => console.error(err));

Tips for Optimal Results

- Fine-tune emotion and pacing parameters for natural delivery

- Regularly update training data for custom voices

- Test across devices and languages for consistent quality

- Monitor API latency for real-time applications

- Stay updated with SDK releases and platform documentation
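For the latency tip in particular, a small timing harness is often enough to get started. The sketch below is one possible approach, not a prescribed method: `fake_synthesize` is a hypothetical stand-in for a real synthesis request, and the choice of median and 95th percentile as summary metrics is an assumption about what matters for a real-time application.

```python
import statistics
import time
from typing import Callable, List


def measure_latency_ms(call: Callable[[], object], runs: int = 20) -> List[float]:
    """Time repeated invocations of `call` and return each duration in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples: List[float]) -> dict:
    """Report median and 95th-percentile latency (needs at least two samples)."""
    cuts = statistics.quantiles(samples, n=20)  # 19 cut points; the last is p95
    return {"p50_ms": statistics.median(samples), "p95_ms": cuts[-1]}


def fake_synthesize() -> None:
    """Hypothetical stand-in for a real synthesis request."""
    time.sleep(0.005)


if __name__ == "__main__":
    print(summarize(measure_latency_ms(fake_synthesize)))
```

Tracking a high percentile alongside the median helps surface occasional slow responses, which tend to be what users notice in a live conversation.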

Future Trends in LLM Voice AI

The landscape of LLM Voice AI is rapidly advancing. By 2025, expect:

- Greater realism and contextual awareness: AI will interpret context, sarcasm, and nuanced emotions for even more lifelike interactions.

- Scalable custom voice creation: Democratized tools will enable anyone to create bespoke voices, supporting inclusivity and brand identity.

- Ethical frameworks and regulations: As voice cloning and AI voice synthesis become mainstream, new governance will emerge to address consent, security, and misuse.

Developers and businesses should stay informed about evolving standards and best practices to harness the benefits of LLM Voice AI responsibly.

Conclusion

LLM Voice AI stands at the intersection of language understanding and voice synthesis, empowering developers, creators, and businesses to deliver richer, more accessible, and engaging user experiences. As platforms become more powerful and accessible in 2025, now is the time to explore LLM Voice AI solutions and unlock the next generation of voice-driven applications.
