Introduction to LLM for Virtual Assistants

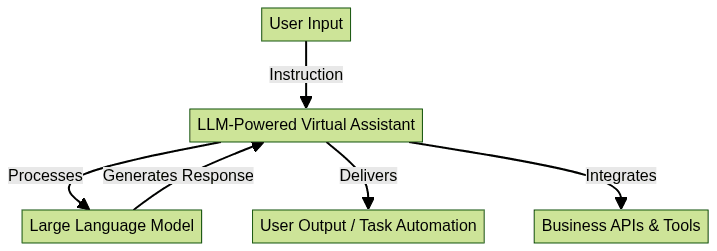

Large Language Models (LLMs) are transforming the landscape of digital productivity, particularly for virtual assistants. An LLM for virtual assistants refers to a sophisticated AI system trained on massive datasets to understand, generate, and interact in natural language. As organizations and individuals seek smarter ways to automate tasks, enhance productivity, and manage information workflows, LLM-powered virtual assistants have emerged as essential tools.

LLMs matter for virtual assistants because they can process complex instructions, perform multi-step reasoning, and personalize interactions. With advancements in generative AI, these assistants can now automate repetitive tasks, summarize documents, schedule meetings, and integrate seamlessly with business tools. The result is greater efficiency, less manual work, and a new standard for what AI productivity tools can achieve in 2025.

How LLMs Power Virtual Assistants

What is an LLM and How Does It Work?

A Large Language Model (LLM) is an AI system trained on vast amounts of text data. It uses deep learning architectures—primarily transformer neural networks—to understand context, predict text, and generate human-like responses. LLMs, such as GPT or Claude, rely on billions of parameters to capture nuances in language, enabling them to interpret and fulfill diverse user requests.
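To make "predict text" concrete, here is a toy illustration of the last step of next-token prediction: the model assigns a score (logit) to every candidate token, softmax turns those scores into probabilities, and greedy decoding picks the most likely one. The vocabulary and scores below are invented for illustration; real models score vocabularies of tens of thousands of tokens or more and often sample instead of always taking the top token.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical candidates and scores a model might produce after the
# prompt "Schedule a meeting for next" (values are made up).
vocab = ["Thursday", "banana", "week", "the"]
logits = [3.2, -1.0, 2.5, 0.3]

probs = softmax(logits)
for token, p in sorted(zip(vocab, probs), key=lambda t: -t[1]):
    print(f"{token:>10}: {p:.3f}")

# Greedy decoding: choose the most probable token as the next word.
next_token = vocab[probs.index(max(probs))]
print("Next token:", next_token)
```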

LLM Capabilities in Modern Virtual Assistants

Modern LLM-powered virtual assistants leverage these capabilities to execute a wide range of tasks: from drafting emails and summarizing reports to answering technical queries and automating workflows. By understanding context and intent, these assistants can handle multitasking, provide rapid responses, and adapt to evolving user needs.

For example, integrating a Video Calling API allows virtual assistants to schedule and initiate video meetings directly within business workflows, enhancing real-time collaboration.

Real-World Examples: Vizzy, ChatGPT, Claude, etc.

Industry leaders like Vizzy, ChatGPT, and Claude exemplify the power of LLMs for virtual assistants. Vizzy integrates with business tools to automate project management. ChatGPT serves as a conversational AI for coding, scheduling, and content creation. Claude excels in privacy-centric deployments. These solutions highlight how generative AI assistants are being used across industries, and many now support Voice SDK integration for seamless voice-based interactions.

Key Features of LLM-Powered Virtual Assistants

Task Automation & Multitasking

LLMs bring automation to a new level for virtual assistants. These AI assistants can handle complex workflows, from scheduling meetings across multiple calendars to generating status updates or compiling reports. By automating repetitive tasks, they free up time for higher-value activities and improve overall productivity. For teams looking to embed a video calling SDK into their assistant platforms, this feature can further streamline communication and task management.

Personalization and Custom Knowledge Base

Personalization is key in today’s digital environment. With LLM customization, virtual assistants can access a custom knowledge base, learn from previous interactions, and tailor responses to individual preferences. This enables a more human-like and relevant experience, whether for an executive assistant AI or a business AI assistant.
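One common way to personalize responses, sketched here under simplifying assumptions, is to store per-user preferences and fold them into the system prompt on every request. `UserProfile` and `build_system_prompt` are hypothetical names, not part of any library; a production assistant would load profiles from a database and might select remembered facts by relevance rather than recency.

```python
from dataclasses import dataclass, field

@dataclass
class UserProfile:
    """Hypothetical per-user preferences an assistant might store."""
    name: str
    timezone: str
    tone: str = "concise"
    facts: list[str] = field(default_factory=list)  # remembered from past chats

def build_system_prompt(profile: UserProfile, max_facts: int = 5) -> str:
    """Fold stored preferences into the system prompt sent with each request."""
    lines = [
        "You are a helpful executive assistant.",
        f"The user's name is {profile.name}; their time zone is {profile.timezone}.",
        f"Answer in a {profile.tone} style.",
    ]
    recent = profile.facts[-max_facts:]  # keep only the most recent facts
    if recent:
        lines.append("Known facts about the user:")
        lines.extend(f"- {fact}" for fact in recent)
    return "\n".join(lines)

profile = UserProfile(
    name="Priya",
    timezone="Europe/Berlin",
    facts=["Prefers meetings after 10:00", "Reports to the CFO"],
)
print(build_system_prompt(profile))
```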

Integration with Files, Apps, and APIs

Integration capabilities are at the core of LLM-powered virtual assistants. Through API integration, these assistants connect with calendars, spreadsheets, CRMs, and even image editing AI tools. This interoperability lets users automate tasks with LLMs across multiple platforms and data sources, driving efficiency and reducing manual errors. Developers can leverage solutions like a Python video and audio calling SDK, a JavaScript video and audio calling SDK, or a React Native video and audio calling SDK to extend assistant capabilities across different tech stacks.

Rapid, Contextual Responses

With advanced prompt engineering and contextual awareness, LLM assistants deliver fast, accurate, and contextually rich responses to queries—be it scheduling, data extraction, or knowledge retrieval.

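```python
import requests

# Illustrative endpoint and payload schema; substitute your provider's real API.
api_url = "https://api.llm-assistant.com/v1/query"
payload = {
    "prompt": "Summarize this week's project updates.",
    "context": "team_reports",  # identifier for a stored data source
}
headers = {"Authorization": "Bearer YOUR_API_KEY"}

response = requests.post(api_url, json=payload, headers=headers, timeout=30)
response.raise_for_status()  # raise on HTTP errors instead of printing an error body
print(response.json())
```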
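Contextual answers depend on what the model actually sees, and every model has a finite context window. A minimal sketch, assuming the common role/content chat-message format, of keeping the system prompt plus as many recent turns as fit a budget. `trim_history` is a hypothetical helper, and counting words is only a rough stand-in for counting tokens with the model's own tokenizer.

```python
def trim_history(messages, max_tokens=50):
    """Keep the system message plus the newest turns that fit a rough budget.

    Cost is approximated by whitespace-separated words, not real tokens.
    """
    def cost(msg):
        return len(msg["content"].split())

    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]

    budget = max_tokens - sum(cost(m) for m in system)
    kept = []
    for msg in reversed(turns):  # walk from newest to oldest
        if cost(msg) > budget:
            break
        kept.append(msg)
        budget -= cost(msg)
    return system + list(reversed(kept))  # restore chronological order

history = [
    {"role": "system", "content": "You are an AI executive assistant."},
    {"role": "user", "content": "What did the design team ship last week?"},
    {"role": "assistant", "content": "They shipped the new onboarding flow."},
    {"role": "user", "content": "Summarize this week's project updates."},
]
print(trim_history(history, max_tokens=20))
```

Dropping the oldest turns first is the simplest policy; summarizing older turns instead of discarding them is a common alternative when earlier context still matters.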

Popular LLMs for Virtual Assistants: A Comparative Overview

OpenAI GPT, Anthropic Claude, Google Gemini, Meta Llama, Mistral

Several top-tier LLMs compete in the virtual assistant market, each offering unique strengths:

- OpenAI GPT (e.g., GPT-4, GPT-4o): Renowned for its versatility, coding capabilities, and conversational fluency. Widely used in commercial AI assistants.

- Anthropic Claude: Prioritizes safety, privacy, and ethical AI. Favored in industries with sensitive data needs.

- Google Gemini: Integrates deeply with Google Workspace and offers advanced search and summarization features.

- Meta Llama: Open-source LLM with strong multilingual support and a highly customizable framework, popular among developers.

- Mistral: Lightweight, efficient, and suitable for on-premises AI deployments where speed and resource usage are critical.

For applications requiring large-scale broadcasts or interactive sessions, integrating a Live Streaming API SDK can further enhance the reach and engagement of LLM-powered virtual assistants.

Feature Comparison Table

| LLM | Strengths | Integration | Privacy | Customization |
|---|---|---|---|---|
| OpenAI GPT | Coding, Conversational | Wide | Moderate | High |
| Claude | Ethics, Privacy | Moderate | High | Medium |
| Gemini | Google Ecosystem | Deep | Moderate | Medium |
| Llama | Open-source, Multilingual | Flexible | High | High |
| Mistral | Lightweight, Fast | Moderate | High | Medium |
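The qualitative ratings in the comparison table can be turned into a rough, weighted shortlist. This is a sketch under a loud assumption: the numeric scale mapping "Moderate"/"Medium" to 2 and the stronger labels to 3 is invented here, and the weights stand for your own priorities. Treat the result as a starting point for evaluation, not a verdict.

```python
# Ratings transcribed from the comparison table.
TABLE = {
    "OpenAI GPT": {"integration": "Wide", "privacy": "Moderate", "customization": "High"},
    "Claude": {"integration": "Moderate", "privacy": "High", "customization": "Medium"},
    "Gemini": {"integration": "Deep", "privacy": "Moderate", "customization": "Medium"},
    "Llama": {"integration": "Flexible", "privacy": "High", "customization": "High"},
    "Mistral": {"integration": "Moderate", "privacy": "High", "customization": "Medium"},
}

# Assumed numeric scale for the table's qualitative labels.
SCALE = {"Moderate": 2, "Medium": 2, "High": 3, "Wide": 3, "Deep": 3, "Flexible": 3}

def rank(weights):
    """Return (model, score) pairs sorted by weighted score, highest first."""
    scores = {
        model: sum(weights[k] * SCALE[v] for k, v in ratings.items())
        for model, ratings in TABLE.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Example: a team that weights privacy three times as heavily as the rest.
for model, score in rank({"integration": 1, "privacy": 3, "customization": 1}):
    print(f"{model:<11} {score}")
```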

Sample Prompt for Different LLMs

```python
# Example prompts tailored to each model's strengths.
prompts = {
    "OpenAI GPT": "Draft a summary of today's financial report.",
    "Claude": "Summarize the attached document using privacy-sensitive phrasing.",
    "Gemini": "Provide highlights from the Q2 report in Google Sheets.",
}

for llm, prompt in prompts.items():
    print(f"{llm}: {prompt}")
```
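Beyond wording, each provider expects a differently shaped request. The sketch below builds the same request for two providers without sending anything, following the public OpenAI Chat Completions format (system prompt as a message) and the Anthropic Messages format (system prompt as a top-level field, with `max_tokens` required). Check each provider's current API reference before relying on these field names; `CLAUDE_MODEL_ID` is a placeholder, not a real model ID.

```python
import json

SYSTEM = "You are an AI executive assistant."

def openai_chat_payload(model, prompt):
    """Request body for OpenAI Chat Completions: the system prompt is a message."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": prompt},
        ],
    }

def anthropic_messages_payload(model, prompt, max_tokens=512):
    """Request body for Anthropic Messages: the system prompt is a top-level
    field and max_tokens is required."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": SYSTEM,
        "messages": [{"role": "user", "content": prompt}],
    }

prompt = "Draft a summary of today's financial report."
print(json.dumps(openai_chat_payload("gpt-4o", prompt), indent=2))
# Substitute a current Claude model ID for the placeholder below.
print(json.dumps(anthropic_messages_payload("CLAUDE_MODEL_ID", prompt), indent=2))
```

Keeping a thin adapter like this in the assistant layer makes it easier to switch or compare models later.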

Choosing the Right LLM for Your Needs

Selecting the best LLM for a virtual assistant depends on your integration needs, privacy requirements, and desired level of customization. For mobile and cross-platform solutions, consider using a Flutter video and audio calling API to add seamless communication features.

Implementing an LLM for Your Virtual Assistant

Steps to Integrate LLM into a Virtual Assistant

- Select Your LLM: Choose a model (e.g., GPT, Claude) based on your goals and constraints.

- Set Up API Access: Register for API keys and review documentation.

- Build the Assistant Layer: Develop middleware that manages prompts, captures user context, and formats LLM responses.

- Integrate with Business Tools: Use API integration to connect to calendars, CRMs, and custom knowledge bases.

- Test and Iterate: Continuously refine prompts, responses, and user flows for optimal performance.

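```python
# Requires openai>=1.0; the older openai.ChatCompletion interface was removed.
from openai import OpenAI

# The client reads the OPENAI_API_KEY environment variable by default.
client = OpenAI()

response = client.chat.completions.create(
    model="gpt-4",
    messages=[
        {"role": "system", "content": "You are an AI executive assistant."},
        {"role": "user", "content": "Schedule a meeting with the finance team for next Thursday at 2pm."},
    ],
)

# The model returns text only; actually booking the meeting requires
# calling a calendar API from your assistant layer.
print(response.choices[0].message.content)
```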
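The model call above only returns text; nothing is scheduled until the assistant layer (the "Build the Assistant Layer" step) acts on it. A minimal sketch of that layer, assuming you instruct the model to reply with a JSON object naming a tool and its arguments. `schedule_meeting`, `TOOLS`, and the JSON shape are hypothetical; OpenAI and other providers also offer native tool-calling features that return structured calls for you.

```python
import json
from datetime import datetime

# Registry of functions the assistant layer is allowed to call (hypothetical tools).
TOOLS = {}

def tool(fn):
    """Decorator that registers a function under its own name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def schedule_meeting(team: str, start: str, duration_minutes: int = 30) -> str:
    # A real implementation would call a calendar API here.
    when = datetime.fromisoformat(start)
    return f"Booked {duration_minutes} min with {team} at {when:%Y-%m-%d %H:%M}."

def dispatch(model_reply: str) -> str:
    """Parse a JSON tool call produced by the LLM and run the matching function."""
    try:
        call = json.loads(model_reply)
    except json.JSONDecodeError:
        call = None
    if not isinstance(call, dict):
        return model_reply  # plain-text answer: pass it through unchanged
    name = call.get("tool")
    if name not in TOOLS:
        return f"Unknown tool: {name}"
    return TOOLS[name](**call.get("arguments", {}))

# Stand-in for what the model might return when asked to answer in JSON.
reply = '{"tool": "schedule_meeting", "arguments": {"team": "finance", "start": "2025-06-12T14:00"}}'
print(dispatch(reply))
```

Restricting calls to an explicit registry keeps the model from triggering arbitrary code, which matters once tools can send email or modify records.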

Customizing the Assistant with Your Own Data

LLM customization lets you enhance the assistant with proprietary data. By building a custom knowledge base, you can feed relevant documents, FAQs, or business logic into the LLM so that responses align with your unique workflows and terminology.
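One lightweight way to feed documents into the LLM is retrieval: rank your own documents by similarity to the user's question and paste the best matches into the prompt, so the model answers from your data rather than from memory alone. In this toy sketch, word-count vectors stand in for a real embedding model, a Python dict stands in for a vector database, and the documents are invented.

```python
import math
import re
from collections import Counter

# A tiny, made-up knowledge base of internal documents.
DOCS = {
    "expenses": "Expense reports are due on the 5th of each month via the finance portal.",
    "travel": "Book all business travel through the approved agency at least 14 days ahead.",
    "pto": "Paid time off requests need manager approval two weeks in advance.",
}

def vectorize(text):
    """Bag-of-words term counts; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, k=1):
    """Return the ids of the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(DOCS, key=lambda d: cosine(q, vectorize(DOCS[d])), reverse=True)
    return ranked[:k]

def build_prompt(query, k=1):
    """Ground the model by pasting the retrieved passages into the prompt."""
    context = "\n".join(DOCS[d] for d in retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

print(build_prompt("When are expense reports due?"))
```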

Such a knowledge base can be built with vector databases, embedding APIs, or fine-tuning pipelines, all of which can extend the assistant’s capabilities while maintaining security. For Android developers, exploring WebRTC Android solutions can help implement real-time communication features within virtual assistant apps.

Privacy, Security, and Control

Ensure your LLM-powered virtual assistant complies with data privacy standards. Use encryption, on-premises deployment, and robust access controls to safeguard sensitive information and maintain user trust.
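One concrete control, sketched under simplifying assumptions, is stripping obvious personal data from user text before it is sent to a third-party LLM API. The regular expressions are illustrative only: they miss names and addresses and will also match some non-phone numbers such as dates, which is why production systems typically rely on dedicated PII-detection tooling.

```python
import re

# Simple patterns for two common kinds of personal data (illustrative, not exhaustive).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

def redact(text):
    """Replace matches with placeholders before the text leaves your infrastructure."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

msg = "Email jane.doe@example.com or call +1 (555) 010-2030 about the Q2 invoice."
print(redact(msg))
```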

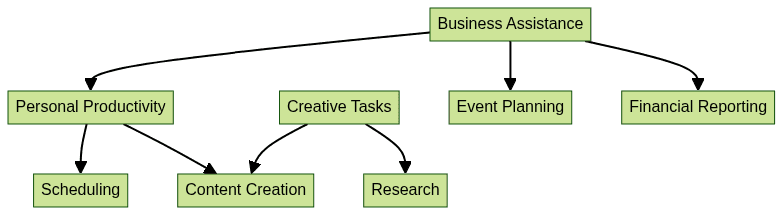

Use Cases: How LLMs Transform Virtual Assistance

Business Assistance (Event Planning, Financial Reports, Image Editing)

LLMs streamline business operations for virtual assistants by automating calendar management, generating financial summaries, and even supporting image editing AI workflows. Assistants can consolidate data from various sources, plan events, and deliver actionable insights, making them indispensable for executive and business teams.

Personal Productivity (Scheduling, Reminders, Travel Planning)

Generative AI assistants optimize personal productivity by handling scheduling conflicts, setting contextual reminders, and planning travel itineraries. With LLM use cases expanding, users can delegate routine tasks, ensuring they stay organized and focused on core priorities.
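Resolving scheduling conflicts comes down to a small, deterministic algorithm that is often better exposed to the assistant as a tool than left to the LLM's judgment. A sketch, assuming each attendee's busy times have already been fetched from their calendar as (start, end) pairs: merge everyone's events in start order and return the first gap long enough for the meeting. `first_free_slot` and the sample calendars are hypothetical.

```python
from datetime import datetime, timedelta

def first_free_slot(calendars, day_start, day_end, duration):
    """Return the earliest (start, end) gap of `duration` free on every calendar, or None.

    `calendars` is a list of busy-interval lists, one per attendee.
    """
    busy = sorted(iv for cal in calendars for iv in cal)  # merge everyone's events
    cursor = day_start
    for start, end in busy:
        if min(start, day_end) - cursor >= duration:  # gap before this event fits
            return cursor, cursor + duration
        cursor = max(cursor, end)  # jump past this (possibly overlapping) event
    if day_end - cursor >= duration:
        return cursor, cursor + duration
    return None

def t(hhmm):
    """Helper: a time of day on an arbitrary example date."""
    h, m = map(int, hhmm.split(":"))
    return datetime(2025, 6, 12, h, m)

alice = [(t("09:00"), t("10:30")), (t("13:00"), t("14:00"))]
bob = [(t("10:00"), t("11:00")), (t("11:30"), t("12:30"))]

slot = first_free_slot([alice, bob], t("09:00"), t("17:00"), timedelta(minutes=45))
print(slot[0].strftime("%H:%M"), "-", slot[1].strftime("%H:%M"))
```

Sorting once and sweeping a cursor keeps this linear after the sort, and the same function can back reminders or travel planning wherever free time must be found.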

Creative Tasks (Content Creation, Brainstorming, Research)

LLM-powered virtual assistants are also invaluable for creative professionals. They can draft blog posts, suggest new campaign ideas, and conduct rapid research. By automating ideation and content workflows, these assistants unlock new levels of creative output.

Challenges and Future Trends for LLM in Virtual Assistants

While LLMs for virtual assistants have made significant strides, challenges remain. Current limitations include occasional hallucinations, gaps in domain-specific knowledge, and the need for ongoing prompt engineering. Ethical considerations, such as bias, transparency, and user privacy, are also at the forefront as adoption grows.

Looking ahead to 2025, expect advancements in LLM customization, multimodal capabilities (combining text, image, and voice), and improved privacy controls. The future of AI-driven virtual assistance promises greater autonomy, reliability, and integration with complex digital ecosystems.

Conclusion: The Value of LLM for Virtual Assistants

LLMs are redefining digital productivity for virtual assistants, enabling smarter automation, personalization, and rapid task execution. By embracing LLM-powered solutions, organizations and individuals can unlock new levels of efficiency. Now is the time to experiment, integrate, and harness the power of large language models for your virtual assistant needs in 2025.


FAQ