Introduction to AI Voice Agents in Word Error Rate (WER)

What is an AI Voice Agent?

AI Voice Agents are software systems that interact with users through spoken conversation. They combine speech recognition, natural language processing, and text-to-speech to understand and respond to user queries. They are increasingly used across industries to automate voice interactions.

Why are they important for Word Error Rate (WER)?

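In speech recognition, Word Error Rate (WER) is a key accuracy metric. It compares a recognized transcript against a reference transcript: WER = (S + D + I) / N, where S, D, and I are the numbers of substituted, deleted, and inserted words and N is the number of words in the reference. A lower WER means a more accurate transcript, and WER can exceed 1 when the recognizer inserts many extra words. AI Voice Agents can provide real-time insights and analysis of WER, helping developers and businesses optimize their speech recognition systems.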
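To make the metric concrete, here is a minimal sketch of a WER calculation using a word-level edit distance. The function name `word_error_rate` and the normalization (lowercasing and splitting on whitespace) are assumptions for illustration; production evaluations usually apply more careful text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return WER = (substitutions + deletions + insertions) / reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over 6 reference words.
print(round(word_error_rate("the cat sat on the mat", "the cat sit on mat"), 3))  # 0.333
```

Because the denominator is the reference length, a hypothesis with many inserted words can score above 1.0.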

Core Components of a Voice Agent

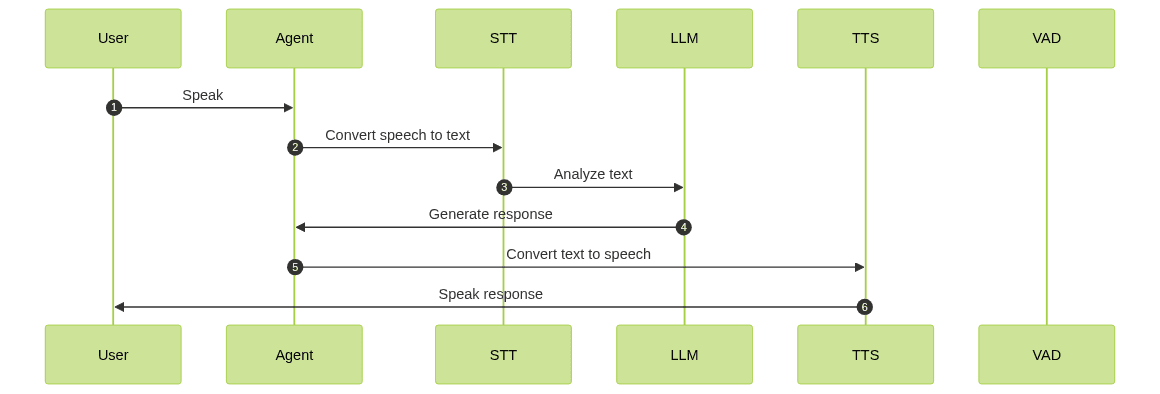

A typical AI Voice Agent consists of components like Speech-to-Text (STT) engines, Language Models (LLM), Text-to-Speech (TTS) systems, and Voice Activity Detection (VAD). These components work together to process audio input, understand user intent, and generate appropriate responses.

What You'll Build in This Tutorial

In this tutorial, you will learn how to build an AI Voice Agent using the VideoSDK framework. This agent will specialize in providing information and insights about Word Error Rate (WER).

Architecture and Core Concepts

High-Level Architecture Overview

The architecture of an AI Voice Agent involves several key components working in unison. The agent listens to user input, passes it through a pipeline of processing stages (speech recognition, language model, speech synthesis), and responds with generated speech.
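The flow can be pictured as three functions chained together. The sketch below is purely conceptual: `run_turn` and the `fake_*` stubs are hypothetical names, not part of the VideoSDK framework, and each stub stands in for a real STT, LLM, or TTS service.

```python
from typing import Callable


def run_turn(
    audio: bytes,
    stt: Callable[[bytes], str],
    llm: Callable[[str], str],
    tts: Callable[[str], bytes],
) -> bytes:
    """Process one user turn: audio in, synthesized reply audio out."""
    transcript = stt(audio)  # speech-to-text
    reply = llm(transcript)  # generate a text response
    return tts(reply)        # text-to-speech


# Stand-in stages so the sketch runs without any external service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(text: str) -> str:
    return f"You asked: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


print(run_turn(b"what is WER", fake_stt, fake_llm, fake_tts))  # b'You asked: what is WER'
```

Keeping each stage behind a plain callable is what makes it possible to swap one provider for another without touching the rest of the chain.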

Understanding Key Concepts in the VideoSDK Framework

Agent

The `Agent` class is the core representation of your AI Voice Agent. It defines the agent's behavior and interactions.

Cascading Pipeline in AI Voice Agents

The `CascadingPipeline` manages the flow of audio processing, from Speech-to-Text (STT) to Language Model (LLM) to Text-to-Speech (TTS).

VAD & Turn Detector for AI Voice Agents

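Voice Activity Detection (VAD) and Turn Detection determine when the agent should listen and when it should respond. VAD detects whether incoming audio contains speech, and the turn detector estimates whether the user has finished speaking, so the agent neither interrupts nor waits too long.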
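As a rough intuition for how these two decisions differ, the toy sketch below flags speech per audio frame with an energy threshold and then declares the turn over after a run of silent frames. This is a deliberately simple stand-in: production VAD and turn-detection plugins are typically model-based, and the function names, the RMS threshold, and the `min_silence_frames` parameter are all assumptions made for illustration.

```python
import math
from typing import List, Sequence


def frame_rms(frame: Sequence[float]) -> float:
    """Root-mean-square amplitude of one frame of samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(sample * sample for sample in frame) / len(frame))


def detect_speech(frames: Sequence[Sequence[float]], threshold: float = 0.1) -> List[bool]:
    """Mark each frame as speech (True) when its RMS energy reaches the threshold."""
    return [frame_rms(frame) >= threshold for frame in frames]


def end_of_turn(speech_flags: Sequence[bool], min_silence_frames: int = 3) -> bool:
    """True once speech has occurred and is followed by enough trailing silence."""
    if True not in speech_flags:
        return False  # the user has not spoken yet
    trailing_silence = 0
    for is_speech in reversed(speech_flags):
        if is_speech:
            break
        trailing_silence += 1
    return trailing_silence >= min_silence_frames


silence = [0.0, 0.0, 0.0, 0.0]
speech = [0.5, -0.5, 0.5, -0.5]
flags = detect_speech([silence, speech, silence, silence, silence])
print(flags)               # [False, True, False, False, False]
print(end_of_turn(flags))  # True
```

Note that the RMS threshold here is an amplitude value and is not comparable to the probability-style thresholds that model-based detectors expose.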

Setting Up the Development Environment

Prerequisites

Ensure you have Python 3.11+ installed and a VideoSDK account. Sign up at app.videosdk.live to obtain necessary API credentials.

Step 1: Create a Virtual Environment

To manage dependencies, create a virtual environment:

    python -m venv venv
    source venv/bin/activate  # On Windows use `venv\Scripts\activate`

Step 2: Install Required Packages

Install the VideoSDK and other necessary packages:

    pip install videosdk-agents videosdk-plugins

Step 3: Configure API Keys in a .env file

Create a `.env` file in your project directory and add your VideoSDK API keys:

    VIDEOSDK_API_KEY=your_api_key_here
    VIDEOSDK_SECRET_KEY=your_secret_key_here
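Before starting the agent, it can help to confirm that the expected keys are actually present. The following is a minimal sketch using only the standard library; `parse_env` and `missing_keys` are hypothetical helpers, and the parser handles only simple `KEY=value` lines. The STT, LLM, and TTS providers used later in the tutorial will likely need their own keys as well, but their variable names are not given here, so they are left out.

```python
from typing import Dict, List, Mapping, Sequence

REQUIRED_KEYS = ("VIDEOSDK_API_KEY", "VIDEOSDK_SECRET_KEY")


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=value lines, skipping blanks and # comments."""
    values: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(env: Mapping[str, str], required: Sequence[str] = REQUIRED_KEYS) -> List[str]:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not env.get(key)]


sample = "VIDEOSDK_API_KEY=abc123\n# secret key not set yet\n"
print(missing_keys(parse_env(sample)))  # ['VIDEOSDK_SECRET_KEY']
```

In a real script you would read the file contents with `open(".env").read()` or check `os.environ` directly after loading the file with your preferred tool.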

Building the AI Voice Agent: A Step-by-Step Guide

Step 4.1: Generating a VideoSDK Meeting ID

To interact with the agent, you need a meeting ID. Use the VideoSDK API to generate one.
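The tutorial does not show the request itself, so the sketch below is an assumption-heavy illustration of what such a call might look like with the standard library. The endpoint URL, the use of the auth token directly in the `Authorization` header, and the `roomId` response field are assumptions to confirm against the VideoSDK API reference; `build_create_room_request` and `create_meeting_id` are hypothetical helper names.

```python
import json
import urllib.request

# Assumed endpoint; confirm in the VideoSDK API reference before relying on it.
ROOMS_URL = "https://api.videosdk.live/v2/rooms"


def build_create_room_request(token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that asks for a new room."""
    return urllib.request.Request(
        ROOMS_URL,
        data=b"{}",
        method="POST",
        headers={"Authorization": token, "Content-Type": "application/json"},
    )


def create_meeting_id(token: str) -> str:
    """Send the request and return the meeting ID from the JSON response."""
    request = build_create_room_request(token)
    with urllib.request.urlopen(request, timeout=10) as response:
        body = json.load(response)
    return body["roomId"]  # assumed response field name
```

Calling `create_meeting_id(token)` with a valid VideoSDK auth token performs the network request; building the request separately makes it easy to inspect the headers first without contacting the server.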

Step 4.2: Creating the Custom Agent Class

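    from videosdk.agents import Agent

    # Placeholder prompt: the tutorial does not show how agent_instructions is defined.
    agent_instructions = "You are a voice assistant that answers questions about Word Error Rate (WER)."

    class MyVoiceAgent(Agent):
        def __init__(self):
            super().__init__(instructions=agent_instructions)

        async def on_enter(self):
            await self.session.say("Hello! How can I help?")

        async def on_exit(self):
            await self.session.say("Goodbye!")

This class extends the `Agent` class, setting up initial instructions and defining entry and exit behaviors.

Step 4.3: Defining the Core Pipeline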

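    from videosdk.agents import CascadingPipeline
    from videosdk.plugins.deepgram import DeepgramSTT
    from videosdk.plugins.openai import OpenAILLM
    from videosdk.plugins.elevenlabs import ElevenLabsTTS
    from videosdk.plugins.silero import SileroVAD
    from videosdk.plugins.turn_detector import TurnDetector

    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),  # speech-to-text
        llm=OpenAILLM(model="gpt-4o"),                   # response generation
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),    # text-to-speech
        vad=SileroVAD(threshold=0.35),                   # voice activity detection
        turn_detector=TurnDetector(threshold=0.8),       # end-of-turn detection
    )

The `CascadingPipeline` integrates various plugins to process audio input and generate responses.

Step 4.4: Managing the Session and Startup Logic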

    import asyncio
    from videosdk.agents import AgentSession, ConversationFlow, JobContext

    async def start_session(context: JobContext):
        agent = MyVoiceAgent()
        conversation_flow = ConversationFlow(agent)
        session = AgentSession(
            agent=agent,
            pipeline=pipeline,
            conversation_flow=conversation_flow,
        )
        try:
            await context.connect()
            await session.start()
            # Keep the session alive until the process is interrupted.
            await asyncio.Event().wait()
        finally:
            # Release resources even if startup fails or the process is interrupted.
            await session.close()
            await context.shutdown()

This function manages the agent session: it connects to the meeting, starts the session, and closes everything cleanly on shutdown.

Running and Testing the Agent

Step 5.1: Running the Python Script

Execute the script using:

    python main.py

Step 5.2: Interacting with the Agent in the AI Agent Playground

Use the playground link provided in the console to interact with your agent and test its capabilities.

Advanced Features and Customizations

Extending Functionality with Custom Tools

Explore adding new capabilities to your agent by integrating custom plugins and tools.
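For a WER-focused agent, one natural custom capability is a function that breaks an error rate down into substitutions, deletions, and insertions. The sketch below is plain Python: `wer_breakdown` is a hypothetical helper, and how such a function is registered as a tool with the framework is not covered here, so that step is omitted.

```python
from typing import Dict, List, Tuple

Cell = Tuple[int, int, int, int]  # (total edits, substitutions, deletions, insertions)


def wer_breakdown(reference: str, hypothesis: str) -> Dict[str, float]:
    """Return WER plus the edit counts from one minimum-cost word alignment."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # dp[i][j] describes the cheapest alignment of ref[:i] with hyp[:j].
    dp: List[List[Cell]] = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        dp[i][0] = (i, 0, i, 0)  # only deletions
    for j in range(1, len(hyp) + 1):
        dp[0][j] = (j, 0, 0, j)  # only insertions

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost, subs, dels, ins = dp[i - 1][j - 1]
            if ref[i - 1] == hyp[j - 1]:
                diagonal = (cost, subs, dels, ins)          # match
            else:
                diagonal = (cost + 1, subs + 1, dels, ins)  # substitution
            cost, subs, dels, ins = dp[i - 1][j]
            deletion = (cost + 1, subs, dels + 1, ins)
            cost, subs, dels, ins = dp[i][j - 1]
            insertion = (cost + 1, subs, dels, ins + 1)
            dp[i][j] = min(diagonal, deletion, insertion, key=lambda cell: cell[0])

    total, subs, dels, ins = dp[len(ref)][len(hyp)]
    return {
        "wer": total / len(ref),
        "substitutions": subs,
        "deletions": dels,
        "insertions": ins,
        "reference_words": len(ref),
    }
```

When several alignments share the same minimum cost, the split between error types can differ; this sketch prefers a match or substitution over a deletion or insertion on ties.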

Exploring Other Plugins

The VideoSDK framework supports various plugins for enhanced functionality. Experiment with different configurations to suit your needs.

Troubleshooting Common Issues

API Key and Authentication Errors

Ensure your API keys are correctly configured in the `.env` file.

Audio Input/Output Problems

Check your microphone and speaker settings if you encounter audio issues.

Dependency and Version Conflicts

Ensure all dependencies are compatible with Python 3.11+ and the VideoSDK framework.
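A quick way to start diagnosing such conflicts is to print the interpreter version and the installed versions of the relevant packages. This is a small sketch using only the standard library; the helper names are hypothetical, and the package name passed in should match whatever you installed earlier.

```python
import sys
from importlib import metadata
from typing import Dict, Optional, Sequence, Tuple


def python_is_supported(minimum: Tuple[int, int] = (3, 11)) -> bool:
    """Check that the running interpreter meets the minimum version."""
    return sys.version_info >= minimum


def installed_versions(packages: Sequence[str]) -> Dict[str, Optional[str]]:
    """Map each package name to its installed version, or None if not installed."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


print("Python OK:", python_is_supported())
print(installed_versions(["videosdk-agents"]))
```

A value of `None` for a package usually means it was installed into a different environment than the one currently active.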

Conclusion

Summary of What You've Built

You've successfully built an AI Voice Agent that answers questions about Word Error Rate (WER) using the VideoSDK framework.

Next Steps and Further Learning

Consider exploring additional features and customizations to enhance your agent's capabilities further.

