Introduction to AI Voice Agents: How to Scale a Voice Agent

AI Voice Agents are sophisticated systems designed to interact with users through voice commands, processing natural language to perform tasks, answer questions, or provide information. These agents are increasingly important in industries looking to scale their customer service, enhance user experience, and automate routine tasks.

What is an AI Voice Agent?

An AI Voice Agent is a software application capable of understanding and responding to human speech. It utilizes technologies such as Speech-to-Text (STT), Text-to-Speech (TTS), and Natural Language Processing (NLP) to interpret and generate human-like responses.

Why are they important for scaling?

Voice agents are crucial in scaling operations, especially in customer service sectors, by handling multiple queries simultaneously, reducing wait times, and providing consistent service. They enable businesses to manage increased loads without a proportional increase in resources.
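To make "handling multiple queries simultaneously" concrete, here is a minimal asyncio sketch (plain Python, not VideoSDK code). Each simulated call is a coroutine, and an `asyncio.Semaphore` caps how many run at once, so load beyond capacity waits in line instead of overwhelming downstream services. `handle_call`, `serve_calls`, and the 0.01-second delay are hypothetical stand-ins for a real voice session.

```python
import asyncio

stats = {"active": 0, "peak": 0}  # tracks how many calls run at the same time


async def handle_call(call_id: int, limit: asyncio.Semaphore) -> str:
    """Hypothetical stand-in for one voice session (STT -> LLM -> TTS)."""
    async with limit:  # waits here while max_concurrent calls are already active
        stats["active"] += 1
        stats["peak"] = max(stats["peak"], stats["active"])
        await asyncio.sleep(0.01)  # simulated I/O-bound work such as API calls
        stats["active"] -= 1
        return f"call {call_id} handled"


async def serve_calls(n_calls: int, max_concurrent: int) -> list[str]:
    """Handle n_calls concurrently, with at most max_concurrent active at once."""
    limit = asyncio.Semaphore(max_concurrent)
    return await asyncio.gather(*(handle_call(i, limit) for i in range(n_calls)))


results = asyncio.run(serve_calls(n_calls=100, max_concurrent=20))
print(len(results), "calls handled; peak concurrency:", stats["peak"])
# 100 calls handled; peak concurrency: 20
```

Because the work is I/O-bound, the 100 calls finish in roughly five sleep intervals rather than a hundred, while the semaphore keeps concurrency bounded.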

Core Components of a Voice Agent

- Speech-to-Text (STT): Converts spoken language into text, using plugins such as the Deepgram STT Plugin for voice agent.

- Large Language Model (LLM): Interprets the transcribed text and generates a response, using plugins such as the OpenAI LLM Plugin for voice agent.

- Text-to-Speech (TTS): Converts the response text back into spoken language, with options such as the ElevenLabs TTS Plugin for voice agent.
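The division of labor among the three components can be expressed as typed interfaces. The sketch below is framework-agnostic and hypothetical: `SpeechToText`, `LanguageModel`, `TextToSpeech`, `run_turn`, and the toy classes are not VideoSDK APIs, and the toy implementations just pass text through as UTF-8 bytes so the example runs without any provider keys.

```python
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def respond(self, text: str) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


class ToySTT:
    def transcribe(self, audio: bytes) -> str:
        # Pretend the "audio" is UTF-8 text; a real STT would run a speech model
        return audio.decode("utf-8")


class ToyLLM:
    def respond(self, text: str) -> str:
        # A real LLM would generate an answer; this one just echoes the request
        return f"You said: {text}"


class ToyTTS:
    def synthesize(self, text: str) -> bytes:
        # A real TTS would return audio samples; this returns encoded text
        return text.encode("utf-8")


def run_turn(audio: bytes, stt: SpeechToText, llm: LanguageModel, tts: TextToSpeech) -> bytes:
    """One conversational turn: speech in, speech out."""
    transcript = stt.transcribe(audio)
    reply = llm.respond(transcript)
    return tts.synthesize(reply)


print(run_turn(b"hello", ToySTT(), ToyLLM(), ToyTTS()))  # b'You said: hello'
```

Because `run_turn` depends only on the interfaces, replacing one provider means passing a different object, much as `CascadingPipeline` in this tutorial takes separate `stt`, `llm`, and `tts` arguments.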

What You'll Build in This Tutorial

In this guide, you will build a scalable AI Voice Agent with the VideoSDK framework, combining STT, LLM, and TTS plugins into a responsive voice interaction system. For a quick setup, refer to the Voice Agent Quick Start Guide.

Architecture and Core Concepts

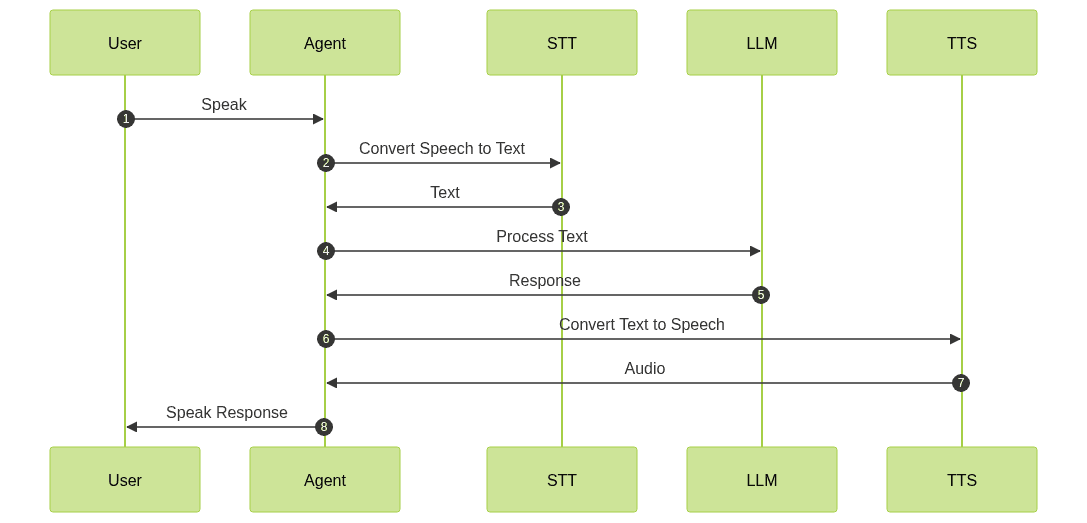

High-Level Architecture Overview

The architecture of an AI Voice Agent is a sequence of stages that carries data from the user's speech to the agent's spoken reply: capture the audio input, convert it to text, interpret the text to determine the user's intent, generate a response, and convert that response back to speech. In the VideoSDK framework, this sequence is handled by a cascading pipeline (see Cascading pipeline in AI voice Agents).
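A cascading pipeline does not have to finish one stage before starting the next. This hypothetical asyncio sketch (not the VideoSDK implementation) connects three stages with queues so each one processes a chunk as soon as the previous stage emits it. The stage transforms are string placeholders for STT, LLM, and TTS, and `None` marks the end of the stream.

```python
import asyncio
from typing import Callable, Optional


async def stage(inbox: asyncio.Queue, outbox: asyncio.Queue, transform: Callable[[str], str]) -> None:
    """Forward transformed items from inbox to outbox until the None sentinel arrives."""
    while True:
        item: Optional[str] = await inbox.get()
        if item is None:  # end-of-stream marker: pass it downstream and stop
            await outbox.put(None)
            return
        await asyncio.sleep(0)  # yield to other stages, as real network I/O would
        await outbox.put(transform(item))


async def run_pipeline(chunks: list[str]) -> list[str]:
    """Push chunks through placeholder STT -> LLM -> TTS stages running concurrently."""
    audio_in, text, reply, audio_out = (asyncio.Queue() for _ in range(4))
    tasks = [
        asyncio.create_task(stage(audio_in, text, lambda a: f"text({a})")),     # STT
        asyncio.create_task(stage(text, reply, lambda t: f"reply({t})")),       # LLM
        asyncio.create_task(stage(reply, audio_out, lambda r: f"audio({r})")),  # TTS
    ]
    for chunk in chunks:
        await audio_in.put(chunk)
    await audio_in.put(None)

    outputs = []
    while (item := await audio_out.get()) is not None:
        outputs.append(item)
    await asyncio.gather(*tasks)  # every stage has exited after forwarding None
    return outputs


print(asyncio.run(run_pipeline(["hi", "there"])))
# ['audio(reply(text(hi)))', 'audio(reply(text(there)))']
```

Overlapping the stages this way is one reason a cascaded agent can start speaking before the whole input has been processed.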

Understanding Key Concepts in the VideoSDK Framework

- Agent: The core class representing your bot, responsible for handling interactions.

- CascadingPipeline: Manages the flow of audio processing through STT, LLM, and TTS.

- VAD & TurnDetector: Voice activity detection (VAD) detects when the user is speaking, and the turn detector estimates when the user has finished their turn, so the agent knows when to listen and when to respond. See Turn detector for AI voice Agents for details.
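The interplay between VAD and turn detection can be illustrated with a simplified, hypothetical sketch. It substitutes a basic energy threshold for Silero's neural VAD and a caller-supplied probability for the turn-detector model; `is_speech`, `end_of_turn`, the sample frames, and the silent-frame count are assumptions. The 0.8 default simply reuses the value this tutorial passes to `TurnDetector(threshold=0.8)`; the real model's semantics may differ.

```python
import math


def frame_rms(samples: list[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1.0, 1.0])."""
    return math.sqrt(sum(s * s for s in samples) / len(samples)) if samples else 0.0


def is_speech(samples: list[float], energy_threshold: float = 0.02) -> bool:
    """Energy-based stand-in for a VAD: loud frames count as speech."""
    return frame_rms(samples) >= energy_threshold


def end_of_turn(
    recent_speech_flags: list[bool],
    turn_probability: float,
    min_silent_frames: int = 3,
    turn_threshold: float = 0.8,
) -> bool:
    """Respond only after a run of silent frames AND a confident end-of-turn score.

    turn_probability stands in for a turn-detector model's estimate that the
    user has finished speaking.
    """
    trailing = recent_speech_flags[-min_silent_frames:]
    silent = len(trailing) == min_silent_frames and not any(trailing)
    return silent and turn_probability >= turn_threshold


loud = [0.3, -0.25, 0.28, -0.3]
quiet = [0.001, -0.002, 0.0, 0.001]
flags = [is_speech(loud), is_speech(loud), is_speech(quiet), is_speech(quiet), is_speech(quiet)]
print(flags)                    # [True, True, False, False, False]
print(end_of_turn(flags, 0.9))  # True: silence and a confident model
print(end_of_turn(flags, 0.5))  # False: a pause, but the user may continue
```

Requiring both signals is what lets an agent tolerate mid-sentence pauses without interrupting the user.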

Setting Up the Development Environment

Prerequisites

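To begin, make sure you have Python 3.11 or later installed and a VideoSDK account, which you can create on the VideoSDK website. Because the pipeline in this tutorial uses Deepgram, OpenAI, and ElevenLabs, you will also need an API key from each of those providers.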
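A quick way to confirm that an interpreter meets the Python 3.11+ requirement before installing anything (run it with the same `python` you will use to create the virtual environment). `python_ok` is a hypothetical helper name:

```python
import sys

REQUIRED = (3, 11)


def python_ok(version_info=sys.version_info, required=REQUIRED) -> bool:
    """Return True if the given (major, minor, ...) version meets the requirement."""
    return tuple(version_info[:2]) >= required


status = "OK" if python_ok() else f"need {REQUIRED[0]}.{REQUIRED[1]}+"
print(f"Python {sys.version.split()[0]}: {status}")
```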

Step 1: Create a Virtual Environment

Create a virtual environment to manage dependencies:

```bash
python -m venv voice_agent_env
source voice_agent_env/bin/activate  # On Windows, use voice_agent_env\Scripts\activate
```

Step 2: Install Required Packages

Use pip to install the necessary packages:

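```bash
pip install videosdk
pip install python-dotenv
```

The agent code also imports `videosdk.agents` and the Silero, turn detector, Deepgram, OpenAI, and ElevenLabs plugins. If your installation does not include them, install the corresponding packages as described in the VideoSDK documentation.

Step 3: Configure API Keys in a .env File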

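Create a `.env` file in your project directory and add your VideoSDK API key:

```
VIDEOSDK_API_KEY=your_api_key_here
```

The Deepgram, OpenAI, and ElevenLabs plugins also need their own API keys; add them to the same file using the environment variable names given in each plugin's documentation.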
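Before starting the agent, it can help to fail fast if a key is missing. This sketch loads `.env` with python-dotenv's `load_dotenv()` (installed in Step 2) when it is available and reports any unset variables. Only `VIDEOSDK_API_KEY` comes from this tutorial; the provider variable names in `REQUIRED_KEYS` are assumptions, so replace them with the names your plugins actually read.

```python
import os

try:
    from dotenv import load_dotenv  # provided by the python-dotenv package
except ImportError:  # fall back to the existing environment if it isn't installed
    load_dotenv = None

# VIDEOSDK_API_KEY is from this tutorial; the other names are assumed examples
REQUIRED_KEYS = ["VIDEOSDK_API_KEY", "DEEPGRAM_API_KEY", "OPENAI_API_KEY", "ELEVENLABS_API_KEY"]


def missing_keys(required: list[str]) -> list[str]:
    """Return the required environment variables that are unset or empty."""
    if load_dotenv is not None:
        load_dotenv()  # loads a .env file without overriding variables already set
    return [name for name in required if not os.environ.get(name)]


missing = missing_keys(REQUIRED_KEYS)
print("Missing keys:", ", ".join(missing) if missing else "none")
```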

Building the AI Voice Agent: A Step-by-Step Guide

Here's the complete code. Save it as `main.py` in your project directory:

```python
import asyncio

from dotenv import load_dotenv
from videosdk.agents import Agent, AgentSession, CascadingPipeline, JobContext, RoomOptions, WorkerJob, ConversationFlow
from videosdk.plugins.silero import SileroVAD
from videosdk.plugins.turn_detector import TurnDetector, pre_download_model
from videosdk.plugins.deepgram import DeepgramSTT
from videosdk.plugins.openai import OpenAILLM
from videosdk.plugins.elevenlabs import ElevenLabsTTS

# Load API keys from the .env file into the process environment
load_dotenv()

# Pre-download the turn detector model so the first session doesn't wait for it
pre_download_model()

# System instructions for the LLM, written as a JSON-formatted string
agent_instructions = """{
  "persona": "scalable voice agent consultant",
  "capabilities": [
    "Provide strategies and best practices for scaling voice agents",
    "Offer insights into infrastructure and technology choices",
    "Guide on optimizing performance and user experience",
    "Advise on handling increased user load and data management"
  ],
  "constraints": [
    "You are not a software developer and cannot provide specific code implementations",
    "Always recommend consulting with a technical expert for detailed architecture planning",
    "Emphasize the importance of security and privacy compliance"
  ]
}"""


class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        # Greet the user when the agent joins the session
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        # Say goodbye when the session ends
        await self.session.say("Goodbye!")


async def start_session(context: JobContext):
    # Create agent and conversation flow
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)

    # Create the STT -> LLM -> TTS pipeline with VAD and turn detection
    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8),
    )

    session = AgentSession(
        agent=agent,
        pipeline=pipeline,
        conversation_flow=conversation_flow,
    )

    try:
        await context.connect()
        await session.start()
        # Keep the session running until manually terminated
        await asyncio.Event().wait()
    finally:
        # Clean up resources when done
        await session.close()
        await context.shutdown()


def make_context() -> JobContext:
    room_options = RoomOptions(
        # room_id="YOUR_MEETING_ID",  # Set to join a pre-created room; omit to auto-create
        name="VideoSDK Cascaded Agent",
        playground=True,
    )
    return JobContext(room_options=room_options)


if __name__ == "__main__":
    job = WorkerJob(entrypoint=start_session, jobctx=make_context)
    job.start()
```

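Step 4.1: Generating a VideoSDK Meeting ID

To generate a meeting ID, use the following curl command, replacing `YOUR_TOKEN` with your VideoSDK auth token (generated from your API key and secret):

```bash
curl -X POST https://api.videosdk.live/v1/meetings \
  -H "Authorization: YOUR_TOKEN" \
  -H "Content-Type: application/json" \
  -d '{}'
```

The response contains a meeting ID. To have the agent join that room instead of auto-creating one, uncomment `room_id` in `make_context` and set it to this ID.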
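If you prefer to create the meeting from Python, the same request can be built with the standard library. This sketch mirrors the curl command above (same URL, headers, and empty JSON body); `build_meeting_request` and `create_meeting` are hypothetical helper names, and because the response format is not documented here, `create_meeting` simply returns the decoded JSON for you to inspect.

```python
import json
import urllib.request

API_URL = "https://api.videosdk.live/v1/meetings"  # same endpoint as the curl example


def build_meeting_request(token: str) -> urllib.request.Request:
    """Build (without sending) the POST request that the curl command sends."""
    return urllib.request.Request(
        API_URL,
        data=json.dumps({}).encode("utf-8"),  # empty JSON body, like -d '{}'
        headers={"Authorization": token, "Content-Type": "application/json"},
        method="POST",
    )


def create_meeting(token: str) -> dict:
    """Send the request and return the decoded JSON response for inspection."""
    with urllib.request.urlopen(build_meeting_request(token), timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Calling `create_meeting(token)` performs the network request; pass the same value you would put in the curl `Authorization` header.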

Step 4.2: Creating the Custom Agent Class

The `MyVoiceAgent` class defines your voice agent's behavior. It inherits from `Agent`, passes `agent_instructions` to the base class, and overrides `on_enter` and `on_exit` to greet the user when the session starts and say goodbye when it ends:

```python
class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        await self.session.say("Goodbye!")
```

Step 4.3: Defining the Core Pipeline

The `CascadingPipeline` is the core of audio processing. It chains the STT (Deepgram), LLM (OpenAI), and TTS (ElevenLabs) plugins and uses Silero VAD and the turn detector to decide when the user is speaking and when they have finished, as detailed in the AI voice Agent core components overview.

```python
pipeline = CascadingPipeline(
    stt=DeepgramSTT(model="nova-2", language="en"),
    llm=OpenAILLM(model="gpt-4o"),
    tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
    vad=SileroVAD(threshold=0.35),
    turn_detector=TurnDetector(threshold=0.8),
)
```

Step 4.4: Managing the Session and Startup Logic

The `start_session` function builds the agent, pipeline, and session, connects to the room, and starts the session. It then waits indefinitely so the agent keeps running; when the process is stopped, the `finally` block closes the session and shuts down the context. For more details on managing sessions, refer to AI voice Agent Sessions.

```python
async def start_session(context: JobContext):
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)
    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8),
    )

    session = AgentSession(
        agent=agent,
        pipeline=pipeline,
        conversation_flow=conversation_flow,
    )

    try:
        await context.connect()
        await session.start()
        await asyncio.Event().wait()  # run until the process is stopped
    finally:
        # Always release resources, even if startup fails
        await session.close()
        await context.shutdown()
```

Running and Testing the Agent

Step 5.1: Running the Python Script

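To run your voice agent, execute the script (this tutorial assumes it is saved as `main.py`):

```bash
python main.py
```

This starts the agent and, because `playground=True` is set in `RoomOptions`, prints a playground link to the console.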

Step 5.2: Interacting with the Agent in the Playground

Open the playground link in your browser to talk to the agent, test voice commands, and hear its responses in real time.

Advanced Features and Customizations

Extending Functionality with Custom Tools

The VideoSDK framework lets you extend the agent beyond conversation by integrating custom tools: functions the LLM can invoke to fetch data or trigger actions in your own systems.
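Conceptually, a tool is a function the LLM can ask the agent to call by name with structured arguments. This framework-agnostic sketch shows that dispatch pattern with a hypothetical `tool` decorator and registry; it is not VideoSDK's API (see the VideoSDK documentation for how tools are registered on an `Agent`). `get_order_status` is an invented example, and the `{"name": ..., "arguments": {...}}` call shape is an assumption modeled loosely on common LLM function-calling formats.

```python
import json
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(func: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOLS[func.__name__] = func
    return func


@tool
def get_order_status(order_id: str) -> dict:
    # Hypothetical lookup; a real tool would query your order system
    return {"order_id": order_id, "status": "shipped"}


def dispatch(tool_call_json: str) -> str:
    """Execute a tool call and return a JSON result to feed back to the LLM."""
    call = json.loads(tool_call_json)
    func = TOOLS.get(call["name"])
    if func is None:
        return json.dumps({"error": f"unknown tool {call['name']!r}"})
    return json.dumps(func(**call.get("arguments", {})))


print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A42"}}'))
# {"order_id": "A42", "status": "shipped"}
```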

Exploring Other Plugins

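This tutorial uses Deepgram for STT, OpenAI for the LLM, and ElevenLabs for TTS, but each stage is passed to `CascadingPipeline` as a separate plugin, so you can swap in other providers to suit your latency, cost, or language requirements.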
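One way to make provider choices configurable (for example, per deployment) is a small registry that maps provider names to factory functions. In this hypothetical sketch, `Component`, `REGISTRY`, and `build_components` are placeholders rather than real plugins; in a real project each factory would construct the corresponding plugin, such as `DeepgramSTT(...)`. The model names in `config` are the ones used in this tutorial.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Component:
    """Placeholder for a plugin instance (an STT, LLM, or TTS object)."""
    stage: str
    provider: str
    options: dict


# Hypothetical registry: stage -> provider name -> factory
REGISTRY: dict[str, dict[str, Callable[..., Component]]] = {
    "stt": {"deepgram": lambda **o: Component("stt", "deepgram", o)},
    "llm": {"openai": lambda **o: Component("llm", "openai", o)},
    "tts": {"elevenlabs": lambda **o: Component("tts", "elevenlabs", o)},
}


def build_components(config: dict[str, dict]) -> dict[str, Component]:
    """Instantiate one component per stage from {"stage": {"provider": ..., **options}}."""
    components = {}
    for stage, settings in config.items():
        settings = dict(settings)  # copy so the caller's config is not mutated
        provider = settings.pop("provider")
        try:
            factory = REGISTRY[stage][provider]
        except KeyError:
            raise ValueError(f"no {stage!r} provider named {provider!r}") from None
        components[stage] = factory(**settings)
    return components


config = {
    "stt": {"provider": "deepgram", "model": "nova-2", "language": "en"},
    "llm": {"provider": "openai", "model": "gpt-4o"},
    "tts": {"provider": "elevenlabs", "model": "eleven_flash_v2_5"},
}
parts = build_components(config)
print(parts["stt"])
```

Adding a provider then means registering one more factory; the code that consumes the components stays the same.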

Troubleshooting Common Issues

API Key and Authentication Errors

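Make sure each API key is set correctly in the `.env` file, that the file is loaded before the agent starts (for example, with `load_dotenv()` from python-dotenv), and that your VideoSDK account has access to the services you are using.

Audio Input/Output Problems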

Check that your microphone and speakers are configured correctly and that your browser is allowed to use the microphone when you test in the playground.

Dependency and Version Conflicts

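Make sure all dependencies are installed in the virtual environment you created in Step 1 and that their versions are compatible with one another; this tutorial does not pin specific versions.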

Conclusion

Summary of What You've Built

You've built an AI Voice Agent with the VideoSDK framework that handles voice interactions through a cascading STT, LLM, and TTS pipeline. For deployment details, consult the AI voice Agent deployment guide.

Next Steps and Further Learning

Explore additional features and plugins to enhance your agent's capabilities, and consider integrating with other systems for broader applications.


FAQ