Introduction to AI Voice Agents in BPO Companies

What is an AI Voice Agent?

AI Voice Agents are intelligent systems designed to interact with users through voice commands. They leverage speech recognition, natural language processing, and speech synthesis technologies to understand and respond to user queries. These agents can automate routine tasks, provide information, and enhance customer service experiences.

Why are they important for the BPO industry?

In the BPO (Business Process Outsourcing) industry, AI Voice Agents play a crucial role in improving efficiency and reducing operational costs. They handle customer inquiries, perform call routing, collect data, and provide multilingual support, allowing human agents to focus on more complex issues. This automation leads to faster response times and improved customer satisfaction.

Core Components of a Voice Agent

- Speech-to-Text (STT): Converts spoken language into text, using tools like the Deepgram STT Plugin for voice agent.

- Text-to-Speech (TTS): Converts text responses into spoken language.

- Language Model (LLM): Processes the text to generate meaningful responses, often using the OpenAI LLM Plugin for voice agent.

- Voice Activity Detection (VAD): Identifies when a user is speaking.

What You'll Build in This Tutorial

In this tutorial, you'll learn how to build a fully functional AI Voice Agent tailored for BPO companies using the VideoSDK framework. We'll cover everything from setting up the development environment to deploying and testing the agent.

Architecture and Core Concepts

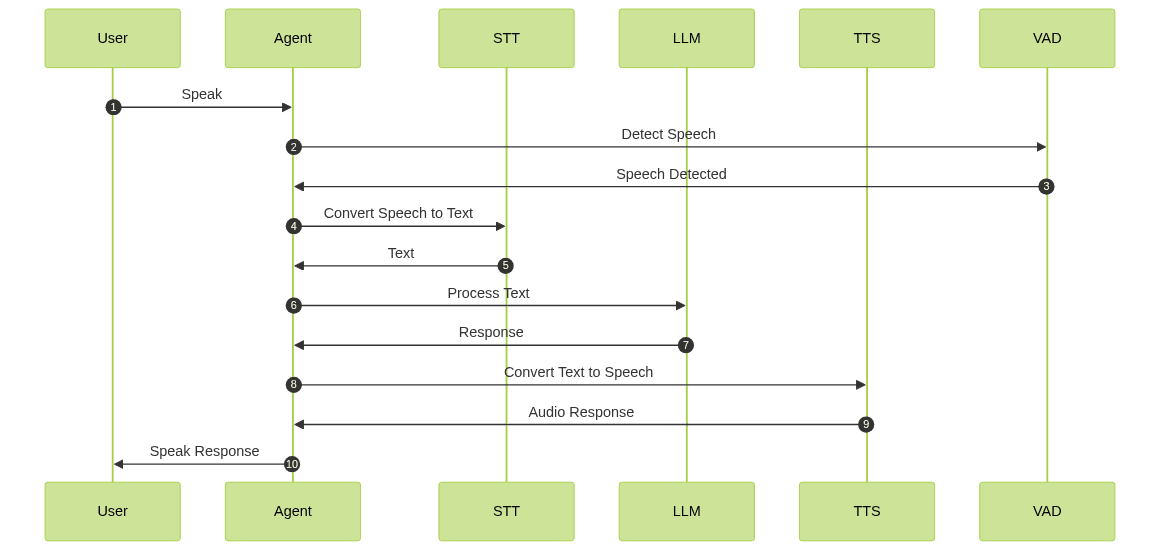

High-Level Architecture Overview

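The architecture of an AI Voice Agent involves several components working together to process and respond to user inputs. At a high level, VAD detects when the user is speaking, STT transcribes the audio into text, the LLM generates a reply from that text, and TTS converts the reply back into audio.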
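As a rough illustration of how these components hand off to one another, here is a minimal sketch of a single conversational turn. The three stage functions are hypothetical stand-ins, not VideoSDK, Deepgram, OpenAI, or ElevenLabs calls; they only show that each stage's output becomes the next stage's input.

```python
from typing import Callable


# Hypothetical stand-ins for the real STT, LLM, and TTS services.
def fake_stt(audio: bytes) -> str:
    """Pretend to transcribe audio; here the 'audio' is just UTF-8 text."""
    return audio.decode("utf-8")


def fake_llm(text: str) -> str:
    """Pretend to generate a reply to the transcript."""
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    """Pretend to synthesize speech; returns the reply as bytes."""
    return text.encode("utf-8")


def run_turn(
    audio: bytes,
    stt: Callable[[bytes], str] = fake_stt,
    llm: Callable[[str], str] = fake_llm,
    tts: Callable[[str], bytes] = fake_tts,
) -> bytes:
    """Run one turn through the cascade: audio -> text -> reply -> audio."""
    transcript = stt(audio)
    reply = llm(transcript)
    return tts(reply)
```

Because each stage is passed in as a plain callable, any one of them can be swapped out without touching the others, which is the main appeal of a cascaded design.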

Understanding Key Concepts in the VideoSDK Framework

- Agent: The core class representing your bot. It handles interactions and manages the conversation flow.

- CascadingPipeline: This defines the processing flow of audio inputs and outputs, including STT, LLM, and TTS modules.

- VAD & TurnDetector: These components determine when the agent should listen and respond, ensuring seamless interaction.
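To give a feel for what voice activity detection and turn detection decide, the sketch below uses a deliberately simple approach: a frame counts as speech when its RMS energy crosses a threshold, and the turn ends after a run of silent frames. This is an assumption-laden toy, not how SileroVAD or VideoSDK's TurnDetector work internally (those rely on trained models), and the threshold values here are arbitrary.

```python
import math
from typing import Sequence


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1, 1])."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def is_speech(frame: Sequence[float], threshold: float = 0.1) -> bool:
    """Toy VAD: treat the frame as speech if its energy reaches the threshold."""
    return rms(frame) >= threshold


def end_of_turn_index(
    frames: Sequence[Sequence[float]],
    threshold: float = 0.1,
    silence_frames: int = 3,
) -> int:
    """Return the index of the frame where the user's turn ends, or -1.

    The turn ends once speech has been heard and is followed by
    `silence_frames` consecutive non-speech frames.
    """
    heard_speech = False
    silent_run = 0
    for i, frame in enumerate(frames):
        if is_speech(frame, threshold):
            heard_speech = True
            silent_run = 0
        elif heard_speech:
            silent_run += 1
            if silent_run >= silence_frames:
                return i
    return -1
```

A lower threshold makes the detector more sensitive to quiet speech but also to background noise, which is the same trade-off you tune when adjusting a real VAD's threshold.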

Setting Up the Development Environment

Prerequisites

Before you begin, ensure you have the following:

- Python 3.7 or higher

- Access to the VideoSDK platform

- API keys for Deepgram, OpenAI, and ElevenLabs

Step 1: Create a Virtual Environment

Create a virtual environment to manage dependencies:

    python -m venv venv
    source venv/bin/activate  # On Windows use `venv\Scripts\activate`

Step 2: Install Required Packages

Install the necessary packages using pip:

    pip install videosdk-agents videosdk-plugins

Step 3: Configure API Keys in a .env file

Create a .env file in your project directory to store your API keys:

    DEEPGRAM_API_KEY=your_deepgram_api_key
    OPENAI_API_KEY=your_openai_api_key
    ELEVENLABS_API_KEY=your_elevenlabs_api_key
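Python does not read a .env file on its own, so it can help to fail early when a key is missing. The sketch below checks the three variables named above; it assumes they have already been loaded into the process environment (for example by exporting them in your shell or by calling python-dotenv's `load_dotenv()`). The helper name `missing_keys` is illustrative and not part of any SDK.

```python
import os
from typing import List, Mapping

REQUIRED_KEYS = ("DEEPGRAM_API_KEY", "OPENAI_API_KEY", "ELEVENLABS_API_KEY")


def missing_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the required keys that are unset or blank in `env`."""
    return [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]
```

Calling `missing_keys()` at the top of your script and stopping if the list is non-empty usually gives a clearer error than an authentication failure halfway through a call.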

Building the AI Voice Agent: A Step-by-Step Guide

Step 4.1: Generating a VideoSDK Meeting ID

To interact with the agent, you'll need a meeting ID. Use the VideoSDK API to generate one:

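    # Assuming you have the VideoSDK API client set up.
    # `videosdk.create_meeting_id()` is a placeholder for whatever call
    # your client exposes for creating a meeting.
    meeting_id = videosdk.create_meeting_id()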
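If you do not have a client library at hand, one possible approach is to call VideoSDK's REST API directly. The sketch below rests on several assumptions that you should verify against the current VideoSDK API reference: that rooms are created with a POST to `https://api.videosdk.live/v2/rooms`, that the auth token is sent as-is in the `Authorization` header, and that the response JSON carries the ID in a `roomId` field. The function names are illustrative.

```python
import json
import urllib.request

# Assumption: VideoSDK's REST endpoint for creating a room (meeting).
ROOMS_URL = "https://api.videosdk.live/v2/rooms"


def build_create_room_request(auth_token: str) -> urllib.request.Request:
    """Build (but do not send) the POST request that creates a room."""
    return urllib.request.Request(
        ROOMS_URL,
        data=b"{}",
        headers={
            "Authorization": auth_token,  # Assumption: raw token, no "Bearer" prefix.
            "Content-Type": "application/json",
        },
        method="POST",
    )


def create_meeting_id(auth_token: str) -> str:
    """Send the request and return the room ID from the JSON response."""
    request = build_create_room_request(auth_token)
    with urllib.request.urlopen(request, timeout=10) as response:
        body = json.load(response)
    return body["roomId"]  # Assumption: the ID is returned as "roomId".
```

Splitting request construction from sending keeps the network call in one small function, which makes the rest easy to check without contacting the API.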

Step 4.2: Creating the Custom Agent Class

Define a custom agent class to handle user interactions:

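    from videosdk.agents import Agent

    # Placeholder system prompt; adapt it to your BPO use case.
    agent_instructions = "You are a helpful voice assistant for a BPO support desk."

    class MyVoiceAgent(Agent):
        def __init__(self):
            super().__init__(instructions=agent_instructions)

        async def on_enter(self):
            # Greet the caller when the agent joins the session.
            await self.session.say("Hello! How can I help?")

        async def on_exit(self):
            await self.session.say("Goodbye!")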

Step 4.3: Defining the Core Pipeline

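Set up the cascading pipeline to process audio inputs and outputs. The import paths below assume the standard VideoSDK plugin packages; check them against your installed version:

    from videosdk.agents import CascadingPipeline
    from videosdk.plugins.deepgram import DeepgramSTT
    from videosdk.plugins.openai import OpenAILLM
    from videosdk.plugins.elevenlabs import ElevenLabsTTS
    from videosdk.plugins.silero import SileroVAD
    from videosdk.plugins.turn_detector import TurnDetector

    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8),
    )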

Step 4.4: Managing the Session and Startup Logic

Manage the AI Voice Agent session and define the startup logic:

    import asyncio
    from videosdk.agents import AgentSession, ConversationFlow, JobContext

    async def start_session(context: JobContext):
        agent = MyVoiceAgent()
        conversation_flow = ConversationFlow(agent)

        session = AgentSession(
            agent=agent,
            pipeline=pipeline,
            conversation_flow=conversation_flow,
        )

        try:
            await context.connect()
            await session.start()
            # Keep the process alive until it is cancelled or interrupted.
            await asyncio.Event().wait()
        finally:
            # Always release the session and the room connection.
            await session.close()
            await context.shutdown()
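The try/finally structure is what guarantees cleanup when the agent is stopped. The standalone sketch below shows the same pattern using only the standard library; `FakeSession` is a hypothetical stand-in that records lifecycle calls, not a VideoSDK class.

```python
import asyncio
from typing import List


class FakeSession:
    """Hypothetical stand-in for an agent session; records lifecycle calls."""

    def __init__(self) -> None:
        self.events: List[str] = []

    async def start(self) -> None:
        self.events.append("start")

    async def close(self) -> None:
        self.events.append("close")


async def run_until_stopped(session: FakeSession, stop: asyncio.Event) -> None:
    """Start the session, wait for a stop signal, and always close it."""
    try:
        await session.start()
        await stop.wait()  # Block here until something signals shutdown.
    finally:
        await session.close()  # Runs on a normal stop and on cancellation.


async def demo() -> List[str]:
    """Start the session in a task, signal it to stop, and return the events."""
    session = FakeSession()
    stop = asyncio.Event()
    task = asyncio.create_task(run_until_stopped(session, stop))
    await asyncio.sleep(0)  # Yield once so the task can start.
    stop.set()
    await task
    return session.events
```

Running `asyncio.run(demo())` returns the recorded events in order, showing that `close` is called after `start` even though the waiting coroutine never returns on its own.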

Running and Testing the Agent

Step 5.1: Running the Python Script

Save the code from the previous steps as main.py, then execute the script to start the agent:

    python main.py

Step 5.2: Interacting with the Agent in the Playground

Use the playground link printed in the console to interact with your AI Voice Agent. You can test various scenarios to see how the agent responds.

Advanced Features and Customizations

Extending Functionality with Custom Tools

Explore adding custom tools to enhance the agent's capabilities. This could include integrating additional APIs or custom logic for specific tasks.
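As one illustration of what a custom tool might look like in a BPO setting, the sketch below defines an order-status lookup and a small dispatcher that calls tools by name. Everything here is hypothetical: the in-memory `ORDER_STATUS` data, the function names, and the dispatch table are plain Python, not VideoSDK's tool API, so consult the framework's documentation for how tools are actually registered with an agent.

```python
from typing import Callable, Dict

# Hypothetical in-memory data; a real tool would query a CRM or ticketing API.
ORDER_STATUS: Dict[str, str] = {"A100": "shipped", "A101": "processing"}


def get_order_status(order_id: str) -> str:
    """Tool: look up the status of an order by its ID (case-insensitive)."""
    key = order_id.strip().upper()
    status = ORDER_STATUS.get(key)
    if status is None:
        return f"No order found with ID {key}."
    return f"Order {key} is {status}."


# Registry mapping tool names to the functions that implement them.
TOOLS: Dict[str, Callable[..., str]] = {"get_order_status": get_order_status}


def call_tool(name: str, **kwargs: str) -> str:
    """Dispatch a tool call by name, as an LLM tool-calling loop might."""
    if name not in TOOLS:
        raise KeyError(f"Unknown tool: {name}")
    return TOOLS[name](**kwargs)
```

Returning a short, speakable sentence from the tool keeps the result easy for the language model to relay to the caller.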

Exploring Other Plugins

VideoSDK offers a range of plugins. Consider experimenting with different STT, TTS, or LLM plugins to tailor the agent to your needs.

Troubleshooting Common Issues

API Key and Authentication Errors

Ensure your API keys are correctly set in the .env file and that your environment has access to these keys.

Audio Input/Output Problems

Verify that your audio devices are properly configured and that the agent has permission to access them.

Dependency and Version Conflicts

Use a virtual environment to manage dependencies and ensure compatibility with the required package versions.

Conclusion

Summary of What You've Built

You've successfully built an AI Voice Agent tailored for BPO companies, leveraging the VideoSDK framework and various plugins for STT, LLM, and TTS.

Next Steps and Further Learning

Consider exploring advanced features, such as custom plugins or integration with other systems, to expand the agent's capabilities. For a comprehensive understanding, refer to the AI Voice Agent core components overview.
