1. Introduction to AI Voice Agents for AI Voice Calls

What Is an AI Voice Agent?

An AI Voice Agent is an intelligent software system designed to interact with users through spoken language, simulating natural conversations over voice calls. These agents use advanced speech recognition, language understanding, and speech synthesis technologies to understand user intent, generate appropriate responses, and deliver them in a human-like voice.

Why Are They Important for the AI Voice Call Industry?

AI Voice Agents are transforming the AI voice call industry by automating customer support, appointment scheduling, information delivery, and more. They offer 24/7 availability, consistent service quality, and scalability, which reduces operational costs and improves the user experience. With recent advances in AI, businesses can handle high call volumes, personalize interactions, and free up human agents for complex tasks.

Core Components of a Voice Agent

- Speech-to-Text (STT): Converts user speech into text.

- Language Model (LLM): Understands user intent and generates responses.

- Text-to-Speech (TTS): Synthesizes natural-sounding voice responses.

- Voice Activity Detection (VAD): Detects when the user starts and stops speaking.

- Turn Detection: Determines when the agent should listen or speak.

What You'll Build in This Tutorial

In this tutorial, you will build a fully functional AI Voice Agent for AI voice calls using Python and the VideoSDK AI Agents framework. The agent will be able to:

- Hold a natural conversation with a user over a voice call.

- Answer general questions, guide users, and handle basic tasks.

- Run in a test playground for easy experimentation.

By the end, you'll have a ready-to-test voice agent you can extend for your own use cases.

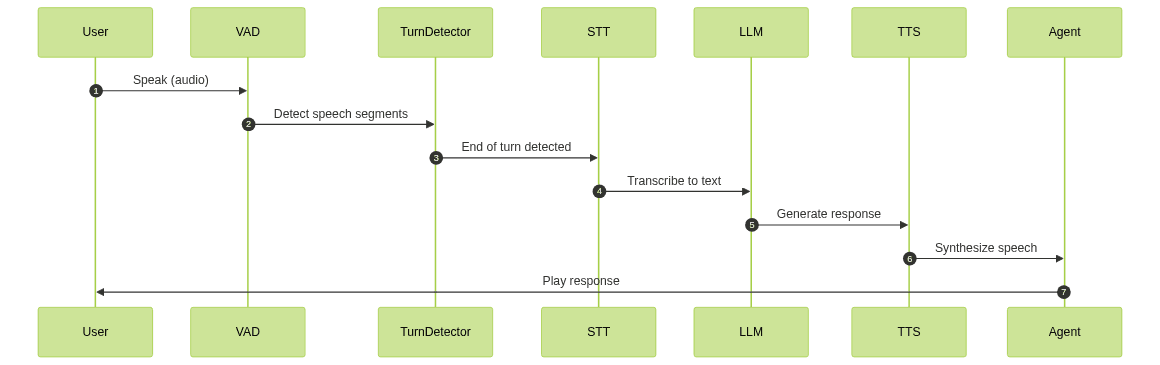

2. Architecture and Core Concepts

High-Level Architecture Overview

The AI Voice Agent follows a modular pipeline architecture. Here's how the data flows during a voice call:

- User speaks: Audio is streamed to the agent.

- Voice Activity Detection (VAD): Detects when the user is speaking.

- Turn Detection: Determines when the user has finished speaking.

- Speech-to-Text (STT): Converts audio to text.

- Language Model (LLM): Processes the text and generates a response.

- Text-to-Speech (TTS): Converts the response to audio.

- Agent speaks: The synthesized response is played to the user.
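To make the data flow concrete, here is a toy, framework-free sketch of one conversational turn. It assumes VAD and turn detection have already cut the user's audio into one complete turn, and it replaces the real STT, LLM, and TTS services with hypothetical stand-in functions (`fake_stt`, `fake_llm`, `fake_tts`) that just pass text around. It is not how VideoSDK implements its pipeline; it only shows the order of the stages and how conversation history accumulates.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable, List

# Hypothetical stage signatures: audio -> text, history -> reply, text -> audio
SttFn = Callable[[bytes], Awaitable[str]]
LlmFn = Callable[[List[dict]], Awaitable[str]]
TtsFn = Callable[[str], Awaitable[bytes]]


@dataclass
class ToyPipeline:
    stt: SttFn
    llm: LlmFn
    tts: TtsFn
    history: List[dict] = field(default_factory=list)

    async def handle_turn(self, user_audio: bytes) -> bytes:
        """Run one completed user turn through STT -> LLM -> TTS."""
        text = await self.stt(user_audio)  # Speech-to-Text
        self.history.append({"role": "user", "content": text})
        reply = await self.llm(self.history)  # Language Model
        self.history.append({"role": "assistant", "content": reply})
        return await self.tts(reply)  # Text-to-Speech


async def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already text


async def fake_llm(history: List[dict]) -> str:
    return f"You said: {history[-1]['content']}"


async def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend encoded text is synthesized audio


async def main() -> None:
    pipeline = ToyPipeline(fake_stt, fake_llm, fake_tts)
    audio_out = await pipeline.handle_turn(b"What are your hours?")
    print(audio_out.decode("utf-8"))  # prints "You said: What are your hours?"


if __name__ == "__main__":
    asyncio.run(main())
```

Keeping the history on the pipeline object is what lets the language model see earlier turns; a real deployment would also stream audio in and out rather than passing whole byte strings.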

For a more detailed overview of the AI Voice Agent core components, refer to the VideoSDK documentation, which breaks down each essential building block and its role in the agent's workflow.

[Figure: Mermaid UML sequence diagram]

Understanding Key Concepts in the VideoSDK Framework

- Agent: The core class that encapsulates your bot's behavior and instructions.

- CascadingPipeline: Orchestrates the flow of audio and text through the STT, LLM, TTS, VAD, and Turn Detection components. To learn more about how this works, see the Cascading Pipeline in AI Voice Agents documentation.

- VAD & TurnDetector: Ensure the agent listens and responds at the right moments, creating natural conversational turns. The Turn Detector for AI Voice Agents plugin is especially useful for managing conversational flow and detecting when a speaker has finished their turn.

By leveraging these abstractions, you can focus on your agent's logic while the framework handles the complexities of real-time audio processing.
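To build intuition for what VAD and turn detection decide, here is a deliberately simple sketch of silence-based endpointing. It assumes you already have one speech probability per audio frame (the kind of score a VAD model produces); the default threshold of 0.35 mirrors `SileroVAD(threshold=0.35)` in the tutorial code, but here it is just a number compared against made-up values. A turn ends after a fixed number of consecutive non-speech frames. The real `TurnDetector` plugin downloads a model (see `pre_download_model()` in the code), so it is more sophisticated than this fixed rule; treat `segment_turns` and `min_silence_frames` as hypothetical illustrations.

```python
from typing import Iterable, List, Optional, Tuple


def segment_turns(
    speech_probs: Iterable[float],
    threshold: float = 0.35,
    min_silence_frames: int = 3,
) -> List[Tuple[int, int]]:
    """Return (first_speech_frame, last_speech_frame) for each detected turn.

    A frame counts as speech when its probability is >= threshold. A turn
    ends once min_silence_frames consecutive non-speech frames follow speech,
    so short pauses inside a sentence do not split the turn.
    """
    turns: List[Tuple[int, int]] = []
    start: Optional[int] = None        # first speech frame of the open turn
    last_speech: Optional[int] = None  # most recent speech frame
    silence = 0                        # non-speech frames since last_speech

    for i, p in enumerate(speech_probs):
        if p >= threshold:
            if start is None:
                start = i
            last_speech = i
            silence = 0
        elif start is not None:
            silence += 1
            if silence >= min_silence_frames:
                turns.append((start, last_speech))
                start, last_speech, silence = None, None, 0

    # Audio ended while the user was still mid-turn: close the open turn
    if start is not None:
        turns.append((start, last_speech))
    return turns


if __name__ == "__main__":
    probs = [0.1, 0.6, 0.8, 0.2, 0.7, 0.1, 0.1, 0.1, 0.9, 0.9]
    print(segment_turns(probs))  # prints [(1, 4), (8, 9)]
```

Raising `min_silence_frames` makes the agent less likely to interrupt during pauses but slower to respond, which is the same trade-off the framework's turn detection is designed to manage.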

3. Setting Up the Development Environment

Prerequisites

- Python 3.11+ (Recommended: Python 3.11 for best compatibility)

- A VideoSDK account (Sign up for free to access API keys and the dashboard)

- API keys for Deepgram (STT), OpenAI (LLM), and ElevenLabs (TTS)

Step 1: Create a Virtual Environment

It's best practice to use a virtual environment to manage dependencies.

```bash
python3 -m venv venv
source venv/bin/activate  # On Windows: venv\Scripts\activate
```

Step 2: Install Required Packages

Install the VideoSDK AI Agents framework and required plugins:

```bash
pip install videosdk-agents videosdk-plugins-silero videosdk-plugins-turn-detector videosdk-plugins-deepgram videosdk-plugins-openai videosdk-plugins-elevenlabs
```

Step 3: Configure API Keys in a .env File

Create a .env file in your project directory and add your API keys:

```
VIDEOSDK_API_KEY=your_videosdk_api_key
DEEPGRAM_API_KEY=your_deepgram_api_key
OPENAI_API_KEY=your_openai_api_key
ELEVENLABS_API_KEY=your_elevenlabs_api_key
```

Replace the placeholder values with your actual credentials from each provider.
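Missing or empty keys are a common cause of confusing startup errors, so a small pre-flight check can help. The sketch below only inspects the process environment for the four variable names listed above. Loading the .env file through `python-dotenv` (`load_dotenv`) is an assumption and an optional extra dependency; this tutorial does not say whether the VideoSDK framework reads .env on its own.

```python
import os
from typing import List, Mapping

# Variable names taken from the .env example above
REQUIRED_KEYS = (
    "VIDEOSDK_API_KEY",
    "DEEPGRAM_API_KEY",
    "OPENAI_API_KEY",
    "ELEVENLABS_API_KEY",
)


def missing_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the required variable names that are unset or blank."""
    return [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]


def main() -> int:
    try:
        from dotenv import load_dotenv  # optional: pip install python-dotenv
        load_dotenv()  # copies .env entries into os.environ; existing vars win
    except ImportError:
        pass  # rely on variables already exported in the shell
    missing = missing_keys()
    if missing:
        print("Missing API keys: " + ", ".join(missing))
        return 1
    print("All API keys are set.")
    return 0


if __name__ == "__main__":
    main()
```

Running this before `python main.py` points you straight at the provider whose key is absent.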

4. Building the AI Voice Agent: A Step-by-Step Guide

Let's dive into building your AI Voice Agent. First, here is the complete, runnable code. Save it as main.py, the filename used when running the agent in Step 5.1.

```python
import asyncio

from videosdk.agents import Agent, AgentSession, CascadingPipeline, JobContext, RoomOptions, WorkerJob, ConversationFlow
from videosdk.plugins.silero import SileroVAD
from videosdk.plugins.turn_detector import TurnDetector, pre_download_model
from videosdk.plugins.deepgram import DeepgramSTT
from videosdk.plugins.openai import OpenAILLM
from videosdk.plugins.elevenlabs import ElevenLabsTTS

# Pre-download the Turn Detector model before the agent starts
pre_download_model()

agent_instructions = (
    "You are a professional and friendly AI Voice Call Assistant. "
    "Your primary role is to facilitate seamless, natural-sounding voice conversations with users over the phone. "
    "You can answer general questions, provide information, assist with scheduling, and guide users through automated processes. "
    "Always speak clearly, use polite language, and confirm understanding when necessary. "
    "You must never collect sensitive personal information (such as credit card numbers or social security numbers), "
    "and you cannot provide legal, medical, or financial advice. "
    "If a user requests such information or advice, politely inform them that you are unable to assist "
    "and recommend contacting a qualified professional. "
    "Always respect user privacy and comply with data protection guidelines. "
    "If you are unsure about a request, escalate or suggest speaking with a human representative."
)


class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        # Greeting
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        # Farewell
        await self.session.say("Goodbye!")


async def start_session(context: JobContext):
    # Create agent and conversation flow
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)

    # Create pipeline: STT -> LLM -> TTS, plus VAD and turn detection
    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8),
    )

    session = AgentSession(
        agent=agent,
        pipeline=pipeline,
        conversation_flow=conversation_flow,
    )

    try:
        await context.connect()
        await session.start()
        # Keep the session running until manually terminated
        await asyncio.Event().wait()
    finally:
        # Clean up resources when done
        await session.close()
        await context.shutdown()


def make_context() -> JobContext:
    room_options = RoomOptions(
        # room_id="YOUR_MEETING_ID",  # Set to join a pre-created room; omit to auto-create
        name="VideoSDK Cascaded Agent",
        playground=True,
    )
    return JobContext(room_options=room_options)


if __name__ == "__main__":
    job = WorkerJob(entrypoint=start_session, jobctx=make_context)
    job.start()
```

Now, let's break down each part of the code to understand how it works.

Step 4.1: Generating a VideoSDK Meeting ID

Before starting the agent, you need a meeting (room) for the voice call. You can auto-generate a meeting ID or create one manually via the VideoSDK API.

To create a meeting ID using curl:

```bash
curl -X POST "https://api.videosdk.live/v2/rooms" \
  -H "Authorization: YOUR_VIDEOSDK_API_KEY"
```
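If you would rather create the room from Python, here is a minimal standard-library sketch of the same POST request. It sends whatever credential you pass in the `Authorization` header, exactly as the curl command does, and reads the `roomId` field from the JSON response described below. The helper names are hypothetical, and you should confirm in the VideoSDK API reference which credential this endpoint expects.

```python
import json
import urllib.request

ROOMS_URL = "https://api.videosdk.live/v2/rooms"


def build_create_room_request(auth: str) -> urllib.request.Request:
    """Build the same request as the curl example: POST with no body."""
    return urllib.request.Request(
        ROOMS_URL,
        data=None,  # no request body, like curl -X POST without -d
        headers={"Authorization": auth},
        method="POST",
    )


def create_room(auth: str, timeout: float = 10.0) -> str:
    """Send the request and return the roomId from the JSON response."""
    request = build_create_room_request(auth)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["roomId"]
```

For example, `create_room(os.environ["VIDEOSDK_API_KEY"])` returns an ID you could pass as `room_id` in `RoomOptions`. Non-2xx responses surface as `urllib.error.HTTPError`, which is usually the quickest sign of a wrong credential.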

Replace YOUR_VIDEOSDK_API_KEY with your actual VideoSDK API key. The response will include a roomId you can use.

In the code above, RoomOptions omits room_id, so a room is created automatically, and playground=True provides a test URL. For production, you would set room_id to your generated meeting ID.

Step 4.2: Creating the Custom Agent Class (MyVoiceAgent)

Let's look at how to define your agent's persona and behavior.

```python
agent_instructions = (
    "You are a professional and friendly AI Voice Call Assistant. "
    "Your primary role is to facilitate seamless, natural-sounding voice conversations with users over the phone. "
    "You can answer general questions, provide information, assist with scheduling, and guide users through automated processes. "
    "Always speak clearly, use polite language, and confirm understanding when necessary. "
    "You must never collect sensitive personal information (such as credit card numbers or social security numbers), "
    "and you cannot provide legal, medical, or financial advice. "
    "If a user requests such information or advice, politely inform them that you are unable to assist "
    "and recommend contacting a qualified professional. "
    "Always respect user privacy and comply with data protection guidelines. "
    "If you are unsure about a request, escalate or suggest speaking with a human representative."
)


class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        await self.session.say("Goodbye!")
```

- The agent_instructions string defines the agent's persona, capabilities, and constraints.

- The MyVoiceAgent class inherits from Agent and customizes the greeting and farewell behavior with on_enter and on_exit.
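Because the instructions bundle a persona, capabilities, and constraints into one long string, they can get hard to maintain as the agent grows. One option is to keep each part as a list and assemble the string in code. The sketch below is plain Python with hypothetical names (`build_instructions`, `PERSONA`, `CAPABILITIES`, `CONSTRAINTS`); whether a bulleted prompt performs better than the single paragraph used in this tutorial is a judgment call worth testing with your own calls.

```python
from typing import Sequence

PERSONA = "You are a professional and friendly AI Voice Call Assistant."
CAPABILITIES = [
    "Answer general questions.",
    "Provide information.",
    "Assist with scheduling.",
    "Guide users through automated processes.",
]
CONSTRAINTS = [
    "Never collect sensitive personal information (such as credit card numbers or social security numbers).",
    "Do not provide legal, medical, or financial advice; recommend contacting a qualified professional instead.",
    "If you are unsure about a request, escalate or suggest speaking with a human representative.",
]


def build_instructions(
    persona: str, capabilities: Sequence[str], constraints: Sequence[str]
) -> str:
    """Assemble persona, capabilities, and constraints into one prompt string."""
    parts = [persona, "Capabilities:"]
    parts += [f"- {item}" for item in capabilities]
    parts.append("Constraints:")
    parts += [f"- {item}" for item in constraints]
    return "\n".join(parts)


if __name__ == "__main__":
    print(build_instructions(PERSONA, CAPABILITIES, CONSTRAINTS))
```

The result can be passed in place of the literal string, as in `super().__init__(instructions=build_instructions(PERSONA, CAPABILITIES, CONSTRAINTS))`.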

Step 4.3: Defining the Core Pipeline (CascadingPipeline and Plugins)

The pipeline handles all audio and language processing.

```python
pipeline = CascadingPipeline(
    stt=DeepgramSTT(model="nova-2", language="en"),
    llm=OpenAILLM(model="gpt-4o"),
    tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
    vad=SileroVAD(threshold=0.35),
    turn_detector=TurnDetector(threshold=0.8),
)
```

- DeepgramSTT: Converts user speech to text.

- OpenAILLM: Processes the text and generates a response.

- ElevenLabsTTS: Converts the response text to natural-sounding speech.

- SileroVAD: Detects when the user is speaking.

- TurnDetector: Determines when the user has finished speaking.
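Since each stage is a separate constructor argument, swapping models is easiest when their settings live outside the code. Below is a minimal configuration sketch: the defaults mirror the values in the pipeline above, while the environment variable names (`AGENT_STT_MODEL`, `AGENT_VAD_THRESHOLD`, and so on) are hypothetical. The range check assumes both thresholds are probabilities between 0 and 1, as the tutorial's values 0.35 and 0.8 suggest.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class PipelineConfig:
    # Defaults mirror the CascadingPipeline arguments used in this tutorial
    stt_model: str = "nova-2"
    stt_language: str = "en"
    llm_model: str = "gpt-4o"
    tts_model: str = "eleven_flash_v2_5"
    vad_threshold: float = 0.35
    turn_threshold: float = 0.8

    def __post_init__(self) -> None:
        # Assumption: both thresholds are probabilities in [0, 1]
        for name in ("vad_threshold", "turn_threshold"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1, got {value}")

    @classmethod
    def from_env(cls, env: Mapping[str, str] = os.environ) -> "PipelineConfig":
        """Read overrides from hypothetical AGENT_* variables, else use defaults."""
        d = cls()
        return cls(
            stt_model=env.get("AGENT_STT_MODEL", d.stt_model),
            stt_language=env.get("AGENT_STT_LANGUAGE", d.stt_language),
            llm_model=env.get("AGENT_LLM_MODEL", d.llm_model),
            tts_model=env.get("AGENT_TTS_MODEL", d.tts_model),
            vad_threshold=float(env.get("AGENT_VAD_THRESHOLD", d.vad_threshold)),
            turn_threshold=float(env.get("AGENT_TURN_THRESHOLD", d.turn_threshold)),
        )
```

Inside start_session you would then write, for example, `DeepgramSTT(model=cfg.stt_model, language=cfg.stt_language)` and `SileroVAD(threshold=cfg.vad_threshold)`, so trying a different model becomes an environment change rather than a code edit.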

This modular pipeline makes it easy to swap out components as needed. If you want to experiment with your agent in a safe, interactive environment, the AI Agent playground is a great resource for testing and refining your setup.

Step 4.4: Managing the Session and Startup Logic

The session orchestrates the conversation and manages resources.

```python
async def start_session(context: JobContext):
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)
    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8),
    )
    session = AgentSession(agent=agent, pipeline=pipeline, conversation_flow=conversation_flow)
    try:
        await context.connect()
        await session.start()
        # Block until the process is stopped
        await asyncio.Event().wait()
    finally:
        # Always release the session and the room connection
        await session.close()
        await context.shutdown()


def make_context() -> JobContext:
    room_options = RoomOptions(
        name="VideoSDK Cascaded Agent",
        playground=True,
    )
    return JobContext(room_options=room_options)


if __name__ == "__main__":
    job = WorkerJob(entrypoint=start_session, jobctx=make_context)
    job.start()
```

- start_session sets up the agent, pipeline, and session, then starts the call.

- make_context configures the room and enables the test playground.

- The __main__ block starts the agent as a worker job.
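The try/finally in start_session is what guarantees cleanup. The sketch below isolates that pattern with hypothetical `FakeResource` objects standing in for the context and session, so you can see that the finally block runs both when the wait ends cooperatively (`stop.set()`) and when the task is cancelled. Under `asyncio.run` on Python 3.11+, Ctrl+C cancels the main task; whether `WorkerJob` stops the session the same way is an assumption here.

```python
import asyncio
from typing import List


class FakeResource:
    """Hypothetical stand-in for JobContext / AgentSession that logs calls."""

    def __init__(self, name: str, log: List[str]) -> None:
        self.name = name
        self.log = log

    async def open(self) -> None:
        self.log.append(f"{self.name}.open")

    async def close(self) -> None:
        self.log.append(f"{self.name}.close")


async def run_until_stopped(stop: asyncio.Event, log: List[str]) -> None:
    context = FakeResource("context", log)
    session = FakeResource("session", log)
    try:
        await context.open()  # plays the role of context.connect()
        await session.open()  # plays the role of session.start()
        await stop.wait()     # returns only when stop.set() is called
    finally:
        # Runs on normal return, on exceptions, and on task cancellation
        await session.close()  # plays the role of session.close()
        await context.close()  # plays the role of context.shutdown()


async def demo(use_cancel: bool) -> List[str]:
    log: List[str] = []
    stop = asyncio.Event()
    task = asyncio.create_task(run_until_stopped(stop, log))
    await asyncio.sleep(0.01)  # let the task reach stop.wait()
    if use_cancel:
        task.cancel()  # abrupt stop, like cancelling the main task
    else:
        stop.set()     # cooperative stop
    try:
        await task
    except asyncio.CancelledError:
        pass
    return log


if __name__ == "__main__":
    print(asyncio.run(demo(use_cancel=True)))
```

Both paths produce the same order: open context, open session, close session, close context. Keeping an explicit `stop` event instead of an anonymous `asyncio.Event()` also gives you a hook for shutting the agent down from code.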

5. Running and Testing the Agent

Step 5.1: Running the Python Script

Make sure your .env file is configured and your virtual environment is activated. Then, run:

```bash
python main.py
```

The script will print a test playground URL in the console.

Step 5.2: Interacting with the Agent in the Playground

- Find the playground link in your terminal output (it will look like a VideoSDK meeting URL).

- Open the link in your browser.

- Join as a participant and use your microphone to speak with the agent.

- The agent will greet you and respond to your questions in real time.

To gracefully shut down the agent, press Ctrl+C in your terminal. This ensures all resources are cleaned up properly.

6. Advanced Features and Customizations

Extending Functionality with Custom Tools

You can add custom tools (function_tool) to extend your agent's capabilities, such as booking appointments, accessing databases, or integrating with external APIs.
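As an example of what a tool body might contain, here is a hypothetical appointment-slot lookup written as a plain async function over an in-memory calendar (`BOOKED`). It does not import VideoSDK. Tool-calling frameworks typically describe a tool to the LLM through its name, typed parameters, and docstring, which is why those are spelled out carefully; that `function_tool` works this way is an assumption to check against the VideoSDK docs.

```python
import asyncio
from datetime import datetime, timedelta
from typing import Dict, List, Set

# Hypothetical in-memory calendar: date (YYYY-MM-DD) -> booked "HH:MM" slots
BOOKED: Dict[str, Set[str]] = {"2025-01-15": {"09:00", "10:30"}}


async def find_open_slots(
    date: str, start_hour: int = 9, end_hour: int = 12, step_minutes: int = 30
) -> Dict[str, object]:
    """Return free appointment slots on a date given as YYYY-MM-DD.

    Slots start every step_minutes from start_hour up to (not including)
    end_hour. Raises ValueError for a malformed date or a bad step.
    """
    if step_minutes <= 0:
        raise ValueError("step_minutes must be positive")
    day = datetime.strptime(date, "%Y-%m-%d")
    current = day.replace(hour=start_hour)
    end = day.replace(hour=end_hour)
    booked = BOOKED.get(date, set())
    free: List[str] = []
    while current < end:
        slot = current.strftime("%H:%M")
        if slot not in booked:
            free.append(slot)
        current += timedelta(minutes=step_minutes)
    # Return a JSON-serializable dict the LLM can read back to the caller
    return {"date": date, "open_slots": free}


if __name__ == "__main__":
    print(asyncio.run(find_open_slots("2025-01-15")))
```

In a real agent, the in-memory dictionary would be replaced by a database or calendar API call, and the function would be exposed to the LLM as a method on your Agent subclass decorated with function_tool.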

Exploring Other Plugins

The VideoSDK AI Agents framework supports a variety of plugins:

- STT: Deepgram (cost-effective; used in this tutorial)

- TTS: ElevenLabs (used in this tutorial), Cartesia, Rime, Deepgram

- LLM: OpenAI (GPT-4o in this tutorial), Google Gemini

- VAD: SileroVAD

- Turn Detection: TurnDetector

Experiment with different combinations to optimize for quality, speed, or cost.
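When comparing combinations for speed, it helps to measure rather than guess. Below is a small timing helper built on `time.perf_counter` and an async context manager. The stages here are `asyncio.sleep` stand-ins; the assumption is that you would wrap real provider calls the same way in a separate benchmark script. Per-stage totals are only a rough first signal, since a streaming pipeline can overlap stages.

```python
import asyncio
import time
from contextlib import asynccontextmanager
from typing import AsyncIterator, Dict


@asynccontextmanager
async def timed(stage: str, results: Dict[str, float]) -> AsyncIterator[None]:
    """Record wall-clock milliseconds spent inside the block under `stage`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        results[stage] = (time.perf_counter() - start) * 1000.0


async def fake_stage(seconds: float) -> None:
    await asyncio.sleep(seconds)  # stand-in for an STT, LLM, or TTS call


async def measure_turn() -> Dict[str, float]:
    results: Dict[str, float] = {}
    async with timed("stt", results):
        await fake_stage(0.02)
    async with timed("llm", results):
        await fake_stage(0.05)
    async with timed("tts", results):
        await fake_stage(0.03)
    return results


if __name__ == "__main__":
    for stage, ms in asyncio.run(measure_turn()).items():
        print(f"{stage}: {ms:.1f} ms")
```

Running the same measurement across several calls and providers gives you numbers to weigh against quality and cost.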

7. Troubleshooting Common Issues

API Key and Authentication Errors

- Double-check your .env file for typos or missing keys.

- Ensure your API keys are active and have the correct permissions.

Audio Input/Output Problems

- Make sure your microphone and speakers are working.

- Test in the playground environment for browser compatibility.

Dependency and Version Conflicts

- Use Python 3.11+ and a fresh virtual environment.

- Run pip install --upgrade followed by the package names from Step 2 (for example, pip install --upgrade videosdk-agents) to bring them up to date.
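To see what is actually installed before chasing a version conflict, a short diagnostic script can report the Python version and the installed version of each package from Step 2. It uses only `sys` and `importlib.metadata` from the standard library; `None` means the package is not installed in the active environment.

```python
import sys
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Iterable, Optional, Tuple

# Package names from Step 2
PACKAGES = [
    "videosdk-agents",
    "videosdk-plugins-silero",
    "videosdk-plugins-turn-detector",
    "videosdk-plugins-deepgram",
    "videosdk-plugins-openai",
    "videosdk-plugins-elevenlabs",
]


def python_ok(minimum: Tuple[int, int] = (3, 11)) -> bool:
    """True if the running interpreter meets the recommended minimum."""
    return sys.version_info[:2] >= minimum


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None."""
    result: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            result[name] = version(name)
        except PackageNotFoundError:
            result[name] = None
    return result


if __name__ == "__main__":
    status = "OK" if python_ok() else "older than 3.11"
    print(f"Python {sys.version.split()[0]}: {status}")
    for name, ver in installed_versions(PACKAGES).items():
        print(f"{name}: {ver or 'NOT INSTALLED'}")
```

Run it with the same interpreter you use for main.py; a package showing as not installed usually means the virtual environment is not activated.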

8. Conclusion

In this tutorial, you built a working AI Voice Agent for AI voice calls using Python and the VideoSDK AI Agents framework. You learned how to set up the environment, configure plugins, and run your agent in a test playground.

From here, you can extend your agent with custom tools, integrate it with CRMs, or deploy it to production. For guidance on AI Voice Agent deployment, check out the VideoSDK documentation, which covers best practices for launching your agent in real-world scenarios, and experiment with different plugin combinations to build more capable conversational voice applications.


FAQ