Introduction to AI Voice Agents in Telemedicine

AI Voice Agents are intelligent systems designed to interact with users through natural language. They can understand spoken language, process the information, and respond in a human-like manner. In the telemedicine industry, AI Voice Agents play a crucial role by facilitating patient interactions, providing information, and assisting with scheduling appointments.

What is an AI Voice Agent?

An AI Voice Agent is a software application that uses artificial intelligence to understand and respond to human speech. It combines Speech-to-Text (STT), a Large Language Model (LLM), and Text-to-Speech (TTS) to create a seamless conversational experience.

Why are they important for the Telemedicine Industry?

In telemedicine, AI Voice Agents enhance patient care by providing quick access to medical information, assisting in appointment scheduling, and offering reminders for medication and follow-up visits. They improve efficiency and accessibility, making healthcare services more reachable.

Core Components of a Voice Agent

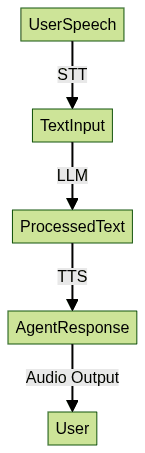

- STT (Speech-to-Text): Converts spoken language into text.

- LLM (Large Language Model): Processes the text to understand context and intent, and generates a response.

- TTS (Text-to-Speech): Converts the processed text back into spoken language.
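
The three components above form a chain, which can be sketched in plain Python. This is only an illustration under stated assumptions: `fake_stt`, `fake_llm`, and `fake_tts` are hypothetical stand-ins that work on bytes and strings, not real speech models or VideoSDK classes.

```python
from typing import Callable


def fake_stt(audio: bytes) -> str:
    """Hypothetical STT stand-in: decode the bytes as UTF-8 text."""
    return audio.decode("utf-8")


def fake_llm(text: str) -> str:
    """Hypothetical LLM stand-in: return a canned reply that echoes the request."""
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    """Hypothetical TTS stand-in: encode the reply text as bytes."""
    return text.encode("utf-8")


def run_cascade(
    audio: bytes,
    stt: Callable[[bytes], str],
    llm: Callable[[str], str],
    tts: Callable[[str], bytes],
) -> bytes:
    """Pass one utterance through the STT -> LLM -> TTS chain."""
    transcript = stt(audio)   # speech in, text out
    reply = llm(transcript)   # text in, text out
    return tts(reply)         # text in, speech out


if __name__ == "__main__":
    print(run_cascade(b"book an appointment", fake_stt, fake_llm, fake_tts))
```

Because each stage is just a callable, any one of them can be replaced without touching the other two, which is the main appeal of a cascaded design.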

For a comprehensive understanding, refer to the AI voice Agent core components overview.

What You'll Build in This Tutorial

In this tutorial, you'll build an AI Voice Agent specifically designed for telemedicine applications using the VideoSDK framework. You'll learn to set up the environment, implement the agent, and test it in a real-world scenario.

Architecture and Core Concepts

High-Level Architecture Overview

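The architecture of an AI Voice Agent involves several components working together to process user input and generate a response. At a high level, the user's speech is transcribed by STT, the resulting text is passed to the LLM, and the LLM's reply is converted back into audio by TTS.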

Understanding Key Concepts in the VideoSDK Framework

- Agent: The core class representing your bot, responsible for managing interactions.

- CascadingPipeline: Manages the flow of audio processing, integrating STT, LLM, and TTS. Learn more about the Cascading pipeline in AI voice Agents.

- VAD & TurnDetector: These components help the agent determine when to listen and when to speak.
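
To make the last bullet concrete, here is a toy sketch of the two decisions involved. It assumes a simple energy threshold for voice activity and a fixed run of silent frames for end of turn. Real components such as Silero VAD and the VideoSDK TurnDetector rely on trained models instead, and every name and number below is illustrative.

```python
from typing import Optional, Sequence


def is_speech(frame: Sequence[float], energy_threshold: float = 0.01) -> bool:
    """Toy VAD: a frame is speech if its mean squared amplitude exceeds the threshold."""
    if not frame:
        return False
    energy = sum(sample * sample for sample in frame) / len(frame)
    return energy > energy_threshold


def end_of_turn_index(
    frames: Sequence[Sequence[float]],
    silence_frames_needed: int = 3,
    energy_threshold: float = 0.01,
) -> Optional[int]:
    """Return the index of the frame where the user's turn is considered over.

    The turn ends once speech has been heard and is followed by
    `silence_frames_needed` consecutive silent frames. Returns None otherwise.
    """
    heard_speech = False
    silent_run = 0
    for index, frame in enumerate(frames):
        if is_speech(frame, energy_threshold):
            heard_speech = True
            silent_run = 0  # any speech resets the silence counter
        elif heard_speech:
            silent_run += 1
            if silent_run >= silence_frames_needed:
                return index
    return None


if __name__ == "__main__":
    loud, quiet = [0.5] * 4, [0.0] * 4
    print(end_of_turn_index([quiet, loud, loud, quiet, quiet, quiet, loud]))
```

The split mirrors the bullet: the first function answers "is someone talking right now?", the second answers "have they finished, so the agent may reply?".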

Setting Up the Development Environment

Prerequisites

Before you begin, ensure you have Python 3.11+ installed and a VideoSDK account. You can sign up at the VideoSDK website.
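
If you are unsure which interpreter your shell picks up, a short check like the following can confirm the Python requirement. It is a minimal sketch: `meets_minimum` is a hypothetical helper name, and the (3, 11) minimum is taken from the prerequisite stated above.

```python
import sys

MIN_VERSION = (3, 11)  # from the prerequisite: Python 3.11+


def meets_minimum(version_info=sys.version_info, minimum=MIN_VERSION) -> bool:
    """Compare the (major, minor) part of a version tuple against the minimum."""
    return tuple(version_info[:2]) >= minimum


if __name__ == "__main__":
    if meets_minimum():
        print("Python version OK")
    else:
        found = sys.version.split()[0]
        print(f"Python {MIN_VERSION[0]}.{MIN_VERSION[1]}+ is required, found {found}")
```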

Step 1: Create a Virtual Environment

Create a virtual environment to manage dependencies:

```bash
python -m venv venv
source venv/bin/activate  # On Windows use `venv\Scripts\activate`
```

Step 2: Install Required Packages

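Install the necessary packages using pip. The agent code imports from videosdk.agents and videosdk.plugins.*, so if those imports fail after installation, check the VideoSDK documentation for the exact agent and plugin package names:

```bash
pip install videosdk
pip install python-dotenv
```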
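
After installing, you can verify that the modules the tutorial imports are actually resolvable before running the agent. The sketch below uses only the standard library. `is_importable` is a hypothetical helper, `videosdk.agents` is taken from the import lines in this tutorial, and `dotenv` is the import name of the python-dotenv package.

```python
import importlib.util


def is_importable(module_name: str) -> bool:
    """Return True if Python can locate the module, without running the agent code."""
    try:
        return importlib.util.find_spec(module_name) is not None
    except (ImportError, ValueError):
        # A missing parent package (e.g. "videosdk") raises ModuleNotFoundError,
        # which is a subclass of ImportError.
        return False


if __name__ == "__main__":
    for name in ("videosdk.agents", "dotenv"):
        status = "found" if is_importable(name) else "MISSING"
        print(f"{name}: {status}")
```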

Step 3: Configure API Keys in a .env File

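Create a .env file in your project directory and add your VideoSDK API key. The Deepgram, OpenAI, and ElevenLabs plugins used later call external services, so add the credentials those services require to the same file:

```
VIDEOSDK_API_KEY=your_api_key_here
```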
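
A common failure is starting the agent with the placeholder value still in place. The sketch below shows, with the standard library only, roughly what a .env loader does and how to fail early on a missing key. `parse_env_text` and `missing_keys` are hypothetical helpers written for illustration; the python-dotenv package installed earlier handles many more cases.

```python
from typing import Dict, Iterable, List


def parse_env_text(text: str) -> Dict[str, str]:
    """Parse simple KEY=value lines, skipping blanks, comments, and malformed lines."""
    values: Dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(
    values: Dict[str, str],
    required: Iterable[str],
    placeholders: Iterable[str] = ("your_api_key_here",),
) -> List[str]:
    """Return required keys that are absent, empty, or still set to a placeholder."""
    blocked = set(placeholders)
    return [key for key in required if not values.get(key) or values[key] in blocked]


if __name__ == "__main__":
    sample = "# credentials\nVIDEOSDK_API_KEY=your_api_key_here\n"
    print(missing_keys(parse_env_text(sample), ["VIDEOSDK_API_KEY"]))
```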

Building the AI Voice Agent: A Step-by-Step Guide

Let's dive into building the AI Voice Agent. Below is the complete code you'll be working with:

```python
import asyncio

from dotenv import load_dotenv
from videosdk.agents import Agent, AgentSession, CascadingPipeline, JobContext, RoomOptions, WorkerJob, ConversationFlow
from videosdk.plugins.silero import SileroVAD
from videosdk.plugins.turn_detector import TurnDetector, pre_download_model
from videosdk.plugins.deepgram import DeepgramSTT
from videosdk.plugins.openai import OpenAILLM
from videosdk.plugins.elevenlabs import ElevenLabsTTS

# Load the API keys from the .env file created in Step 3.
load_dotenv()

# Fetch the turn-detector model before any session starts.
pre_download_model()

agent_instructions = "You are a helpful healthcare assistant AI Voice Agent designed specifically for telemedicine applications. Your primary role is to assist users by answering questions about symptoms, providing general health information, and helping to schedule appointments with healthcare professionals. You are equipped to handle inquiries related to common medical conditions and telemedicine services.\n\nCapabilities:\n1. Answer questions about common symptoms and suggest possible actions.\n2. Provide information on telemedicine services and how to access them.\n3. Assist in scheduling appointments with healthcare providers.\n4. Offer reminders for medication and upcoming appointments.\n\nConstraints and Limitations:\n1. You are not a licensed medical professional and cannot provide medical diagnoses or treatment plans.\n2. Always include a disclaimer advising users to consult a healthcare professional for medical advice.\n3. You must respect user privacy and comply with healthcare data regulations, such as HIPAA.\n4. You cannot access or store personal health information without explicit user consent.\n5. Limit interactions to general health information and appointment scheduling; do not engage in emergency medical situations."


class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        # Spoken when the agent joins the session.
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        # Spoken when the agent leaves the session.
        await self.session.say("Goodbye!")


async def start_session(context: JobContext):
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)

    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8)
    )

    session = AgentSession(
        agent=agent,
        pipeline=pipeline,
        conversation_flow=conversation_flow
    )

    try:
        await context.connect()
        await session.start()
        # Keep the session alive until the process is stopped.
        await asyncio.Event().wait()
    finally:
        await session.close()
        await context.shutdown()


def make_context() -> JobContext:
    room_options = RoomOptions(
        name="VideoSDK Cascaded Agent",
        playground=True
    )

    return JobContext(room_options=room_options)


if __name__ == "__main__":
    job = WorkerJob(entrypoint=start_session, jobctx=make_context)
    job.start()
```

Step 4.1: Generating a VideoSDK Meeting ID

To interact with the agent, you'll need a meeting ID. You can generate one using the VideoSDK API:

```bash
curl -X POST https://api.videosdk.live/v1/meetings -H "Authorization: Bearer YOUR_API_KEY"
```
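
If you prefer to stay in Python, the same request can be assembled with the standard library. This is a sketch that only builds the request object. The URL and the Authorization header are copied from the curl command above, `build_meeting_request` is a hypothetical helper name, and the shape of the response is not assumed here.

```python
import urllib.request

# Endpoint copied from the curl command in this step.
MEETINGS_URL = "https://api.videosdk.live/v1/meetings"


def build_meeting_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) the POST request that asks for a new meeting."""
    return urllib.request.Request(
        MEETINGS_URL,
        method="POST",
        headers={"Authorization": f"Bearer {api_key}"},
    )


if __name__ == "__main__":
    request = build_meeting_request("YOUR_API_KEY")
    print(request.get_method(), request.full_url)
    # To send it, pass the request to urllib.request.urlopen() and
    # inspect the response body for the meeting ID.
```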

Step 4.2: Creating the Custom Agent Class

The MyVoiceAgent class is where we define the agent's behavior. It inherits from the Agent class and uses the agent_instructions to guide interactions. Its on_enter and on_exit hooks speak a greeting when the agent joins and a goodbye when it leaves.

```python
class MyVoiceAgent(Agent):
    def __init__(self):
        super().__init__(instructions=agent_instructions)

    async def on_enter(self):
        await self.session.say("Hello! How can I help?")

    async def on_exit(self):
        await self.session.say("Goodbye!")
```
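
The hook pattern used by this class can be tried without any SDK installed. The sketch below is an assumption-laden stand-in: `BaseAgent`, `RecordingSession`, and `run_lifecycle` are hypothetical names that imitate the shape of the code above, not VideoSDK's implementation.

```python
import asyncio
from typing import List, Optional


class RecordingSession:
    """Hypothetical session stand-in that records what would be spoken."""

    def __init__(self) -> None:
        self.spoken: List[str] = []

    async def say(self, text: str) -> None:
        self.spoken.append(text)


class BaseAgent:
    """Hypothetical base class exposing the same two lifecycle hooks."""

    def __init__(self, instructions: str) -> None:
        self.instructions = instructions
        self.session: Optional[RecordingSession] = None

    async def on_enter(self) -> None:
        pass

    async def on_exit(self) -> None:
        pass


class GreeterAgent(BaseAgent):
    async def on_enter(self) -> None:
        await self.session.say("Hello! How can I help?")

    async def on_exit(self) -> None:
        await self.session.say("Goodbye!")


async def run_lifecycle(agent: BaseAgent, session: RecordingSession) -> List[str]:
    """Attach the session, then call the hooks in the order a runtime would."""
    agent.session = session
    await agent.on_enter()
    # The conversation itself would take place here.
    await agent.on_exit()
    return session.spoken


if __name__ == "__main__":
    print(asyncio.run(run_lifecycle(GreeterAgent("demo"), RecordingSession())))
```

Swapping the real session for a recording one is also a convenient way to unit test greeting and farewell text.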

Step 4.3: Defining the Core Pipeline

The CascadingPipeline integrates various plugins to process audio input and output. Each plugin serves a specific purpose:

- STT (DeepgramSTT): Converts speech to text.

- LLM (OpenAILLM): Processes text to generate responses.

- TTS (ElevenLabsTTS): Converts text to speech for output.

- VAD (SileroVAD): Detects when the user is speaking. More details can be found in the Silero Voice Activity Detection documentation.

- TurnDetector: Helps manage conversational turns. Explore the Turn detector for AI voice Agents for more information.

```python
pipeline = CascadingPipeline(
    stt=DeepgramSTT(model="nova-2", language="en"),
    llm=OpenAILLM(model="gpt-4o"),
    tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
    vad=SileroVAD(threshold=0.35),
    turn_detector=TurnDetector(threshold=0.8)
)
```
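
The two threshold arguments are easier to reason about with a toy example. The sketch below assumes a detector emits a score between 0 and 1 and acts when the score reaches its threshold. That interpretation is an assumption made for illustration, so consult the plugin documentation for the exact semantics. `frames_flagged` and the sample scores are hypothetical.

```python
from typing import List, Sequence


def frames_flagged(scores: Sequence[float], threshold: float) -> List[bool]:
    """Mark each score that reaches the threshold."""
    return [score >= threshold for score in scores]


if __name__ == "__main__":
    scores = [0.10, 0.40, 0.70, 0.90]  # made-up detector outputs
    for threshold in (0.35, 0.8):
        flagged = frames_flagged(scores, threshold)
        print(f"threshold={threshold}: {sum(flagged)} of {len(scores)} flagged")
```

Under this assumption, a lower threshold reacts to weaker evidence and a higher one waits for stronger evidence, so the two values trade responsiveness against false triggers.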

Step 4.4: Managing the Session and Startup Logic

The start_session function initializes the agent session, setting up the conversation flow and pipeline. Its try/finally block keeps the session running until the process is stopped, then closes the session and shuts down the context. The make_context function prepares the session context with room options.

```python
async def start_session(context: JobContext):
    agent = MyVoiceAgent()
    conversation_flow = ConversationFlow(agent)

    pipeline = CascadingPipeline(
        stt=DeepgramSTT(model="nova-2", language="en"),
        llm=OpenAILLM(model="gpt-4o"),
        tts=ElevenLabsTTS(model="eleven_flash_v2_5"),
        vad=SileroVAD(threshold=0.35),
        turn_detector=TurnDetector(threshold=0.8)
    )

    session = AgentSession(
        agent=agent,
        pipeline=pipeline,
        conversation_flow=conversation_flow
    )

    try:
        await context.connect()
        await session.start()
        await asyncio.Event().wait()
    finally:
        await session.close()
        await context.shutdown()


def make_context() -> JobContext:
    room_options = RoomOptions(
        name="VideoSDK Cascaded Agent",
        playground=True
    )

    return JobContext(room_options=room_options)


if __name__ == "__main__":
    job = WorkerJob(entrypoint=start_session, jobctx=make_context)
    job.start()
```
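
The try/finally shape in start_session is worth isolating, because it is what guarantees cleanup when the process is interrupted. The following sketch reproduces the pattern with plain asyncio. `RecordingSession`, `run_until_stopped`, and `demo` are hypothetical names, and cancellation stands in for whatever stops the real worker.

```python
import asyncio
from typing import List


class RecordingSession:
    """Hypothetical stand-in that logs start and close calls."""

    def __init__(self) -> None:
        self.events: List[str] = []

    async def start(self) -> None:
        self.events.append("start")

    async def close(self) -> None:
        self.events.append("close")


async def run_until_stopped(session: RecordingSession, stop: asyncio.Event) -> None:
    """Start the session, wait indefinitely, and always close on the way out."""
    try:
        await session.start()
        await stop.wait()  # never set in this demo, so this blocks like Event().wait()
    finally:
        await session.close()  # runs on normal exit and on cancellation


async def demo() -> List[str]:
    session = RecordingSession()
    task = asyncio.create_task(run_until_stopped(session, asyncio.Event()))
    await asyncio.sleep(0)  # let the task start and block on stop.wait()
    task.cancel()           # simulate the process being stopped
    try:
        await task
    except asyncio.CancelledError:
        pass
    return session.events


if __name__ == "__main__":
    print(asyncio.run(demo()))
```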

Running and Testing the Agent

Step 5.1: Running the Python Script

Run the script using the following command:

```bash
python main.py
```

Step 5.2: Interacting with the Agent in the Playground

Once the script is running, check the console for a playground link. Open the link in your browser to interact with the agent. Speak to the agent and observe how it responds to your queries.

Advanced Features and Customizations

Extending Functionality with Custom Tools

You can extend the agent's capabilities by integrating custom tools. These tools can perform specific tasks or provide additional data processing.
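
As a framework-neutral illustration of the idea, the sketch below registers plain functions as named tools and dispatches calls to them. It is not VideoSDK's tool API, which has its own registration mechanism. `tool`, `call_tool`, and `book_appointment` are hypothetical names, and the booking function only formats a string.

```python
from typing import Callable, Dict

TOOLS: Dict[str, Callable[..., str]] = {}


def tool(func: Callable[..., str]) -> Callable[..., str]:
    """Decorator that registers a function under its own name."""
    TOOLS[func.__name__] = func
    return func


@tool
def book_appointment(doctor: str, date: str) -> str:
    """Hypothetical tool: a real one would call a scheduling system."""
    return f"Requested appointment with {doctor} on {date}"


def call_tool(name: str, **kwargs: str) -> str:
    """Look up a registered tool by name and invoke it with keyword arguments."""
    if name not in TOOLS:
        raise KeyError(f"Unknown tool: {name}")
    return TOOLS[name](**kwargs)


if __name__ == "__main__":
    print(call_tool("book_appointment", doctor="Dr. Lee", date="2025-01-15"))
```

In an agent, the LLM would choose the tool name and arguments, and the dispatcher's return value would be fed back into the conversation.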

Exploring Other Plugins

The VideoSDK framework supports various plugins for STT, LLM, and TTS. Explore options like Cartesia for STT or Google Gemini for LLM to enhance your agent.
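
Plugins are interchangeable because each one fulfils the same role behind a common interface. The sketch below illustrates that idea with a structural type. `SpeechToText`, `PlainSTT`, `UppercaseSTT`, and `first_words` are hypothetical and unrelated to the actual plugin classes.

```python
from typing import Protocol


class SpeechToText(Protocol):
    """Any object with a matching transcribe() method satisfies this role."""

    def transcribe(self, audio: bytes) -> str: ...


class PlainSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")


class UppercaseSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8").upper()


def first_words(stt: SpeechToText, audio: bytes, count: int = 2) -> str:
    """Caller code depends only on the role, not on a specific provider."""
    return " ".join(stt.transcribe(audio).split()[:count])


if __name__ == "__main__":
    for provider in (PlainSTT(), UppercaseSTT()):
        print(type(provider).__name__, first_words(provider, b"refill my prescription"))
```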

Troubleshooting Common Issues

API Key and Authentication Errors

Ensure your API key is correctly set in the .env file and that you have the necessary permissions.

Audio Input/Output Problems

Check your microphone and speaker settings. Because you talk to the agent through the browser playground, make sure the browser has permission to use both devices.

Dependency and Version Conflicts

Ensure all dependencies are installed and compatible with your Python version. Use a virtual environment to manage packages.
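
When chasing a version conflict, it helps to print exactly what is installed in the active environment. This sketch uses the standard library's importlib.metadata. `installed_version` is a hypothetical helper, and the two distribution names are the ones passed to pip earlier in this tutorial.

```python
from importlib import metadata
from typing import Optional


def installed_version(distribution: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(distribution)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    for name in ("videosdk", "python-dotenv"):
        print(f"{name}: {installed_version(name) or 'not installed'}")
```

Run it inside the activated virtual environment so the report reflects the packages the agent will actually use.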

Conclusion

Summary of What You've Built

In this tutorial, you've built a fully functional AI Voice Agent for telemedicine using the VideoSDK framework. You learned how to set up the environment, create the agent, and test it in a real-world scenario.

Next Steps and Further Learning

Explore additional features and plugins to enhance your agent. Consider integrating more advanced AI models or customizing the agent's responses for specific healthcare applications.


FAQ